Deploying NemoClaw on Jetson Orin: Hit Any of These 3 Pitfalls and the Install Fails

GPUS Lady

Published 2026-03-27 13:22:19

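I. Preliminaries

1.1 Environment requirements

This tutorial is written specifically for NVIDIA Jetson Orin series developer boards and targets exactly the versions below. A mismatched environment will cause the deployment to fail: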

| Component | Version |
|---|---|
| Board | NVIDIA Jetson Orin |
| JetPack | 6.2.2 (L4T R36.5.0) |
| Kernel | 5.15.185-tegra |
| OS | Ubuntu 22.04.5 LTS (aarch64) |
| Docker | iptables-legacy required |
| OpenShell | 0.0.13 |
| Node.js | v22.22.1 |
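Before going further, it can help to compare the board against the table above. The following is a minimal sketch, not part of the original procedure. It assumes that /etc/nv_tegra_release exists and that its first line looks like `# R36 (release), REVISION: 5.0, GCID: ...`; the function names `parse_l4t_release` and `check_env` are hypothetical helpers introduced here for illustration.

```shell
# Turn the first line of /etc/nv_tegra_release into "R<major>.<revision>".
# Assumed input shape: "# R36 (release), REVISION: 5.0, GCID: ..., BOARD: ..."
parse_l4t_release() {
    printf '%s\n' "$1" |
        sed -n 's/^# R\([0-9][0-9]*\) (release), REVISION: \([0-9.][0-9.]*\),.*/R\1.\2/p'
}

# Print one warning per mismatch against the versions listed in the table.
check_env() {
    local expected_l4t="R36.5.0"
    local expected_kernel="5.15.185-tegra"
    local l4t kernel arch
    l4t="$(parse_l4t_release "$(head -n 1 /etc/nv_tegra_release 2>/dev/null)")"
    kernel="$(uname -r)"
    arch="$(uname -m)"
    [ "$l4t" = "$expected_l4t" ] ||
        echo "WARN: L4T is '${l4t:-unknown}', expected $expected_l4t"
    [ "$kernel" = "$expected_kernel" ] ||
        echo "WARN: kernel is '$kernel', expected $expected_kernel"
    [ "$arch" = "aarch64" ] ||
        echo "WARN: architecture is '$arch', expected aarch64"
}
```

Running `check_env` on the board prints nothing when the L4T release, kernel and architecture match, and one warning line per mismatch otherwise.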

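1.2 Key problems to know in advance

The default Jetson Orin kernel is incompatible with the official NemoClaw install script. Running the script directly triggers three classes of fatal errors, all of which must be fixed before installing. The problems and their fixes are: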

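Problem 1: The Tegra kernel does not support iptables nf_tables. The OpenShell cluster image defaults to iptables v1.8.10 in nf_tables mode, but the Tegra 5.15 kernel lacks the required modules, so k3s crashes with:

iptables v1.8.10 (nf_tables): RULE_INSERT failed (No such file or directory)

Extension conntrack revision 0 not supported, missing kernel module?

Fix: patch the cluster image to use iptables-legacy before running the installer.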

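Problem 2: Without the br_netfilter kernel module, bridged Pod-to-Pod traffic inside the k3s cluster bypasses the iptables rules. Kubernetes ClusterIP service routing then breaks, and DNS resolution is hit hardest. The sandbox container falls into a crash-restart loop with this error:

failed to connect to OpenShell server: dns error: Temporary failure in name resolution

Root cause: DNAT rewrites the destination address of outbound packets, but when br_netfilter is not loaded the reply packets cross the Linux bridge at layer 2 (L2) and skip the reverse NAT translation in connection tracking (conntrack). The client receives a reply whose source IP does not match and drops the packet.

Fix: load the br_netfilter kernel module and enable bridge-nf-call-iptables.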
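Both conditions of this fix can be checked from the host. The sketch below is illustrative only: `module_loaded` and `check_bridge_netfilter` are hypothetical helper names, and it assumes br_netfilter is a loadable module (a module built into the kernel would not appear in /proc/modules).

```shell
# Return 0 if module $1 is listed in the /proc/modules-style text in $2.
# Only the first column (the module name) is compared, so a module that
# merely appears in another module's dependency list does not count.
module_loaded() {
    printf '%s\n' "$2" |
        awk -v m="$1" '$1 == m { found = 1 } END { exit !found }'
}

# Check the two host-side conditions described in the fix above.
check_bridge_netfilter() {
    if ! module_loaded br_netfilter "$(cat /proc/modules)"; then
        echo "br_netfilter is not loaded"
        return 1
    fi
    if [ "$(cat /proc/sys/net/bridge/bridge-nf-call-iptables 2>/dev/null)" != "1" ]; then
        echo "bridge-nf-call-iptables is not 1"
        return 1
    fi
    echo "bridge netfilter OK"
}
```

Calling `check_bridge_netfilter` before the installer gives an early, readable failure instead of a DNS error from inside the sandbox later on.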

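Problem 3: Ollama listens only on the local address 127.0.0.1

When a local Ollama instance is used for inference, it listens on 127.0.0.1:11434 by default, and that address is unreachable from inside the OpenShell sandbox container. The onboarding flow fails with:

containers cannot reach http://host.openshell.internal:11434

The container cannot reach the local inference service, so the installation hangs.

Fix: configure Ollama to listen on 0.0.0.0:11434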
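One way to tell the two situations apart is to look at the local address column of `ss -tln`. The sketch below is an illustration under assumptions: `classify_listen_addr` is a hypothetical helper, and it assumes the usual `ss -tln` layout in which the local address is the fourth column.

```shell
# Classify one `ss -tln` output line for port 11434.
# Prints "all" for a wildcard bind, "loopback" for 127.0.0.1 or [::1],
# and "other" for anything else (including empty input).
classify_listen_addr() {
    local addr
    # Assumed layout: State Recv-Q Send-Q Local-Address:Port Peer-Address:Port
    addr="$(printf '%s\n' "$1" | awk '{ print $4 }')"
    case "$addr" in
        0.0.0.0:11434|\*:11434|\[::\]:11434) echo all ;;
        127.0.0.1:11434|\[::1\]:11434)       echo loopback ;;
        *)                                   echo other ;;
    esac
}
```

For example, `classify_listen_addr "$(ss -tln | grep -m1 ':11434')"` prints `loopback` on a default Ollama install, which is the state that breaks access from the sandbox.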

II. Prerequisites (Step 0)

Before installing, set up the dependency tools, the Docker environment, and the GPU container runtime. NemoClaw needs all of them to run.

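1. Install curl

curl is used to fetch the online scripts and repository keys. Install it with:

sudo apt install curl

2. Install Docker Engine

Remove any old Docker components first, then add the official repository and install the latest release.

Remove old Docker components:

sudo apt-get remove docker docker-engine docker.io containerd runc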

Add Docker's official GPG key and apt repository:

sudo apt-get update

sudo apt-get install ca-certificates curl

sudo install -m 0755 -d /etc/apt/keyrings

sudo curl -fsSL https://download.docker.com/linux/ubuntu/gpg -o /etc/apt/keyrings/docker.asc

sudo chmod a+r /etc/apt/keyrings/docker.asc

echo \
  "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.asc] https://download.docker.com/linux/ubuntu \
  $(. /etc/os-release && echo "$VERSION_CODENAME") stable" | \
  sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

sudo apt-get update

sudo apt-get install docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

3. Install the NVIDIA Container Toolkit

curl -fsSL https://nvidia.github.io/libnvidia-container/gpgkey \
  | sudo gpg --dearmor -o /usr/share/keyrings/nvidia-container-toolkit-keyring.gpg

curl -s -L https://nvidia.github.io/libnvidia-container/stable/deb/nvidia-container-toolkit.list \
  | sed 's#deb https://#deb [signed-by=/usr/share/keyrings/nvidia-container-toolkit-keyring.gpg] https://#g' \
  | sudo tee /etc/apt/sources.list.d/nvidia-container-toolkit.list

sudo apt-get update

sudo apt-get install -y nvidia-container-toolkit

Configure the toolkit for all runtimes (the crio lines can be skipped if CRI-O is not installed):

sudo nvidia-ctk runtime configure --runtime=docker

sudo nvidia-ctk runtime configure --runtime=containerd

sudo nvidia-ctk runtime configure --runtime=crio

sudo systemctl restart docker

sudo systemctl restart containerd

sudo systemctl restart crio

4. Add your user to the docker group

sudo chmod 666 /var/run/docker.sock

sudo groupadd docker

sudo usermod -aG docker $USER

newgrp docker

5. Set NVIDIA as the default Docker runtime

This is required so that the NVCC compiler and the GPU are available during docker build operations. Edit /etc/docker/daemon.json:

sudo nano /etc/docker/daemon.json

Set the contents to:

{
  "runtimes": {
    "nvidia": {
      "path": "nvidia-container-runtime",
      "runtimeArgs": []
    }
  },
  "default-runtime": "nvidia"
}

Then reconfigure and restart:

sudo nvidia-ctk runtime configure --runtime=docker

sudo nvidia-ctk runtime configure --runtime=containerd

sudo nvidia-ctk runtime configure --runtime=crio

sudo systemctl restart docker

sudo systemctl restart containerd

sudo systemctl restart crio

sudo systemctl enable docker.service

sudo systemctl enable containerd.service
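Since `nvidia-ctk runtime configure` rewrites daemon.json, it is worth confirming that the default runtime setting survived. The following is a minimal sketch; `default_runtime_from_file` and `check_default_runtime` are hypothetical helpers, and the sed-based extraction assumes the key and its value sit on one line, as in the file shown above.

```shell
# Print the value of "default-runtime" from a daemon.json-style file ($1).
default_runtime_from_file() {
    sed -n 's/.*"default-runtime"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1"
}

# Succeed only if the file names nvidia as the default runtime.
check_default_runtime() {
    local cfg="${1:-/etc/docker/daemon.json}"
    if [ "$(default_runtime_from_file "$cfg")" = "nvidia" ]; then
        echo "default runtime is nvidia"
    else
        echo "default runtime is not nvidia in $cfg"
        return 1
    fi
}
```

`check_default_runtime` with no argument inspects /etc/docker/daemon.json. On a running daemon, `docker info --format '{{.DefaultRuntime}}'` should report the same value.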

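III. Core NemoClaw deployment steps

Follow the steps strictly in order: fix the problems described earlier first, then run the official install script.

Step 1 Load the br_netfilter kernel module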

sudo modprobe br_netfilter

sudo sysctl -w net.bridge.bridge-nf-call-iptables=1

Make the settings persist across reboots:

echo br_netfilter | sudo tee /etc/modules-load.d/k8s.conf

echo 'net.bridge.bridge-nf-call-iptables = 1' | sudo tee /etc/sysctl.d/k8s.conf

Step 2 Patch the OpenShell cluster image to use iptables-legacy

The installer pulls the ghcr.io/nvidia/openshell/cluster:0.0.13 image and uses it as-is. We patch that image in place so that the installer loads the fixed version.

IMAGE_NAME="ghcr.io/nvidia/openshell/cluster:0.0.13"

docker run --entrypoint sh --name fix-iptables "$IMAGE_NAME" -c '
update-alternatives --set iptables /usr/sbin/iptables-legacy
update-alternatives --set ip6tables /usr/sbin/ip6tables-legacy
ln -sf /usr/sbin/iptables-legacy /usr/sbin/iptables
ln -sf /usr/sbin/ip6tables-legacy /usr/sbin/ip6tables
iptables --version
'

docker commit \
  --change 'ENTRYPOINT ["/usr/local/bin/cluster-entrypoint.sh"]' \
  fix-iptables "$IMAGE_NAME"

docker rm fix-iptables

You should see iptables v1.8.10 (legacy) in the output.

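Important: if the installer pulls the image again (for example, when you retry the install after a cleanup), this patch is lost. Re-run this step every time before you run the installer.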
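Because the patch is easy to lose, a quick check of the local image before each installer run can save a failed attempt. The sketch below is an assumption-laden illustration: `iptables_backend` and `image_is_patched` are hypothetical helpers, and the check relies on the image's `iptables --version` output having the form shown in this article.

```shell
IMAGE_NAME="ghcr.io/nvidia/openshell/cluster:0.0.13"

# Extract the backend name ("legacy" or "nf_tables") from a line such as
# "iptables v1.8.10 (legacy)".
iptables_backend() {
    printf '%s\n' "$1" | sed -n 's/^iptables v[0-9.]* (\([a-z_]*\)).*/\1/p'
}

# Run `iptables --version` in a throwaway container of the local image.
# --pull=never avoids silently fetching a fresh, unpatched copy.
image_is_patched() {
    local out
    out="$(docker run --rm --pull=never --entrypoint iptables "$IMAGE_NAME" --version 2>/dev/null)"
    [ "$(iptables_backend "$out")" = "legacy" ]
}
```

A typical use would be `image_is_patched || echo "Re-run Step 2 before starting the installer"`. If the image is not present locally, the check fails, which is the safe answer here.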

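Step 3 Configure the Ollama listen address (required for local inference)

Skip this step if you use NVIDIA cloud inference. For a local Ollama deployment, change the listen address so that containers can reach it: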

sudo mkdir -p /etc/systemd/system/ollama.service.d

echo -e '[Service]\nEnvironment="OLLAMA_HOST=0.0.0.0:11434"' \
  | sudo tee /etc/systemd/system/ollama.service.d/override.conf

sudo systemctl daemon-reload

sudo systemctl restart ollama

Verify:

ss -tlnp | grep 11434

# Should show 0.0.0.0:11434, NOT 127.0.0.1:11434

Step 4 Run the official NemoClaw install script

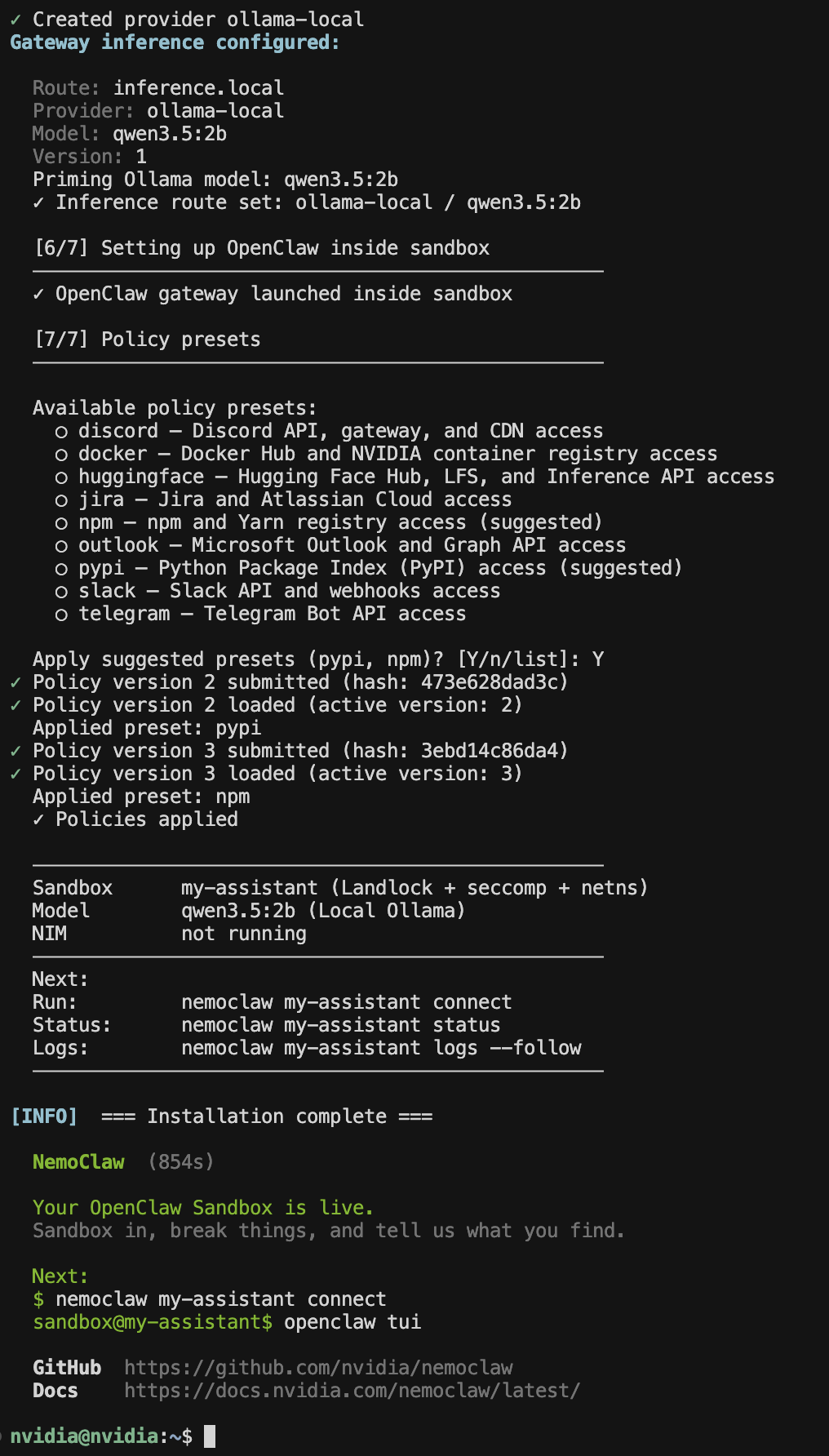

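curl -fsSL https://www.nvidia.com/nemoclaw.sh | bash

Interactive prompts during installation (follow the on-screen guidance):

- Sandbox name: defaults to my-assistant; can be customized

- Inference option: choose 2 (local Ollama inference)

- Ollama model: choose a lightweight model suited to Jetson (e.g. qwen3.5:2b)

Timing note: the first Docker image build takes about 10 minutes; later builds are much faster thanks to caching.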

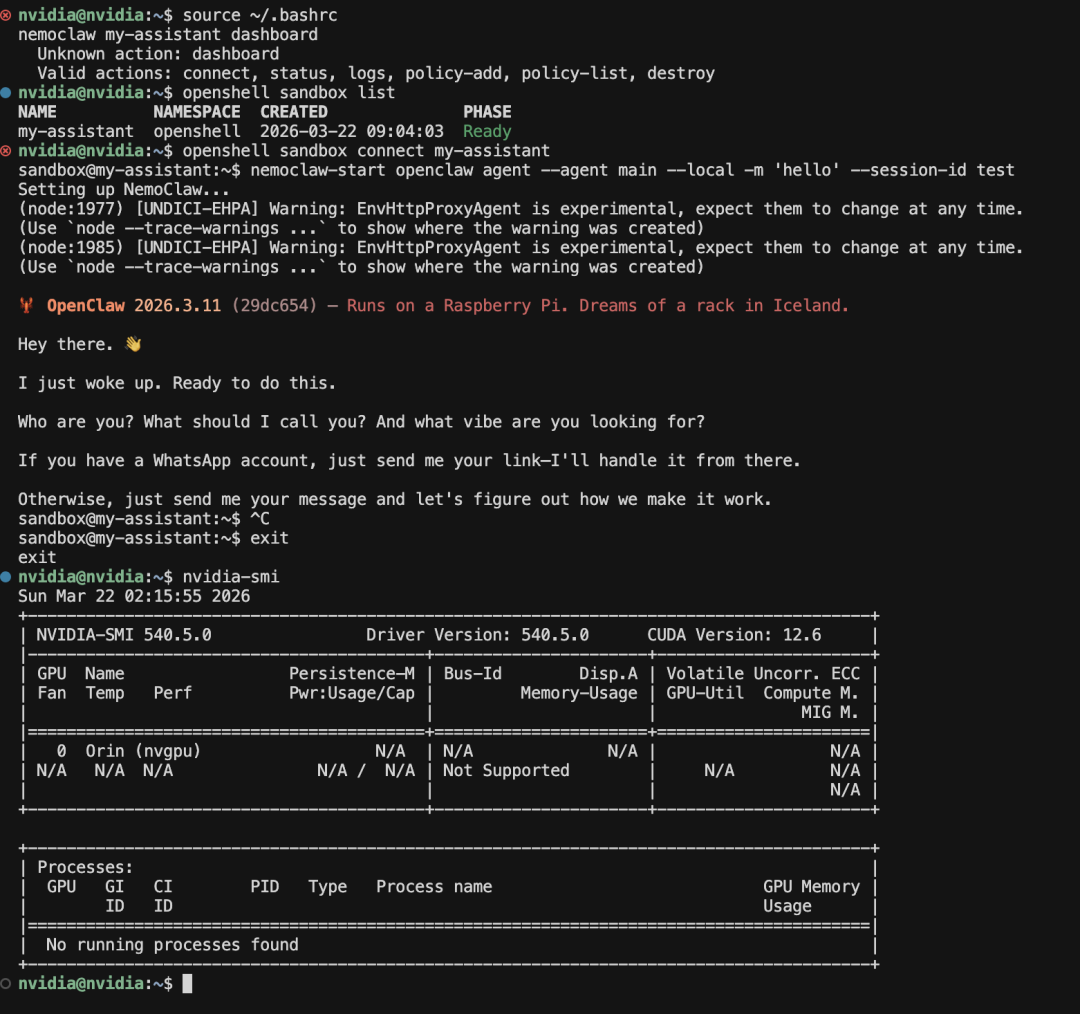

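IV. Verifying the deployment

After installation, verify that the services are running:

- Web dashboard: open http://127.0.0.1:18789/ in a browser; the deployment succeeded if the page loads

- OpenShell gateway: visit https://127.0.0.1:8080; the service should respond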
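The same two checks can be scripted from a terminal, which is handy on a headless board. This is a minimal sketch: `wait_for_url` is a hypothetical helper, and the `-k` flag reflects an assumption that the gateway serves a self-signed certificate. Any HTTP response, including an error status, counts as reachable.

```shell
# Poll a URL until curl gets a response, up to $2 attempts, sleeping $3
# seconds between tries. Defaults: 30 attempts, 2 seconds apart.
wait_for_url() {
    local url="$1"
    local attempts="${2:-30}"
    local delay="${3:-2}"
    local i=1
    while [ "$i" -le "$attempts" ]; do
        # -k skips certificate verification (assumed self-signed gateway cert).
        if curl -ksS -o /dev/null --max-time 5 "$url" 2>/dev/null; then
            echo "reachable: $url"
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    echo "not reachable after $attempts attempts: $url"
    return 1
}
```

For example, `wait_for_url http://127.0.0.1:18789/ && wait_for_url https://127.0.0.1:8080` waits for both endpoints listed above.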

V. Troubleshooting

5.1 Check sandbox Pod health

docker exec openshell-cluster-nemoclaw kubectl get pods -n openshell

5.2 View Pod logs (to find the cause of a crash)

docker exec openshell-cluster-nemoclaw kubectl logs -n openshell my-assistant

5.3 Test cluster DNS resolution

docker exec openshell-cluster-nemoclaw kubectl run dns-test \
  --namespace=openshell \
  --image=rancher/mirrored-library-busybox:1.37.0 \
  --restart=Never \
  -- nslookup openshell.openshell.svc.cluster.local

sleep 10

docker exec openshell-cluster-nemoclaw kubectl logs -n openshell dns-test

docker exec openshell-cluster-nemoclaw kubectl delete pod -n openshell dns-test

5.4 Verify the iptables mode

docker exec openshell-cluster-nemoclaw iptables --version

# Must say "(legacy)", NOT "(nf_tables)"

5.5 Verify the br_netfilter module state

lsmod | grep br_netfilter

cat /proc/sys/net/bridge/bridge-nf-call-iptables

# Must output: 1

5.6 Full cleanup and reinstall

If the deployment fails, clear the environment and start over:

# Stop and remove everything

source ~/.bashrc

nemoclaw destroy 2>/dev/null

docker rm -f openshell-cluster-nemoclaw 2>/dev/null

docker volume prune -f

# Then re-run from Step 2 above

Shared from a WeChat official account. Originally published 2026-03-22.
