AA-CLIP | Zero-Shot Anomaly Detection from Training to Deployment


OpenCV学堂

Published 2026-04-02 20:23:58


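AA-CLIP (a two-stage, anomaly-aware CLIP) is a recent zero-shot anomaly detection model. It encodes both text and image inputs and compares their features to perform anomaly classification and segmentation on industrial and medical images. The official repository is: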

https://github.com/Mwxinnn/AA-CLIP

AA-CLIP Training
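The training log in this section lists `'criterion': ['dice_loss', 'focal_loss']`, i.e. the segmentation output is supervised with a Dice term and a focal term. The sketch below shows what those two criteria compute on a predicted anomaly-probability map and its ground-truth mask. It is a minimal NumPy illustration, not the repository's code: the unweighted sum, the smoothing constant and the focal defaults `gamma=2.0`, `alpha=0.25` are assumptions, and the helper names are hypothetical.

```python
import numpy as np

def dice_loss(prob: np.ndarray, target: np.ndarray, eps: float = 1.0) -> float:
    """Soft Dice loss: 1 - overlap ratio between predicted probabilities and the binary mask."""
    inter = np.sum(prob * target)
    denom = np.sum(prob) + np.sum(target)
    return float(1.0 - (2.0 * inter + eps) / (denom + eps))

def focal_loss(prob: np.ndarray, target: np.ndarray,
               gamma: float = 2.0, alpha: float = 0.25, eps: float = 1e-6) -> float:
    """Binary focal loss averaged over pixels; down-weights easy, well-classified pixels."""
    prob = np.clip(prob, eps, 1.0 - eps)
    p_t = np.where(target == 1, prob, 1.0 - prob)          # probability of the true class
    alpha_t = np.where(target == 1, alpha, 1.0 - alpha)    # class-balancing weight (assumed default)
    loss = -alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)
    return float(loss.mean())

def seg_loss(prob: np.ndarray, target: np.ndarray) -> float:
    # Assumption: the two criteria are simply added, mirroring the list in the log
    return dice_loss(prob, target) + focal_loss(prob, target)
```

Dice measures region overlap and is insensitive to how few anomalous pixels there are, while the focal term keeps per-pixel gradients on hard pixels; combining them is a common choice for small defect regions.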

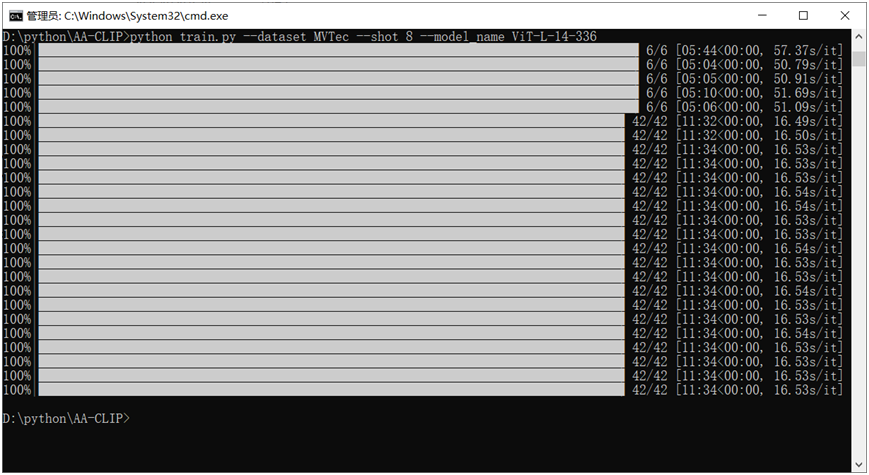

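Honestly, the code in the official GitHub repository is well written, with hardly any pitfalls, and training runs out of the box. I trained directly on my own dataset in few-shot mode with eight training samples. The command line is as follows: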

The training log is as follows:

```text
INFO:__main__:args: {'model_name': 'ViT-L-14-336', 'img_size': 518, 'surgery_until_layer': 20, 'relu': False, 'dataset': 'MVTec', 'training_mode': 'few_shot', 'shot': 8, 'text_batch_size': 16, 'image_batch_size': 2, 'text_epoch': 5, 'image_epoch': 20, 'text_lr': 1e-05, 'image_lr': 0.0005, 'criterion': ['dice_loss', 'focal_loss'], 'seed': 111, 'save_path': 'ckpt/baseline', 'text_norm_weight': 0.1, 'text_adapt_weight': 0.1, 'image_adapt_weight': 0.1, 'text_adapt_until': 3, 'image_adapt_until': 6}
INFO:root:Loading pretrained ViT-L-14-336 from OpenAI.
INFO:root:Resizing position embedding grid-size from (24, 24) to (37, 37)
INFO:root:Loading pretrained ViT-L-14-336 from OpenAI.
INFO:root:Resizing position embedding grid-size from (24, 24) to (37, 37)
INFO:__main__:loading dataset ...
INFO:__main__:loading image adaptation dataset ...
INFO:__main__:training text epoch 0:
INFO:__main__:loss: 1.4636036157608032
INFO:__main__:training text epoch 1:
INFO:__main__:loss: 1.219490071137746
INFO:__main__:training text epoch 2:
INFO:__main__:loss: 1.2085641225179036
INFO:__main__:training text epoch 3:
INFO:__main__:loss: 1.2048145135243733
INFO:__main__:training text epoch 4:
INFO:__main__:loss: 1.1965291897455852
INFO:__main__:training image epoch 0:
INFO:__main__:loss: 3.6282341423488798
INFO:__main__:training image epoch 1:
INFO:__main__:loss: 2.6655399345216297
INFO:__main__:training image epoch 2:
INFO:__main__:loss: 2.4324947538829984
INFO:__main__:training image epoch 3:
INFO:__main__:loss: 2.1065715295927867
INFO:__main__:training image epoch 4:
INFO:__main__:loss: 1.9446473377091544
INFO:__main__:training image epoch 5:
INFO:__main__:loss: 1.8010127643744152
INFO:__main__:training image epoch 6:
INFO:__main__:loss: 1.7255745700427465
INFO:__main__:training image epoch 7:
INFO:__main__:loss: 1.737629310006187
INFO:__main__:training image epoch 8:
INFO:__main__:loss: 1.6446210316249303
INFO:__main__:training image epoch 9:
INFO:__main__:loss: 1.5891813351994468
INFO:__main__:training image epoch 10:
INFO:__main__:loss: 1.556674992754346
INFO:__main__:training image epoch 11:
INFO:__main__:loss: 1.432623612029212
INFO:__main__:training image epoch 12:
INFO:__main__:loss: 1.5059531927108765
INFO:__main__:training image epoch 13:
INFO:__main__:loss: 1.4267199337482452
INFO:__main__:training image epoch 14:
INFO:__main__:loss: 1.3787336079847246
INFO:__main__:training image epoch 15:
INFO:__main__:loss: 1.2949471984590804
INFO:__main__:training image epoch 16:
INFO:__main__:loss: 1.3198628212724413
INFO:__main__:training image epoch 17:
INFO:__main__:loss: 1.2334311718032474
INFO:__main__:training image epoch 18:
INFO:__main__:loss: 1.2762719293435414
INFO:__main__:training image epoch 19:
INFO:__main__:loss: 1.1888043440523601
```

Inference Test
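Running inference on a single image first requires preparing it the way the model expects. A minimal sketch, assuming the image is resized to the training resolution `img_size=518` shown in the log and normalized with the standard OpenAI CLIP mean/std (the log shows OpenAI `ViT-L-14-336` weights being loaded); whether AA-CLIP's own transforms match this exactly is an assumption, and `preprocess` is a hypothetical helper. The resize is a pure-NumPy nearest-neighbour version only to keep the sketch dependency-free.

```python
import numpy as np

# OpenAI CLIP normalization constants, RGB order
CLIP_MEAN = np.array([0.48145466, 0.4578275, 0.40821073], dtype=np.float32)
CLIP_STD = np.array([0.26862954, 0.26130258, 0.27577711], dtype=np.float32)

def preprocess(bgr: np.ndarray, size: int = 518) -> np.ndarray:
    """HxWx3 uint8 BGR image (as returned by cv.imread) -> 1x3xSxS float32 array."""
    h, w = bgr.shape[:2]
    # Nearest-neighbour resize; in practice cv.resize with a smoother interpolation is usual
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    resized = bgr[rows[:, None], cols[None, :]]
    rgb = resized[..., ::-1].astype(np.float32) / 255.0   # BGR -> RGB, scale to [0, 1]
    norm = (rgb - CLIP_MEAN) / CLIP_STD                   # per-channel normalization
    return norm.transpose(2, 0, 1)[None]                  # HWC -> NCHW with batch dim 1
```

The channel flip matters because OpenCV loads images as BGR while CLIP statistics are defined in RGB order.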

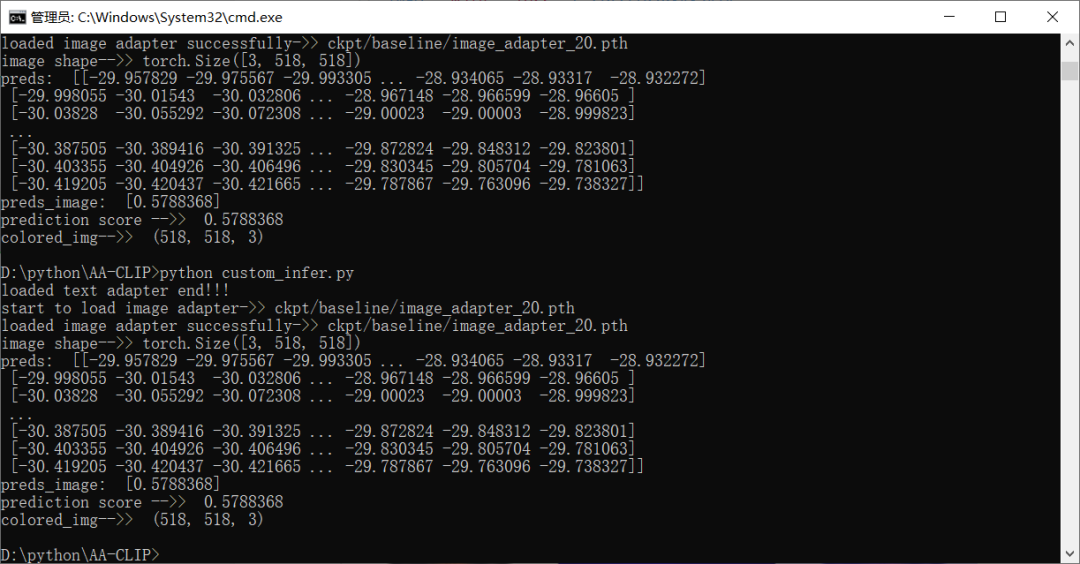

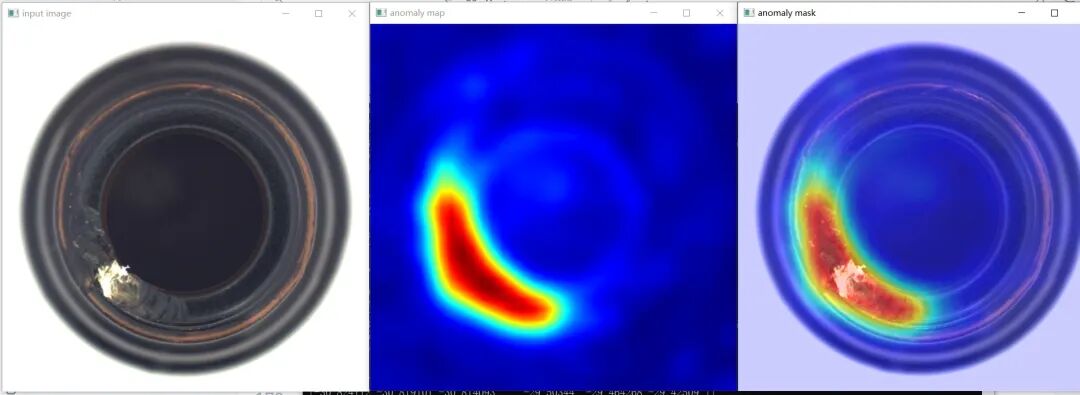

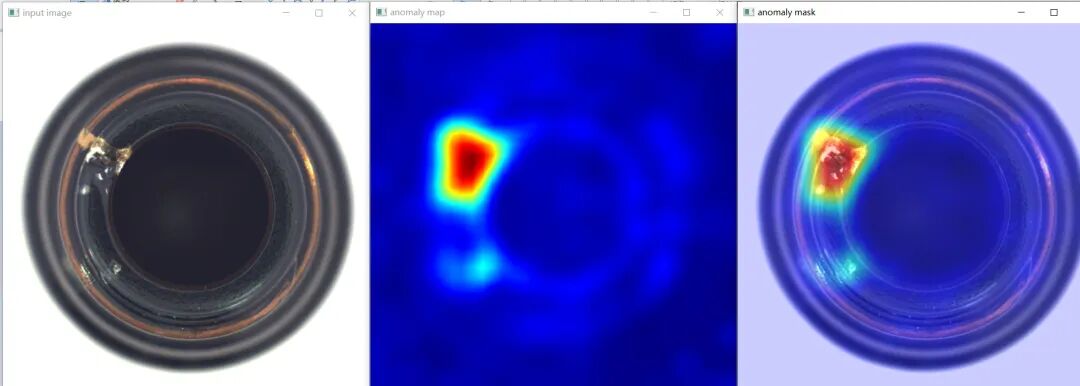

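After training, the official test.py only supports evaluation on a whole dataset and computing evaluation metrics. To run inference on an arbitrary single image, I had to modify the code myself, which took a full day to get working.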
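The introduction describes AA-CLIP as comparing text and image features. As a rough illustration of that idea, here is a generic CLIP-style zero-shot scoring sketch, not AA-CLIP's actual code (which adds its anomaly-aware text and image adaptation stages): each patch embedding is compared with a "normal" and an "anomalous" text embedding, the softmax probability of the anomalous prompt forms the anomaly map, and its maximum serves as an image-level score. Arrays like `pixel_preds` and `preds_image` in the display code below presumably come from a step of this kind. The function names, the temperature of 0.07 and max-pooling for the image score are assumptions; a 37x37 patch grid would match the position-embedding size in the log (518 / 14 = 37 for ViT-L/14).

```python
import numpy as np

def l2_normalize(x: np.ndarray, axis: int = -1) -> np.ndarray:
    return x / np.linalg.norm(x, axis=axis, keepdims=True)

def anomaly_scores(patch_feats: np.ndarray, text_feats: np.ndarray,
                   grid: int, out_size: int, temperature: float = 0.07):
    """
    patch_feats: (grid*grid, D) patch embeddings of one image
    text_feats:  (2, D) embeddings: row 0 = "normal" prompt, row 1 = "anomalous" prompt
    Returns (pixel-level map of shape (out_size, out_size), image-level score).
    """
    p = l2_normalize(patch_feats)
    t = l2_normalize(text_feats)
    logits = p @ t.T / temperature                    # (N, 2) scaled cosine similarities
    logits -= logits.max(axis=1, keepdims=True)       # numerically stable softmax
    e = np.exp(logits)
    probs = e / e.sum(axis=1, keepdims=True)
    patch_map = probs[:, 1].reshape(grid, grid)       # P("anomalous") for each patch
    # Nearest-neighbour upsampling of the patch grid to the output resolution
    idx = np.arange(out_size) * grid // out_size
    pixel_map = patch_map[idx[:, None], idx[None, :]]
    return pixel_map, float(patch_map.max())          # image score: max over patches
```

Real implementations usually upsample bilinearly and smooth the map; nearest-neighbour keeps this sketch short.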

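The modified code can now infer the anomaly map and mask for a single image; the results are shown in the screenshots in this section.

The display code is as follows: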

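```python
import cv2 as cv
import numpy as np

# pixel_preds (HxW float anomaly map, expected in [0, 1]), preds_image (image-level
# scores) and image_file come from the author's modified single-image inference code.
gray_mask = (np.clip(pixel_preds, 0.0, 1.0) * 255).astype(np.uint8)  # clip avoids uint8 wrap-around
print("prediction score -->> ", preds_image[0])

# Map the gray-scale anomaly map to a JET heat map (HxWx3, BGR)
colored_img = cv.applyColorMap(gray_mask, cv.COLORMAP_JET)
ch, cw, cc = colored_img.shape
print("colored_img-->> ", colored_img.shape)

src = cv.imread(image_file, cv.IMREAD_COLOR)
resized = cv.resize(src, (cw, ch))  # dsize is (width, height)

# Blend the input image with the heat map (weights as in the original)
anomaly_mask = cv.addWeighted(resized, 0.8, colored_img, 0.6, 0)

cv.imshow("input image", resized)
cv.imshow("anomaly map", colored_img)
cv.imshow("anomaly mask", anomaly_mask)
cv.waitKey(0)
cv.destroyAllWindows()
```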
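The display code above renders a heat map and a blended overlay; a binary defect mask takes one more step. A minimal sketch, assuming `pixel_preds` is a probability map in [0, 1]: threshold it and report the fraction of anomalous pixels. `to_binary_mask` and the 0.5 threshold are hypothetical; in practice the threshold should be tuned on validation images.

```python
import numpy as np

def to_binary_mask(pixel_preds: np.ndarray, threshold: float = 0.5):
    """Return a 0/255 uint8 mask and the fraction of pixels flagged as anomalous."""
    probs = np.clip(pixel_preds.astype(np.float32), 0.0, 1.0)
    mask = np.where(probs >= threshold, 255, 0).astype(np.uint8)
    return mask, float((mask > 0).mean())
```

The resulting mask can be shown with `cv.imshow` or passed to `cv.findContours` to outline individual defect regions. A fixed threshold is used rather than per-image min-max normalization, because normalizing every map would make even a defect-free image produce a non-empty mask.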

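This article is part of the Tencent Cloud self-media sync program and was shared from a WeChat public account.

Originally published: 2025-08-20. In case of infringement, contact cloudcommunity@tencent.com for removal.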
