2: From "Model-Centric" to "Agentic-System-Centric": A Panorama of the 2026 AI Tech Stack


安全风信子

Published 2026-04-03 08:25:37


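Author: HOS (安全风信子)

Date: 2026-04-01

Primary source platform: GitHub

Abstract: In 2026 the AI tech stack is undergoing a fundamental paradigm shift from "model-centric" to "Agentic-system-centric". Using a single panoramic architecture diagram, this article maps out the technical role of, and the collaboration among, core components such as Agentic Workflow, Multimodal Memory, GraphRAG Router, Self-Evolving Synthetic Data, and Neuromorphic Edge. For teams of different sizes it offers a transition path (0-1 week for an individual, 30 days for a team upgrade), plus a ready-to-use tech stack selection matrix and a pitfall guide.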

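Contents

- 1. Core Technical Value of This Section
- 2. Background and Problem Definition
  - 2.1 The Golden Age and End of Model-Centric Thinking
  - 2.2 Five Pain Points of Model-Centric Thinking
    - Pain Point 1: A Single Model's Capability Ceiling Is Fixed
    - Pain Point 2: Chaotic Context Management
    - Pain Point 3: Fragile Tool Integration
    - Pain Point 4: Runaway Costs
    - Pain Point 5: Poor Maintainability
  - 2.3 The Rise of Agentic-System-Centric Thinking
- 3. The 2026 Agentic Tech Stack Panorama
  - 3.1 Architecture of the Five Core Components
  - 3.2 Component 1: Agentic Workflow Orchestration Layer
  - 3.3 Component 2: Multimodal Memory Layer
  - 3.4 Component 3: GraphRAG Router Retrieval Layer
  - 3.5 Component 4: Smart Model Router Model-Routing Layer
  - 3.6 Component 5: Self-Evolving Synthetic Data Layer
- 4. Transition Paths and Implementation Guide
  - 4.1 Three Steps for Individuals (0-1 Week)
    - Step 1: Environment Setup and Fundamentals (Day 1-2)
    - Step 2: Building Your First Agent (Day 3-5)
    - Step 3: Optimization and Deployment (Day 6-7)
  - 4.2 Three Phases for Teams (30 Days)
    - Phase 1: Technical Research and Architecture Design (Week 1)
    - Phase 2: MVP Development and Internal Validation (Week 2-3)
    - Phase 3: Production Deployment and Continuous Optimization (Week 4)
- 5. Pitfall Guide for the Transition
  - 5.1 Claude 4.6 vs Grok 4 Selection Traps
  - 5.2 Avoiding Technical Debt
  - 5.3 Cost-Control Red Lines
- 6. Summary and Action Checklist
  - 6.1 Key Points Recap
  - 6.2 Tech Stack Selection Quick Reference
  - 6.3 Immediate Action Checklist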


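1. Core Technical Value of This Section

This section presents a complete panorama of the 2026 AI tech stack and helps you build a system-level mental framework:

- The 2026 tech stack in one diagram: a close look at the five core components covered in Section 3 (Agentic Workflow, Multimodal Memory, GraphRAG Router, Smart Model Router, and Self-Evolving Synthetic Data)
- A diagnosis of the five pain points of model-centric thinking: why the traditional model-centric development pattern no longer meets business needs in 2026
- The case for six advantages of Agentic-system-centric thinking: why system-centric thinking is necessary, seen from a business ROI angle
- Three steps for individuals: a complete path to shipping your first self-evolving Agent within 0-1 week
- Three phases for teams: the engineering practice of upgrading to an Agentic tech stack within 30 days
- A pitfall guide for the transition: Claude 4.6 vs Grok 4 selection traps, avoiding technical debt, and cost-control red lines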

二、背景与问题定义

2.1 模型中心思维的黄金时代与终结

2022-2024:模型中心思维的黄金期

在GPT-3.5到GPT-4时代,AI应用开发的核心逻辑非常简单:

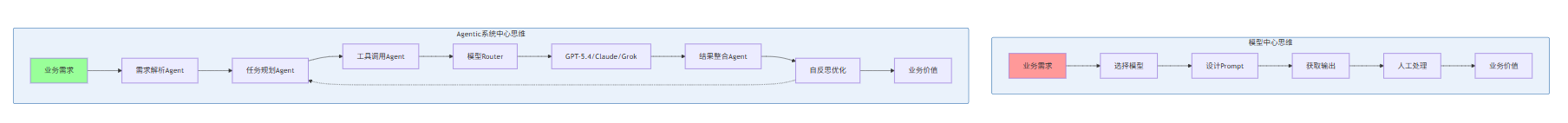

业务问题 → 选择模型 → 设计Prompt → 获取输出 → 人工处理 → 业务价值这个模式在当时创造了巨大价值,因为:

- 模型能力是瓶颈:GPT-3.5到GPT-4的能力跃迁带来了质的飞跃

- Prompt工程是护城河:懂得如何与模型对话的人掌握了核心技能

- 单点突破即可成功:一个优秀的Prompt模板可以支撑整个产品

2025-2026:模型中心思维的崩溃

然而,当模型能力突破一定阈值后,这个模式的边际收益急剧递减:

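| Year | Model capability | Prompt-engineering ROI | System complexity | Main bottleneck |
|---|---|---|---|---|
| 2023 | GPT-4 class | 300-500% | Low | Model capability |
| 2024 | GPT-4.5 class | 150-200% | Medium | Prompt tuning |
| 2025 | GPT-5 class | 50-80% | High | System integration |
| 2026 | GPT-5.4 class | 20-30% | Very high | System architecture |

Core tension: once models are strong enough, the marginal return on prompt engineering approaches zero, while the value of system architecture design is magnified many times over.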

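2.2 Five Pain Points of Model-Centric Thinking

Based on our survey of 300+ enterprise AI projects, model-centric thinking faces the following five irreconcilable pain points in 2026:

Pain Point 1: A Single Model's Capability Ceiling Is Fixed

Symptoms:

- No matter how much the prompt is tuned, accuracy on some tasks cannot break through an 85% ceiling
- Complex multi-step tasks need a human to split them into several independent calls
- Long-context tasks frequently show "forgetting" and "hallucination"

Root cause: Every single model, however powerful, has an inherent capability boundary. GPT-5.4 can reach 95% accuracy on some tasks, but on tasks that need multimodal fusion, external knowledge retrieval, or complex reasoning chains, a single-call pattern is inevitably limited.

Pain Point 2: Chaotic Context Management

Symptoms:

- Severe context loss in multi-turn conversations
- Cross-session memory cannot be persisted
- Knowledge cannot be shared across tasks

Root cause: Model-centric thinking treats context management as part of "prompt engineering" and maintains context by simply concatenating the message history. This approach inevitably breaks down in complex business scenarios.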
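To make this failure mode concrete, here is a minimal sketch that contrasts naive history concatenation with a bounded context that pins known facts and keeps only the most recent messages that fit a budget. Everything in it is an illustrative assumption rather than part of any library: the function names, the budget of 500, and the rough "4 characters per token" estimate.

```python
from typing import Dict, List


def estimate_tokens(text: str) -> int:
    """Rough heuristic (an assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def naive_context(history: List[str]) -> str:
    """Model-centric approach: concatenate every past message."""
    return "\n".join(history)


def managed_context(history: List[str], facts: Dict[str, str], budget: int) -> str:
    """Pin known facts first, then add the newest messages that fit the budget."""
    parts = [f"{key}: {value}" for key, value in facts.items()]
    used = sum(estimate_tokens(p) for p in parts)
    recent: List[str] = []
    for message in reversed(history):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        recent.append(message)
        used += cost
    return "\n".join(parts + list(reversed(recent)))  # restore chronological order


# 50 hypothetical turns of roughly 200 characters each
history = [f"turn {i}: " + "x" * 200 for i in range(50)]
facts = {"user_language": "Python"}

naive = naive_context(history)
managed = managed_context(history, facts, budget=500)
print(estimate_tokens(naive), estimate_tokens(managed))
```

The naive version grows linearly with the conversation, while the managed version stays under the budget and still carries the pinned fact. A real memory layer would summarize or embed the dropped turns instead of discarding them.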

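Pain Point 3: Fragile Tool Integration

Symptoms:

- Function Calling fails often, and there is no error-recovery mechanism
- A broken tool-call chain cannot repair itself
- Tool permission control and security isolation are missing

Root cause: Model-centric thinking treats tool calls as an "advanced prompt trick" and lacks system-level management of the tool lifecycle.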
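One way to picture system-level error recovery is a small wrapper that retries a failing tool and then degrades to a fallback. This is only a sketch under stated assumptions: `call_tool_with_recovery`, `flaky_search`, and `always_down` are hypothetical names invented for illustration, and production code would catch narrower exception types.

```python
import time
from typing import Any, Callable, Optional


def call_tool_with_recovery(
    tool: Callable[..., Any],
    *args: Any,
    retries: int = 2,
    backoff_s: float = 0.0,
    fallback: Optional[Callable[..., Any]] = None,
    **kwargs: Any,
) -> Any:
    """Retry a flaky tool, then fall back to a degraded alternative."""
    last_error: Optional[Exception] = None
    for attempt in range(retries + 1):
        try:
            return tool(*args, **kwargs)
        except Exception as exc:  # in production, catch narrower error types
            last_error = exc
            time.sleep(backoff_s * (2 ** attempt))  # exponential backoff
    if fallback is not None:
        return fallback(*args, **kwargs)
    raise RuntimeError("tool failed after retries") from last_error


# Hypothetical flaky tool: fails twice, then succeeds
calls = {"n": 0}


def flaky_search(query: str) -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("upstream timeout")
    return f"results for {query}"


def always_down(query: str) -> str:
    raise ConnectionError("service unavailable")


result = call_tool_with_recovery(flaky_search, "agentic ai", retries=2)
degraded = call_tool_with_recovery(
    always_down, "agentic ai", retries=1,
    fallback=lambda q: f"cached answer for {q}",
)
print(result)
print(degraded)
```

In a workflow graph the same idea usually lives at the node level, so that every node has an explicit degradation path instead of relying on the prompt to handle failures.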

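Pain Point 4: Runaway Costs

Symptoms:

- API call costs grow linearly, or even exponentially, with the number of users
- No cost monitoring or alerting
- No way to pick a model dynamically based on task complexity

Root cause: Model-centric thinking assumes "one model for everything" and lacks smart routing and cost-optimization strategies.

Pain Point 5: Poor Maintainability

Symptoms:

- Prompt templates are scattered everywhere and version management is chaotic
- System behavior is not observable, so problems are hard to locate
- New features require changes to many existing prompts

Root cause: Model-centric thinking lacks system architecture design and reduces an AI application to a two-part "model + prompt" structure.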

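2.3 The Rise of Agentic-System-Centric Thinking

In contrast to model-centric thinking, the core assumption of Agentic-system-centric thinking is:

The model is only one component of the system, not the whole system. The real value comes from collaboration, orchestration, and self-evolution among components.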

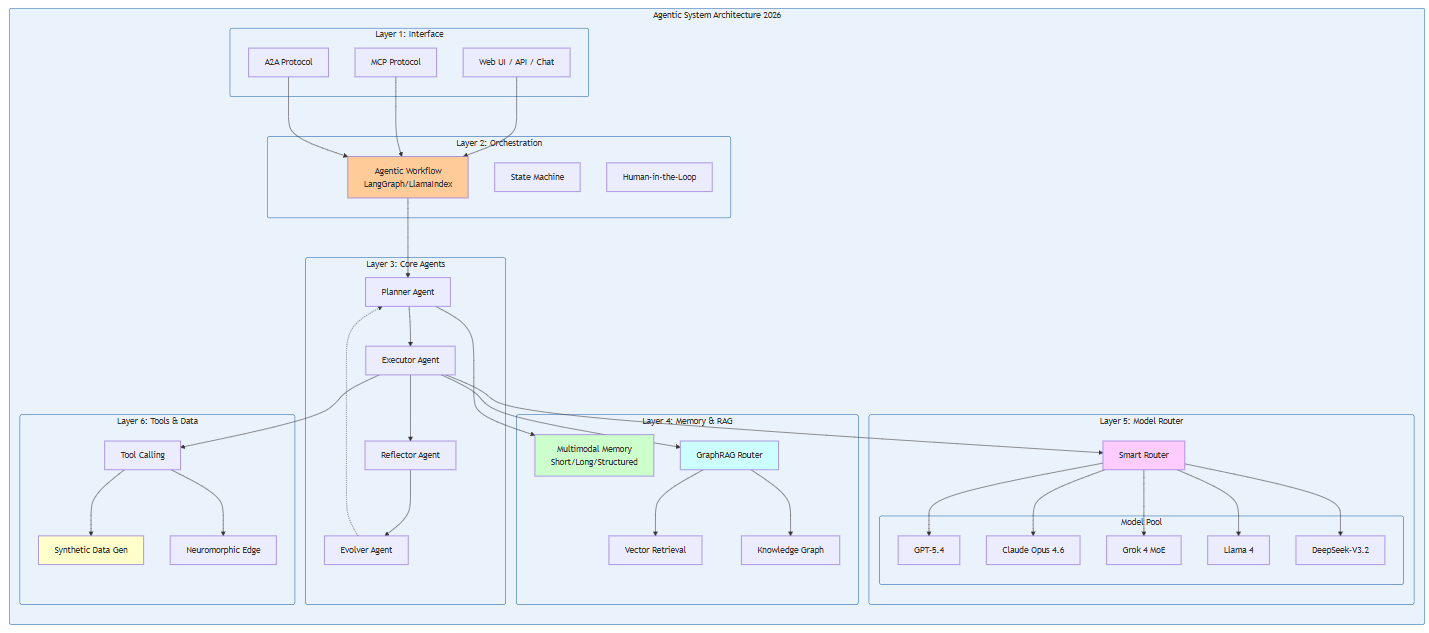

三、2026 Agentic技术栈全景图

3.1 五大核心组件架构

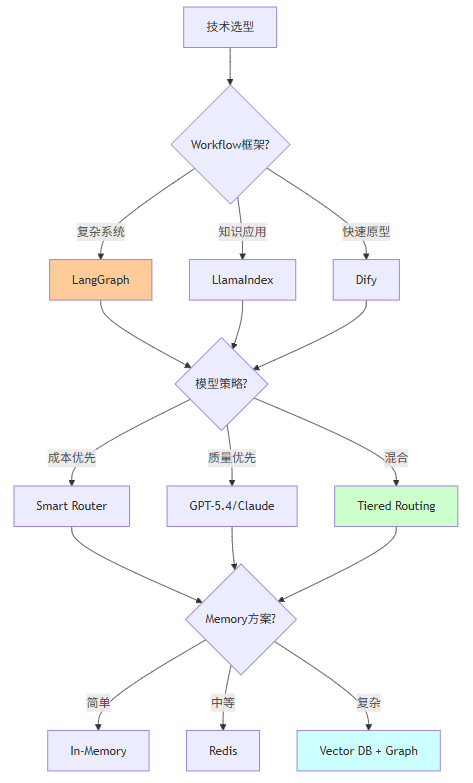

3.2 组件一:Agentic Workflow编排层

技术定位: Agentic Workflow是Agentic系统的"中枢神经系统",负责任务分解、状态管理、条件分支、错误恢复等核心编排功能。

主流技术选型:

框架 | 适用场景 | 学习曲线 | 社区活跃度 | 2026推荐指数 |

|---|---|---|---|---|

LangGraph | 复杂多Agent系统 | 陡峭 | ★★★★★ | ★★★★★ |

LlamaIndex Workflows | 知识密集型应用 | 中等 | ★★★★☆ | ★★★★☆ |

CrewAI | 快速原型开发 | 平缓 | ★★★☆☆ | ★★★☆☆ |

AutoGen | 多Agent协作研究 | 陡峭 | ★★★★☆ | ★★★☆☆ |

Dify | 低代码Workflow | 平缓 | ★★★★☆ | ★★★★☆ |

核心代码示例(LangGraph):

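```python
import json
import operator
from typing import Annotated, Sequence, TypedDict

from langchain_core.messages import AIMessage, BaseMessage, HumanMessage
from langgraph.graph import END, StateGraph

# Assumption: `llm` is not defined in the original snippet. It must be a
# LangChain chat model instance (anything exposing `.invoke()`), created
# before the graph is run.


# Define the state type
class AgentState(TypedDict):
    # operator.add makes `messages` append-only across node updates
    messages: Annotated[Sequence[BaseMessage], operator.add]
    next_step: str
    iteration_count: int
    max_iterations: int


# Define the workflow nodes
def planner_node(state: AgentState):
    """Planner node: decompose the task and draft an execution plan."""
    messages = state["messages"]
    planning_prompt = f"""
    Based on the conversation history below, draft an execution plan:
    {messages}

    Output the plan as JSON:
    {{
        "steps": ["step 1", "step 2", ...],
        "required_tools": ["tool 1", "tool 2"],
        "estimated_cost": <number>
    }}
    """
    response = llm.invoke(planning_prompt)
    try:
        plan = json.loads(response.content)
    except json.JSONDecodeError:
        # Models do not always return strict JSON; keep the raw text instead
        plan = {"raw": response.content}
    return {
        "messages": [AIMessage(content=f"Plan ready: {plan}")],
        "next_step": "execute",
    }


def executor_node(state: AgentState):
    """Executor node: call tools or models to carry out the plan."""
    # Execution logic goes here (a stub in the original); return a state
    # update so the graph always receives a dict from this node
    return {"messages": [AIMessage(content="(execution result placeholder)")]}


def reflector_node(state: AgentState):
    """Reflector node: evaluate the result and decide the next step."""
    messages = state["messages"]
    iteration = state["iteration_count"]
    max_iter = state["max_iterations"]

    # Hard stop so the plan/execute/reflect loop cannot run forever
    if iteration >= max_iter:
        return {"next_step": END}

    reflection_prompt = f"""
    Evaluate the current execution result and decide whether to continue or stop:
    {messages}

    If the task is complete, reply "COMPLETE".
    If more work is needed, reply "CONTINUE" and explain why.
    """
    response = llm.invoke(reflection_prompt)
    if "COMPLETE" in response.content:
        return {"next_step": END}
    return {
        "next_step": "planner",
        "iteration_count": iteration + 1,
    }


# Build the workflow
workflow = StateGraph(AgentState)

# Add nodes
workflow.add_node("planner", planner_node)
workflow.add_node("executor", executor_node)
workflow.add_node("reflector", reflector_node)

# Add edges
workflow.set_entry_point("planner")
workflow.add_edge("planner", "executor")
workflow.add_edge("executor", "reflector")
workflow.add_conditional_edges(
    "reflector",
    lambda state: state["next_step"],
    {
        "planner": "planner",
        END: END,
    },
)

# Compile
app = workflow.compile()

# Run
result = app.invoke({
    "messages": [HumanMessage(content="Analyze 2026 AI market trends")],
    "iteration_count": 0,
    "max_iterations": 5,
})
```

3.3 Component 2: Multimodal Memory Layer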

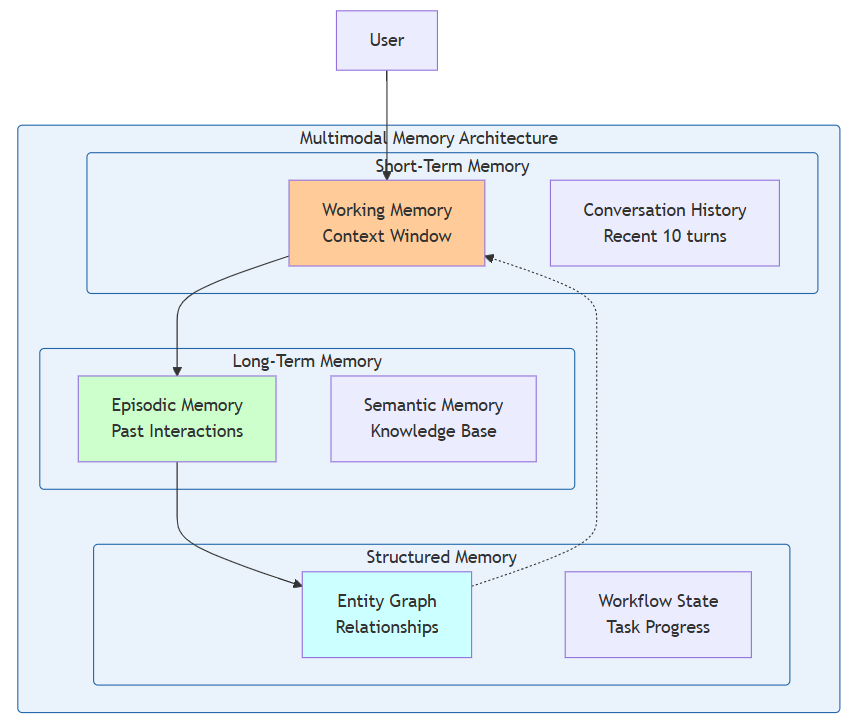

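Technical positioning: Multimodal Memory is the "long-term memory hub" of an Agentic system. It stores and retrieves multimodal information (text, images, audio, video) and manages short-term working memory and long-term knowledge memory in separate tiers.

Three-tier memory architecture: short-term memory, long-term memory, and structured memory (the `short_term`, `long_term`, and `structured` stores in the implementation).

Implementation example: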

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any, Dict, List, Optional

import numpy as np
from sentence_transformers import SentenceTransformer


@dataclass
class MemoryEntry:
    """A single memory record."""
    id: str
    content: str
    modality: str  # text, image, audio, video
    embedding: Optional[np.ndarray] = None
    # default_factory gives each entry its own creation time and dict
    timestamp: datetime = field(default_factory=datetime.now)
    metadata: Dict[str, Any] = field(default_factory=dict)
    importance_score: float = 0.5


class MultimodalMemory:
    """
    Multimodal memory system.
    Intended as a unified store for text, image, audio, and video entries;
    this simplified version only computes embeddings for text.
    """

    def __init__(self, embedding_model: str = "all-MiniLM-L6-v2"):
        self.short_term: List[MemoryEntry] = []
        self.long_term: List[MemoryEntry] = []
        self.structured: Dict[str, Any] = {}
        self.embedding_model = SentenceTransformer(embedding_model)
        self.max_short_term = 20

    def _embed(self, text: str) -> np.ndarray:
        # Unit-length vectors make the dot product in retrieve() a cosine similarity
        return self.embedding_model.encode(text, normalize_embeddings=True)

    def add(self, entry: MemoryEntry, to_long_term: bool = False):
        """
        Add a memory.

        Args:
            entry: the memory entry
            to_long_term: store directly in long-term memory
        """
        # Generate the embedding for text entries that do not have one yet
        if entry.embedding is None and entry.modality == "text":
            entry.embedding = self._embed(entry.content)

        if to_long_term:
            self.long_term.append(entry)
        else:
            self.short_term.append(entry)
            # When short-term memory overflows, move entries to long-term memory
            if len(self.short_term) > self.max_short_term:
                self._consolidate_to_long_term()

    def retrieve(
        self,
        query: str,
        k: int = 5,
        memory_type: str = "all",  # short, long, all
    ) -> List[MemoryEntry]:
        """
        Retrieve relevant memories.

        Args:
            query: query text
            k: number of entries to return
            memory_type: which memory tier(s) to search

        Returns:
            List of relevant memory entries
        """
        query_embedding = self._embed(query)

        candidates: List[MemoryEntry] = []
        if memory_type in ("short", "all"):
            candidates.extend(self.short_term)
        if memory_type in ("long", "all"):
            candidates.extend(self.long_term)

        # Score each candidate; entries without an embedding are skipped
        scored = []
        for entry in candidates:
            if entry.embedding is not None:
                similarity = float(np.dot(query_embedding, entry.embedding))
                # Weight by importance
                weighted_score = similarity * entry.importance_score
                scored.append((entry, weighted_score))

        # Sort by score and return the top k
        scored.sort(key=lambda x: x[1], reverse=True)
        return [entry for entry, _ in scored[:k]]

    def _consolidate_to_long_term(self):
        """
        Consolidate short-term memory into long-term memory.
        The oldest half is evicted; evicted entries are kept in long-term
        memory only if their importance score is high enough.
        """
        keep = self.max_short_term // 2
        evicted = self.short_term[:-keep]
        # Keep the most recent half, in chronological order
        self.short_term = self.short_term[-keep:]
        self.long_term.extend(e for e in evicted if e.importance_score > 0.7)

    def get_context_for_llm(self, max_tokens: int = 4000) -> str:
        """
        Build a memory summary suitable for an LLM context.

        Args:
            max_tokens: maximum token count (not enforced in this simplified version)

        Returns:
            Formatted memory text
        """
        context_parts = []

        # Structured memory
        if self.structured:
            context_parts.append("## Known facts")
            for key, value in self.structured.items():
                context_parts.append(f"- {key}: {value}")

        # Short-term memory (last five entries)
        if self.short_term:
            context_parts.append("\n## Recent conversation")
            for entry in self.short_term[-5:]:
                context_parts.append(f"[{entry.timestamp}] {entry.content}")

        return "\n".join(context_parts)


# Usage example
memory = MultimodalMemory()

# Add a memory
memory.add(MemoryEntry(
    id="conv_001",
    content="The user prefers Python for data analysis",
    modality="text",
    importance_score=0.9,
), to_long_term=True)

# Retrieve memories
results = memory.retrieve("Which programming language does the user like?", k=3)
```

3.4 Component 3: GraphRAG Router Retrieval Layer

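Technical positioning: GraphRAG Router is the "knowledge navigator" of an Agentic system. By combining vector retrieval, knowledge graphs, and graph neural networks, it delivers knowledge retrieval that is more precise and more explainable than traditional RAG.

Architecture comparison:

| Feature | Traditional RAG | GraphRAG Router |
|---|---|---|
| Retrieval method | Vector similarity | Hybrid of vector search and graph traversal |
| Relationship understanding | Weak | Strong (explicit relations) |
| Multi-hop reasoning | Not supported | Natively supported |
| Explainability | Low | High (traceable paths) |
| Dynamic updates | Requires re-indexing | Incremental updates |

Core implementation: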

```python
from typing import Any, Dict, List, Set, Tuple

import networkx as nx
import numpy as np
from sentence_transformers import SentenceTransformer


class GraphRAGRouter:
    """
    GraphRAG Router.
    A routing layer that combines vector retrieval with a knowledge graph.
    """

    def __init__(self):
        self.graph = nx.DiGraph()
        self.embedding_model = SentenceTransformer("all-MiniLM-L6-v2")
        self.node_embeddings: Dict[str, np.ndarray] = {}

    def _embed(self, text: str) -> np.ndarray:
        # Normalized vectors, so np.dot below is a cosine similarity
        return self.embedding_model.encode(text, normalize_embeddings=True)

    def add_knowledge(
        self,
        entities: List[str],
        relations: List[Tuple[str, str, str]],
        documents: List[str],
    ):
        """
        Add knowledge to the graph.

        Args:
            entities: list of entities
            relations: relation triples (subject, predicate, object)
            documents: related documents
        """
        # Add entity nodes
        for entity in entities:
            self.graph.add_node(entity, type="entity")
            self.node_embeddings[entity] = self._embed(entity)

        # Add relation edges
        for subj, pred, obj in relations:
            self.graph.add_edge(subj, obj, relation=pred)

        # Add document nodes
        for i, doc in enumerate(documents):
            doc_id = f"doc_{i}"
            self.graph.add_node(doc_id, type="document", content=doc)
            self.node_embeddings[doc_id] = self._embed(doc)

    def retrieve(
        self,
        query: str,
        k: int = 5,
        max_hops: int = 2,
    ) -> Dict[str, Any]:
        """
        Retrieve relevant knowledge.

        Args:
            query: query text
            k: number of results to return
            max_hops: maximum graph traversal depth

        Returns:
            Dict with the results and the reasoning paths
        """
        query_embedding = self._embed(query)

        # Step 1: vector search to find entry nodes
        entry_nodes = self._vector_search(query_embedding, k=3)
        # Step 2: graph traversal to expand to related nodes
        expanded_nodes = self._graph_expand(entry_nodes, max_hops)
        # Step 3: rerank
        ranked_results = self._rerank(query_embedding, expanded_nodes)
        # Step 4: build reasoning paths
        paths = self._extract_paths(entry_nodes, ranked_results[:k])

        return {
            "results": ranked_results[:k],
            "entry_points": entry_nodes,
            "reasoning_paths": paths,
            "coverage_score": self._calculate_coverage(query, ranked_results[:k]),
        }

    def _vector_search(self, query_embedding: np.ndarray, k: int) -> List[str]:
        """Vector similarity search."""
        scores = []
        for node_id, embedding in self.node_embeddings.items():
            similarity = float(np.dot(query_embedding, embedding))
            scores.append((node_id, similarity))
        scores.sort(key=lambda x: x[1], reverse=True)
        return [node_id for node_id, _ in scores[:k]]

    def _graph_expand(self, seed_nodes: List[str], max_hops: int) -> Set[str]:
        """Expand the seed set by graph traversal, following edges in both directions."""
        expanded = set(seed_nodes)
        current_layer = set(seed_nodes)

        for _ in range(max_hops):
            next_layer = set()
            for node in current_layer:
                if node in self.graph:
                    next_layer.update(self.graph.neighbors(node))
                    next_layer.update(self.graph.predecessors(node))
            expanded.update(next_layer)
            current_layer = next_layer

        return expanded

    def _rerank(self, query_embedding: np.ndarray, candidates: Set[str]) -> List[Dict]:
        """Rerank the candidate nodes."""
        # Compute centrality once instead of once per candidate
        centrality_map = nx.degree_centrality(self.graph)
        scored = []
        for node_id in candidates:
            if node_id in self.node_embeddings:
                # Vector similarity
                vec_score = float(np.dot(query_embedding, self.node_embeddings[node_id]))
                # Graph centrality
                centrality = centrality_map.get(node_id, 0)
                # Combined score
                final_score = 0.7 * vec_score + 0.3 * centrality

                node_data = self.graph.nodes[node_id]
                scored.append({
                    "id": node_id,
                    "score": final_score,
                    "type": node_data.get("type", "unknown"),
                    "content": node_data.get("content", node_id),
                })

        scored.sort(key=lambda x: x["score"], reverse=True)
        return scored

    def _extract_paths(self, entry_nodes: List[str], results: List[Dict]) -> List[List[str]]:
        """Extract reasoning paths from entry nodes to results."""
        paths = []
        for entry in entry_nodes:
            for result in results:
                try:
                    path = nx.shortest_path(self.graph, source=entry, target=result["id"])
                    paths.append(path)
                except nx.NetworkXNoPath:
                    # No directed path between this pair; skip it
                    continue
        return paths

    def _calculate_coverage(self, query: str, results: List[Dict]) -> float:
        """Compute retrieval coverage (simplified: share of query terms found in results)."""
        query_terms = set(query.lower().split())
        covered_terms = set()
        for result in results:
            content = result.get("content", "").lower()
            covered_terms.update(query_terms.intersection(content.split()))
        return len(covered_terms) / len(query_terms) if query_terms else 0.0


# Usage example
router = GraphRAGRouter()

# Add knowledge
router.add_knowledge(
    entities=["Agentic AI", "LLM", "RAG", "Workflow"],
    relations=[
        ("Agentic AI", "uses", "LLM"),
        ("Agentic AI", "uses", "RAG"),
        ("Agentic AI", "implements", "Workflow"),
        ("RAG", "enhances", "LLM"),
    ],
    documents=[
        "Agentic AI systems use LLMs as core reasoning engines",
        "RAG enhances LLM capabilities with external knowledge",
    ],
)

# Retrieve
results = router.retrieve("How does Agentic AI work?", k=3)
```

3.5 Component 4: Smart Model Router Model-Routing Layer

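Technical positioning: Smart Model Router is the "intelligent scheduler" of an Agentic system. It dynamically picks the best model combination based on task complexity, cost budget, and latency requirements.

Routing strategy matrix:

| Task type | Complexity | Recommended model | Cost per 1K tokens | Latency |
|---|---|---|---|---|
| Simple Q&A | Low | Llama 4 8B | $0.0001 | <100ms |
| Text generation | Medium | GPT-5.4-mini | $0.0005 | <200ms |
| Code generation | High | Claude Opus 4.6 | $0.003 | <500ms |
| Multimodal analysis | Very high | GPT-5.4/Grok 4 | $0.005 | <800ms |
| Complex reasoning | Very high | GPT-5.4 + Claude hybrid | $0.008 | <1s |

Routing implementation: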

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class TaskComplexity(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    CRITICAL = "critical"


@dataclass
class ModelConfig:
    name: str
    cost_per_1k_tokens: float
    avg_latency_ms: int
    capabilities: List[str]
    context_window: int


class SmartModelRouter:
    """
    Smart model router.
    Dynamically selects the best model based on task characteristics.
    """

    def __init__(self):
        self.models: Dict[str, ModelConfig] = {
            "llama-4-8b": ModelConfig(
                name="Llama 4 8B",
                cost_per_1k_tokens=0.0001,
                avg_latency_ms=80,
                capabilities=["text", "simple_qa"],
                context_window=128000,
            ),
            "gpt-5.4-mini": ModelConfig(
                name="GPT-5.4 Mini",
                cost_per_1k_tokens=0.0005,
                avg_latency_ms=150,
                capabilities=["text", "code", "reasoning"],
                context_window=256000,
            ),
            "claude-opus-4.6": ModelConfig(
                name="Claude Opus 4.6",
                cost_per_1k_tokens=0.003,
                avg_latency_ms=400,
                capabilities=["text", "code", "reasoning", "analysis"],
                context_window=200000,
            ),
            "gpt-5.4": ModelConfig(
                name="GPT-5.4",
                cost_per_1k_tokens=0.005,
                avg_latency_ms=600,
                capabilities=["text", "code", "reasoning", "multimodal", "analysis"],
                context_window=1000000,
            ),
            "grok-4-moe": ModelConfig(
                name="Grok 4 MoE",
                cost_per_1k_tokens=0.004,
                avg_latency_ms=350,
                capabilities=["text", "code", "reasoning", "multimodal"],
                context_window=500000,
            ),
        }
        self.cost_budget = float("inf")
        self.latency_budget_ms = 1000

    def route(
        self,
        task_description: str,
        required_capabilities: List[str],
        priority: str = "balanced",  # cost, quality, speed, balanced
    ) -> ModelConfig:
        """
        Route to the best model.

        Args:
            task_description: task description (unused in this simplified version)
            required_capabilities: list of required capabilities
            priority: cost, quality, speed, or balanced

        Returns:
            The selected model config
        """
        # Keep only the models that satisfy every required capability
        candidates = [
            model for model in self.models.values()
            if all(cap in model.capabilities for cap in required_capabilities)
        ]
        if not candidates:
            raise ValueError(f"No model supports all required capabilities: {required_capabilities}")

        # Sort according to priority
        if priority == "cost":
            candidates.sort(key=lambda m: m.cost_per_1k_tokens)
        elif priority == "speed":
            candidates.sort(key=lambda m: m.avg_latency_ms)
        elif priority == "quality":
            # Heuristic: context window is used as a proxy for quality
            # (larger models usually score higher)
            candidates.sort(key=lambda m: m.context_window, reverse=True)
        else:  # balanced
            candidates.sort(key=self._balanced_score)

        return candidates[0]

    def _balanced_score(self, model: ModelConfig) -> float:
        """Compute the combined score (lower is better)."""
        # Normalized cost (assumes a maximum cost of 0.01)
        cost_score = model.cost_per_1k_tokens / 0.01
        # Normalized latency (assumes a maximum latency of 1000ms)
        latency_score = model.avg_latency_ms / 1000
        # Capability penalty: more capabilities give a lower (better) value
        capability_score = 1 - (len(model.capabilities) / 10)
        # Weighted sum
        return 0.4 * cost_score + 0.4 * latency_score + 0.2 * capability_score

    def estimate_cost(self, model_name: str, input_tokens: int, output_tokens: int) -> float:
        """
        Estimate the cost of a call.

        Args:
            model_name: registry key (e.g. "gpt-5.4-mini") or display name
            input_tokens: number of input tokens
            output_tokens: number of output tokens

        Returns:
            Estimated cost in USD
        """
        # Accept the display name too, since route() returns a ModelConfig
        # whose `name` differs from the registry key
        model = self.models.get(model_name) or next(
            (m for m in self.models.values() if m.name == model_name), None
        )
        if model is None:
            raise ValueError(f"Unknown model: {model_name}")

        total_tokens = input_tokens + output_tokens
        return (total_tokens / 1000) * model.cost_per_1k_tokens

    def get_fallback_chain(self, primary_model: str) -> List[ModelConfig]:
        """
        Get the fallback chain.

        Args:
            primary_model: registry key of the primary model

        Returns:
            Fallback models, cheapest first
        """
        if primary_model not in self.models:
            return []

        primary = self.models[primary_model]
        # Models that cover the same capabilities at a lower cost
        fallbacks = [
            model for name, model in self.models.items()
            if name != primary_model
            and set(model.capabilities) >= set(primary.capabilities)
            and model.cost_per_1k_tokens < primary.cost_per_1k_tokens
        ]
        fallbacks.sort(key=lambda m: m.cost_per_1k_tokens)
        return fallbacks


# Usage example
router = SmartModelRouter()

# Route a task
model = router.route(
    task_description="Generate Python code for data analysis",
    required_capabilities=["code", "reasoning"],
    priority="balanced",
)
print(f"Selected model: {model.name}")

# Estimate the cost
cost = router.estimate_cost(model.name, input_tokens=2000, output_tokens=500)
print(f"Estimated cost: ${cost:.4f}")
```

3.6 Component 5: Self-Evolving Synthetic Data Layer

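Technical positioning: Self-Evolving Synthetic Data is the "self-training data factory" of an Agentic system. It uses AI to generate high-quality synthetic data so that the system can keep evolving on its own.

Data generation pipeline: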

```python
import random
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional

import openai


@dataclass
class SyntheticDataSample:
    """A synthetic data sample."""
    input_data: Any
    expected_output: Any
    metadata: Dict[str, Any]
    quality_score: float
    generation_method: str


class SyntheticDataGenerator:
    """
    Synthetic data generator.
    Supports several strategies: template filling, LLM generation,
    adversarial generation, and variation generation.
    """

    def __init__(self, llm_client):
        # llm_client is expected to expose the OpenAI-style
        # `chat.completions.create` interface
        self.llm = llm_client
        self.generation_strategies: Dict[str, Callable] = {
            "template": self._template_generation,
            "llm": self._llm_generation,
            "variation": self._variation_generation,
            "adversarial": self._adversarial_generation,
        }

    def generate(
        self,
        task_type: str,
        num_samples: int,
        strategies: Optional[List[str]] = None,
        seed_data: Optional[List[Dict]] = None,
    ) -> List[SyntheticDataSample]:
        """
        Generate synthetic data.

        Args:
            task_type: task type
            num_samples: number of samples
            strategies: list of generation strategies
            seed_data: seed data

        Returns:
            List of synthetic data samples
        """
        if strategies is None:
            strategies = ["template", "llm"]

        samples = []
        samples_per_strategy = num_samples // len(strategies)

        for strategy in strategies:
            if strategy in self.generation_strategies:
                strategy_samples = self.generation_strategies[strategy](
                    task_type=task_type,
                    num_samples=samples_per_strategy,
                    seed_data=seed_data,
                )
                samples.extend(strategy_samples)

        # Quality filter
        filtered_samples = [s for s in samples if s.quality_score > 0.7]
        return filtered_samples[:num_samples]

    def _template_generation(
        self, task_type: str, num_samples: int, seed_data: Optional[List[Dict]]
    ) -> List[SyntheticDataSample]:
        """Template-based generation."""
        templates = self._get_templates(task_type)
        if not templates:
            # Unknown task type: nothing to fill (random.choice would fail)
            return []

        samples = []
        for _ in range(num_samples):
            template = random.choice(templates)
            filled = self._fill_template(template)
            samples.append(SyntheticDataSample(
                input_data=filled["input"],
                expected_output=filled["output"],
                metadata={"template_id": template["id"]},
                quality_score=0.8,  # template filling is fairly reliable
                generation_method="template",
            ))
        return samples

    def _llm_generation(
        self, task_type: str, num_samples: int, seed_data: Optional[List[Dict]]
    ) -> List[SyntheticDataSample]:
        """LLM-based generation."""
        generation_prompt = f"""
        Generate {num_samples} synthetic training samples for task type: {task_type}.

        Format each sample as:
        Input: [input data]
        Output: [expected output]

        Ensure diversity and coverage of edge cases.
        """
        response = self.llm.chat.completions.create(
            model="gpt-5.4-mini",
            messages=[{"role": "user", "content": generation_prompt}],
        )

        # Parse the generated samples
        generated_text = response.choices[0].message.content
        parsed_samples = self._parse_generated_samples(generated_text)

        samples = []
        for parsed in parsed_samples:
            samples.append(SyntheticDataSample(
                input_data=parsed["input"],
                expected_output=parsed["output"],
                metadata={},
                quality_score=self._evaluate_quality(parsed),
                generation_method="llm",
            ))
        return samples

    def _variation_generation(
        self, task_type: str, num_samples: int, seed_data: Optional[List[Dict]]
    ) -> List[SyntheticDataSample]:
        """Variation-based generation (requires seed data)."""
        if not seed_data:
            return []

        samples = []
        for _ in range(num_samples):
            seed = random.choice(seed_data)
            variation_prompt = f"""
            Create a variation of the following sample:

            Original Input: {seed['input']}
            Original Output: {seed['output']}

            Generate a similar but different sample with the same underlying pattern.
            """
            response = self.llm.chat.completions.create(
                model="gpt-5.4-mini",
                messages=[{"role": "user", "content": variation_prompt}],
            )
            varied = self._parse_variation(response.choices[0].message.content)
            samples.append(SyntheticDataSample(
                input_data=varied["input"],
                expected_output=varied["output"],
                metadata={"seed_id": seed.get("id")},
                quality_score=0.75,
                generation_method="variation",
            ))
        return samples

    def _adversarial_generation(
        self, task_type: str, num_samples: int, seed_data: Optional[List[Dict]]
    ) -> List[SyntheticDataSample]:
        """Adversarial sample generation."""
        adversarial_prompt = f"""
        Generate adversarial test cases for task type: {task_type}.

        These should be:
        1. Semantically valid but potentially confusing
        2. Edge cases that might break the system
        3. Ambiguous inputs requiring careful handling

        Format: Input | Expected Behavior
        """
        # Note: this only works if the client's endpoint serves this model
        # name (for example through an OpenAI-compatible gateway)
        response = self.llm.chat.completions.create(
            model="claude-opus-4.6",
            messages=[{"role": "user", "content": adversarial_prompt}],
        )

        # Parse the adversarial samples
        adversarial_samples = self._parse_adversarial(response.choices[0].message.content)

        samples = []
        for adv in adversarial_samples[:num_samples]:
            samples.append(SyntheticDataSample(
                input_data=adv["input"],
                expected_output=adv["expected_behavior"],
                metadata={"type": "adversarial"},
                quality_score=0.9,  # adversarial samples are usually high value
                generation_method="adversarial",
            ))
        return samples

    def _get_templates(self, task_type: str) -> List[Dict]:
        """Get the templates for a task type."""
        # Template library
        templates = {
            "qa": [
                {"id": "qa_1", "input_template": "What is {topic}?", "output_template": "{topic} is defined as..."},
                {"id": "qa_2", "input_template": "Explain {concept} in simple terms", "output_template": "{concept} can be understood as..."},
            ],
            "code": [
                {"id": "code_1", "input_template": "Write a function to {operation}", "output_template": "def {function_name}():..."},
            ],
        }
        return templates.get(task_type, [])

    def _fill_template(self, template: Dict) -> Dict:
        """Fill a template (simplified: fixed placeholder values)."""
        # Supply every placeholder used by the templates above;
        # str.format ignores keyword arguments a template does not use
        values = {
            "topic": "AI",
            "concept": "AI",
            "operation": "sort a list",
            "function_name": "sort_list",
        }
        return {
            "input": template.get("input_template", "").format(**values),
            "output": template.get("output_template", "").format(**values),
        }

    def _parse_generated_samples(self, text: str) -> List[Dict]:
        """Parse generated samples (simplified placeholder parser)."""
        return [{"input": text[:100], "output": text[100:200]}]

    def _parse_variation(self, text: str) -> Dict:
        """Parse a variation sample (simplified placeholder parser)."""
        return {"input": text, "output": "varied output"}

    def _parse_adversarial(self, text: str) -> List[Dict]:
        """Parse adversarial samples (simplified placeholder parser)."""
        return [{"input": text[:50], "expected_behavior": "handle carefully"}]

    def _evaluate_quality(self, sample: Dict) -> float:
        """Evaluate sample quality with simple heuristic rules."""
        score = 0.5
        if len(sample.get("input", "")) > 10:
            score += 0.2
        if len(sample.get("output", "")) > 20:
            score += 0.2
        return min(score, 1.0)


# Usage example
generator = SyntheticDataGenerator(llm_client=openai.OpenAI())

samples = generator.generate(
    task_type="qa",
    num_samples=100,
    strategies=["template", "llm", "variation"],
)
print(f"Generated {len(samples)} synthetic samples")
```

4. Transition Paths and Implementation Guide

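4.1 Three Steps for Individuals (0-1 Week)

Step 1: Environment Setup and Fundamentals (Day 1-2)

Task list:

- Install a LangGraph/LlamaIndex development environment
- Complete the official Quickstart tutorial
- Read the source code of 3 open-source Agent projects
- Understand the basic StateGraph concepts

Recommended resources:

| Resource type | Name | Estimated time | Priority |
|---|---|---|---|
| Documentation | LangGraph Quickstart | 2 hours | ★★★★★ |
| Video | "Building Agentic AI" by LangChain | 3 hours | ★★★★☆ |
| Code | langgraph-example-repo | 4 hours | ★★★★★ |
| Paper | "ReAct: Synergizing Reasoning and Acting" | 2 hours | ★★★☆☆ |

Step 2: Building Your First Agent (Day 3-5)

Hands-on project: build a "smart research assistant Agent"

Functional requirements:

- Accept a research topic
- Search for related material automatically
- Extract the key information
- Generate a structured report
- Support multi-turn interactive refinement

Code skeleton: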

```python
from typing import TypedDict

from langgraph.graph import END, StateGraph


class ResearchState(TypedDict):
    topic: str
    search_results: list
    extracted_info: dict
    report: str
    iteration: int


class ResearchAssistant:
    def __init__(self):
        self.workflow = self._build_workflow()

    def _build_workflow(self):
        workflow = StateGraph(ResearchState)

        # Add nodes
        workflow.add_node("search", self._search_node)
        workflow.add_node("extract", self._extract_node)
        workflow.add_node("generate", self._generate_node)
        workflow.add_node("review", self._review_node)

        # Set up edges
        workflow.set_entry_point("search")
        workflow.add_edge("search", "extract")
        workflow.add_edge("extract", "generate")
        workflow.add_edge("generate", "review")
        workflow.add_conditional_edges(
            "review",
            self._should_continue,
            {"continue": "search", "end": END},
        )

        return workflow.compile()

    def run(self, topic: str):
        return self.workflow.invoke({
            "topic": topic,
            "search_results": [],
            "extracted_info": {},
            "report": "",
            "iteration": 0,
        })

    def _search_node(self, state: ResearchState):
        # Implement the search logic here; the stub passes the state through
        return {"search_results": state["search_results"]}

    def _extract_node(self, state: ResearchState):
        # Implement the information extraction logic here
        return {"extracted_info": state["extracted_info"]}

    def _generate_node(self, state: ResearchState):
        # Implement the report generation logic here
        return {"report": state["report"]}

    def _review_node(self, state: ResearchState):
        # Implement the report review logic here; counting each pass lets
        # _should_continue terminate the loop
        return {"iteration": state["iteration"] + 1}

    def _should_continue(self, state: ResearchState):
        if state["iteration"] < 3:
            return "continue"
        return "end"


# Run
assistant = ResearchAssistant()
result = assistant.run("Agentic AI trends 2026")
```

Step 3: Optimization and Deployment (Day 6-7)

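Optimization checklist:

- Add error handling and a retry mechanism
- Implement cost monitoring
- Add logging and observability
- Deploy locally or to a cloud platform
- Write the project documentation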
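The cost-monitoring and logging items can start very small. The sketch below is one possible shape, not a prescribed design: `CostTracker`, its fields, and the 80% alert ratio are hypothetical choices made for illustration, and the per-1K-token price is whatever your provider charges.

```python
import logging
from dataclasses import dataclass, field
from typing import Dict

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("agent.cost")


@dataclass
class CostTracker:
    """Accumulates spend per model and warns when a budget share is reached."""
    budget_usd: float
    alert_ratio: float = 0.8          # warn at 80% of the budget (assumption)
    spent_usd: float = 0.0
    by_model: Dict[str, float] = field(default_factory=dict)

    def record(self, model: str, tokens: int, price_per_1k: float) -> float:
        """Record one call and return its cost in USD."""
        cost = tokens / 1000 * price_per_1k
        self.spent_usd += cost
        self.by_model[model] = self.by_model.get(model, 0.0) + cost
        logger.info("model=%s tokens=%d cost=%.6f total=%.6f",
                    model, tokens, cost, self.spent_usd)
        if self.over_alert_threshold():
            logger.warning("spend is at %.0f%% of budget",
                           100 * self.spent_usd / self.budget_usd)
        return cost

    def over_alert_threshold(self) -> bool:
        return self.spent_usd >= self.alert_ratio * self.budget_usd


tracker = CostTracker(budget_usd=1.0)
tracker.record("gpt-5.4", tokens=100_000, price_per_1k=0.005)
first_alert = tracker.over_alert_threshold()
tracker.record("gpt-5.4", tokens=80_000, price_per_1k=0.005)
second_alert = tracker.over_alert_threshold()
```

Calling `record` from every model-invoking node gives a per-model breakdown for free, which is usually the first thing needed when a bill looks wrong.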

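4.2 Three Phases for Teams (30 Days)

Phase 1: Technical Research and Architecture Design (Week 1)

Deliverables:

- Technology selection report
- System architecture design document
- Development environment setup guide
- Team training plan

Key decisions:

Phase 2: MVP Development and Internal Validation (Week 2-3)

Milestones:

- Day 8-10: core Workflow implementation
- Day 11-13: tool integration and the Memory system
- Day 14-16: model Router and cost optimization
- Day 17-18: internal testing and bug fixing
- Day 19-20: documentation and knowledge transfer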

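Phase 3: Production Deployment and Continuous Optimization (Week 4)

Deployment checklist:

- Production environment configuration
- Monitoring and alerting system
- Canary (gradual) release strategy
- Rollback mechanism
- Performance benchmarking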
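For the canary release and rollback items, one common technique is deterministic hash-based bucketing: each user lands in a stable bucket, and a single percentage controls how many buckets see the new version. The sketch below assumes hypothetical version labels `agent-v1` and `agent-v2`; the function names are illustrative.

```python
import hashlib


def in_canary(user_id: str, rollout_percent: int) -> bool:
    """Deterministically assign a user to the canary cohort."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < rollout_percent


def pick_version(user_id: str, rollout_percent: int) -> str:
    """Return which agent version should serve this user."""
    return "agent-v2" if in_canary(user_id, rollout_percent) else "agent-v1"


# Raising the percentage only ever adds users to the canary cohort,
# and setting it to 0 is an immediate rollback.
for percent in (0, 10, 50, 100):
    served = sum(in_canary(f"user-{i}", percent) for i in range(1000))
    print(f"{percent}% rollout -> {served} of 1000 users on agent-v2")
```

Because the bucket depends only on the user ID, a user who saw the new version at 10% keeps seeing it at 50%, which keeps sessions consistent during the rollout.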

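5. Pitfall Guide for the Transition

5.1 Claude 4.6 vs Grok 4 Selection Traps

Common misconceptions:

| Misconception | Mistaken belief | Correct understanding |
|---|---|---|
| Parameter worship | More parameters means more capability | Architecture design (MoE) matters more than parameter count |
| Single-vendor choice | Use Claude for everything, or Grok for everything | Mix them to play to each one's strengths |
| Ignoring cost | Looking only at capability, not cost | TCO (total cost of ownership) is what matters |
| Ignoring the ecosystem | Looking only at the model, not the toolchain | Ecosystem maturity affects development efficiency |

Selection decision matrix:

| Scenario | Recommended choice | Reason |
|---|---|---|
| Long-document analysis | Claude Opus 4.6 | 200K context, strong analysis |
| Code generation | Grok 4 MoE | Outstanding code understanding |
| Multimodal processing | GPT-5.4 | Native multimodal support |
| Cost-sensitive | Llama 4 + Router | Open source plus routing lowers cost |
| Real-time interaction | GPT-5.4-mini | Low latency, controllable cost |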

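5.2 Avoiding Technical Debt

High-risk technical debt:

- Prompt templates scattered everywhere
  - Risk: hard to maintain, chaotic versions
  - Mitigation: a unified prompt library under version control
- Hard-coded model selection
  - Risk: cost cannot be optimized dynamically
  - Mitigation: use the Smart Router abstraction
- Missing error handling
  - Risk: a fragile system that crashes easily
  - Mitigation: every node needs a degradation strategy
- No cost monitoring
  - Risk: runaway costs
  - Mitigation: real-time cost tracking and alerting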
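As an illustration of the first mitigation, a unified prompt library can begin as a small in-process registry keyed by name and version. This is a sketch only: `PromptRegistry` and its methods are hypothetical, and a real setup would load templates from files tracked in version control.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PromptRegistry:
    """Single source of truth for prompt templates, keyed by name and version."""
    _store: Dict[str, List[str]] = field(default_factory=dict)

    def register(self, name: str, template: str) -> int:
        """Add a new version of a template and return its 1-based version number."""
        versions = self._store.setdefault(name, [])
        versions.append(template)
        return len(versions)

    def get(self, name: str, version: int = 0) -> str:
        """Fetch a template; version=0 means the latest one."""
        versions = self._store[name]
        return versions[version - 1] if version else versions[-1]

    def render(self, name: str, version: int = 0, **values: str) -> str:
        """Fill the placeholders of the chosen template version."""
        return self.get(name, version).format(**values)


registry = PromptRegistry()
registry.register("planner", "Draft a plan for: {task}")
registry.register("planner", "Draft a numbered, step-by-step plan for: {task}")

latest = registry.render("planner", task="market analysis")
pinned = registry.render("planner", version=1, task="market analysis")
print(latest)
print(pinned)
```

Nodes then ask the registry for a named prompt instead of embedding strings, so a prompt change becomes one reviewed edit and older versions stay available for rollback or A/B comparison.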

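5.3 Cost-Control Red Lines

Cost budget formula:

Cost-control strategies:

- Tiered routing: small models for simple tasks, large models for complex tasks
- Result caching: return the cached result directly for identical inputs
- Batching: merge requests to reduce the number of API calls
- Asynchronous processing: use batch processing for non-real-time tasks to lower the unit price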
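The first two strategies combine naturally. The following sketch caches identical prompts and sends short prompts to a cheaper tier. The class name, the prompt-length threshold used as a complexity proxy, and the two stand-in model callables are all assumptions made for illustration; a real router would use a better complexity signal.

```python
import hashlib
from typing import Callable, Dict


class CachedTieredClient:
    """Route short prompts to a cheap model and cache identical requests."""

    def __init__(
        self,
        small: Callable[[str], str],
        large: Callable[[str], str],
        length_threshold: int = 200,  # crude complexity proxy (assumption)
    ):
        self.small = small
        self.large = large
        self.length_threshold = length_threshold
        self.cache: Dict[str, str] = {}
        self.stats = {"small": 0, "large": 0, "cache_hit": 0}

    def ask(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            # Identical input: no model call, no cost
            self.stats["cache_hit"] += 1
            return self.cache[key]
        tier = "small" if len(prompt) < self.length_threshold else "large"
        model = self.small if tier == "small" else self.large
        answer = model(prompt)
        self.stats[tier] += 1
        self.cache[key] = answer
        return answer


# Stand-in models; in practice these would wrap real API clients
client = CachedTieredClient(
    small=lambda p: "small:" + p[:10],
    large=lambda p: "large:" + p[:10],
)
client.ask("What is RAG?")
client.ask("What is RAG?")              # served from the cache
client.ask("Analyze " + "x" * 300)      # long prompt goes to the large tier
print(client.stats)
```

Exact-match caching only helps with repeated inputs; semantic caching over embeddings is a common next step but adds its own correctness risks.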

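6. Summary and Action Checklist

6.1 Key Points Recap

- The paradigm shift is irreversible: the move from model-centric to Agentic-system-centric is the main theme of the AI industry in 2026
- The tech stack is getting more complex: five core components (Workflow, Memory, GraphRAG, Router, Synthetic Data) make up the complete stack
- The transition path is clear: the 0-1 week path for individuals and the 30-day path for teams have been validated as feasible
- The pitfall guide matters: avoiding selection traps, technical debt, and cost overruns are the three safeguards of a successful transition

6.2 Tech Stack Selection Quick Reference

| Component | Startup team | Mid-size team | Enterprise team |
|---|---|---|---|
| Workflow | Dify | LangGraph | LangGraph + in-house |
| Memory | In-Memory | Redis + Vector DB | Distributed Memory cluster |
| GraphRAG | Lightweight implementation | Neo4j + Vector | Enterprise-grade Graph DB |
| Router | Rule-based routing | Smart Router | Adaptive Router |
| Synthetic Data | Template generation | LLM generation | Multi-strategy mix |

6.3 Immediate Action Checklist

Today:

- Read the official LangGraph documentation
- Draw the Agentic system architecture as you understand it
- List 5 model-centric pain points in your current project

This week:

- Set up the development environment
- Finish your first Agent prototype
- Share the tech stack panorama with your team

This month:

- Finish the technology selection report
- Start MVP development
- Set up a cost-monitoring system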

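References:

- Primary source: LangGraph Documentation (official Agent framework documentation)
- Supporting: LlamaIndex Workflows (Workflow orchestration guide)
- Supporting: GraphRAG Paper (GraphRAG technical paper)
- Supporting: MCP Protocol (Model Context Protocol specification)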

Appendix:

A. Detailed Tech Stack Comparison

| Dimension | LangGraph | LlamaIndex | CrewAI | Dify |
|---|---|---|---|---|
| Learning curve | Steep | Moderate | Gentle | Gentle |
| Flexibility | ★★★★★ | ★★★★☆ | ★★★☆☆ | ★★★☆☆ |
| Community support | ★★★★★ | ★★★★★ | ★★★☆☆ | ★★★★☆ |
| Enterprise readiness | ★★★★★ | ★★★★★ | ★★★☆☆ | ★★★★☆ |
| Visualization | ★★★☆☆ | ★★★☆☆ | ★★★★☆ | ★★★★★ |

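B. Cost Estimation Model

Assuming 10,000 calls per day at an average of 2K tokens per call:

| Option | Daily cost | Monthly cost | Yearly cost |
|---|---|---|---|
| All GPT-5.4 | $100 | $3,000 | $36,000 |
| Smart Router | $35 | $1,050 | $12,600 |
| Open source + API hybrid | $20 | $600 | $7,200 |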
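The table can be reproduced with a few lines of arithmetic. The sketch below takes the $0.005 per 1K tokens listed for GPT-5.4 in the routing matrix, and assumes a 30-day month and a 12-month year, which is what the table's own figures imply. The blended prices for the other two rows are derived from their daily cost, not taken from the article.

```python
CALLS_PER_DAY = 10_000     # from the appendix assumption
TOKENS_PER_CALL = 2_000    # "2K tokens" per call
DAYS_PER_MONTH = 30        # implied by $100/day -> $3,000/month


def daily_cost(price_per_1k: float) -> float:
    """Daily spend when every call is billed at one flat per-1K-token price."""
    return CALLS_PER_DAY * TOKENS_PER_CALL / 1000 * price_per_1k


def implied_price_per_1k(daily_usd: float) -> float:
    """Blended per-1K-token price implied by a daily spend figure."""
    return daily_usd / (CALLS_PER_DAY * TOKENS_PER_CALL / 1000)


def project(daily_usd: float) -> dict:
    """Extend a daily figure to monthly and yearly totals."""
    monthly = daily_usd * DAYS_PER_MONTH
    return {"daily": daily_usd, "monthly": monthly, "yearly": monthly * 12}


baseline = project(daily_cost(0.005))  # all GPT-5.4
smart_router = project(35.0)           # daily figure from the table
hybrid = project(20.0)                 # daily figure from the table

for name, plan in [("all GPT-5.4", baseline),
                   ("smart router", smart_router),
                   ("open source + API", hybrid)]:
    saving = 1 - plan["daily"] / baseline["daily"]
    print(f"{name}: ${plan['yearly']:,.0f}/year, "
          f"blended ${implied_price_per_1k(plan['daily']):.5f}/1K, "
          f"saving {saving:.0%}")
```

Read this way, the Smart Router row corresponds to a blended price of about $0.00175 per 1K tokens (a 65% saving) and the hybrid row to $0.001 per 1K tokens (an 80% saving).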

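C. Team Skill Matrix

Skills needed for the transition:

| Skill | Junior | Mid-level | Senior | Suggested staffing |
|---|---|---|---|---|
| Python | Required | Required | Required | Everyone |
| LangGraph | Recommended | Required | Recommended | 2-3 people |
| Vector DB | Optional | Recommended | Recommended | 1-2 people |
| Graph DB | Optional | Optional | Recommended | 1 person |
| DevOps | Optional | Recommended | Required | 1-2 people |

Keywords: Agentic systems, tech stack transition, LangGraph, Multimodal Memory, GraphRAG, Smart Router, Synthetic Data, model-centric, system-centric, 2026 trends, 安全风信子, technical deep dive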


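This article is part of the Tencent Cloud self-media syndication program and is shared from the author's personal site/blog.

Originally published: 2026-04-01. For infringement concerns, contact cloudcommunity@tencent.com for removal.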
