How do I call a TensorFlow model on a linspace?

Asked 2022-03-31 12:28:58

I am trying to call a TensorFlow model on a linspace, but I can't get even a very simple example (based on https://www.tensorflow.org/api_docs/python/tf/keras/Model) to work:

    import tensorflow as tf

    class FeedForward(tf.keras.Model):

        def __init__(self):
            super().__init__()
            self.dense1 = tf.keras.layers.Dense(4, activation=tf.nn.relu, name='lyr1')
            self.dense2 = tf.keras.layers.Dense(5, activation=tf.nn.softmax, name='lyr2')

        def call(self, inputs, training=False):
            x = self.dense1(inputs)  # <--- error here
            return self.dense2(x)

    model = FeedForward()
    batchSize = 2048
    preX = tf.linspace(0.0, 10.0, batchSize)
    model(preX, training=True)

I get the following error (on the line marked above):

    ValueError: Exception encountered when calling layer "feed_forward_87" (type FeedForward).

    Input 0 of layer "lyr1" is incompatible with the layer: expected min_ndim=2, found ndim=1. Full shape received: (2048,)

    Call arguments received:
      • inputs=tf.Tensor(shape=(2048,), dtype=float32)
      • training=True

I tried adding an input layer:

    import tensorflow as tf

    class FeedForward(tf.keras.Model):

        def __init__(self):
            super().__init__()
            self.inLyr = tf.keras.layers.Input(shape=(2048,), name='inp')
            self.dense1 = tf.keras.layers.Dense(4, activation=tf.nn.relu, name='lyr1')
            self.dense2 = tf.keras.layers.Dense(5, activation=tf.nn.softmax, name='lyr2')

        def call(self, inputs, training=False):
            x = self.inLyr(inputs)  # <---- error here
            x = self.dense1(x)
            return self.dense2(x)

    model = FeedForward()
    batchSize = 2048
    preX = tf.linspace(0.0, 10.0, batchSize)
    model(preX, training=True)

But then the error becomes:

    TypeError: Exception encountered when calling layer "feed_forward_88" (type FeedForward).

    'KerasTensor' object is not callable

    Call arguments received:
      • inputs=tf.Tensor(shape=(2048,), dtype=float32)
      • training=True

Any help is greatly appreciated.

2 Answers

Stack Overflow user

Accepted answer

Answered 2022-03-31 12:31:04

You don't need an explicit Input layer. You are just missing a dimension. Try using tf.expand_dims to get the required input shape (batch_size, features).
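To make the missing dimension concrete, here is a minimal shape check. It is only a sketch: the variable names (`flat`, `expanded`, `sliced`, `reshaped`) are illustrative and not part of the original answer, and the `tf.newaxis` and `tf.reshape` forms are shown as alternatives that should give the same shape as `tf.expand_dims`.

```python
import tensorflow as tf

batch_size = 2048  # same batch size as in the question

# With scalar endpoints, tf.linspace returns a rank-1 tensor of 2048 values.
flat = tf.linspace(0.0, 10.0, batch_size)

# Three ways to add a trailing feature axis, giving (batch_size, 1).
expanded = tf.expand_dims(flat, axis=-1)
sliced = flat[:, tf.newaxis]
reshaped = tf.reshape(flat, (-1, 1))

print(flat.shape, expanded.shape, sliced.shape, reshaped.shape)

# A Dense layer needs at least rank 2; it maps the 1 feature to 4 units.
dense = tf.keras.layers.Dense(4, activation="relu")
out = dense(expanded)
print(out.shape)
```

Any of the three rank-2 tensors can be passed to the model; only the rank-1 `flat` tensor triggers the `min_ndim=2` error.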

    import tensorflow as tf

    class FeedForward(tf.keras.Model):

        def __init__(self):
            super().__init__()
            self.dense1 = tf.keras.layers.Dense(4, activation=tf.nn.relu, name='lyr1')
            self.dense2 = tf.keras.layers.Dense(5, activation=tf.nn.softmax, name='lyr2')

        def call(self, inputs, training=False):
            x = self.dense1(inputs)
            return self.dense2(x)

    model = FeedForward()
    batchSize = 2048
    # Add a trailing feature axis: shape (2048,) -> (2048, 1)
    preX = tf.expand_dims(tf.linspace(0.0, 10.0, batchSize), axis=-1)
    model(preX, training=True)

    <tf.Tensor: shape=(2048, 5), dtype=float32, numpy=
    array([[2.0000000e-01, 2.0000000e-01, 2.0000000e-01, 2.0000000e-01,
            2.0000000e-01],
           [2.0061693e-01, 1.9939975e-01, 1.9954492e-01, 2.0115443e-01,
            1.9928394e-01],
           [2.0123295e-01, 1.9879854e-01, 1.9908811e-01, 2.0231272e-01,
            1.9856769e-01],
           ...,
           [4.1859145e-03, 1.6480753e-08, 7.3006660e-08, 9.9581391e-01,
            5.0233933e-09],
           [4.1747764e-03, 1.6337188e-08, 7.2423312e-08, 9.9582505e-01,
            4.9767293e-09],
           [4.1636685e-03, 1.6194873e-08, 7.1844603e-08, 9.9583614e-01,
            4.9305160e-09]], dtype=float32)>

Or use an Input layer:

    class FeedForward(tf.keras.Model):

        def __init__(self):
            super().__init__()
            # Build a functional model with one feature per sample
            inputs = tf.keras.layers.Input((1,))
            dense1 = tf.keras.layers.Dense(4, activation=tf.nn.relu, name='lyr1')(inputs)
            dense2 = tf.keras.layers.Dense(5, activation=tf.nn.softmax, name='lyr2')(dense1)
            self.model = tf.keras.Model(inputs, dense2)

        def call(self, inputs, training=False):
            return self.model(inputs)

Stack Overflow user

Answered 2022-03-31 13:44:47

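I also learned from your answer and found that the function may need to work the way dense_1 and dense_2 do, so I rebuilt it as a Sequential model.

For the error, this is the fix:

    preX = tf.linspace([0.0], [10.0], batchSize, axis=0)

Sample: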

import tensorflow as tf

import numpy as np

import matplotlib.pyplot as plt

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

: Varibles

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

batchSize = 2048

preX = tf.linspace([0.0], [10.0], batchSize, axis=0)

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

: Class

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

class FeedForward(tf.keras.Model):

def __init__(self):

super().__init__()

self.input1 = tf.keras.layers.InputLayer(input_shape=(2048, 1))

self.dense1 = tf.keras.layers.Dense(4, activation=tf.nn.relu, name='lyr1')

self.dense2 = tf.keras.layers.Dense(5, activation=tf.nn.softmax, name='lyr2')

self.Q_Model = tf.keras.models.Sequential([ ])

self.Q_Model.add(self.input1)

self.Q_Model.add(self.dense1)

self.Q_Model.add(self.dense2)

self.optimizer = tf.keras.optimizers.Nadam(

learning_rate=0.0001, beta_1=0.9, beta_2=0.999, epsilon=1e-07,

name='Nadam'

)

self.lossfn = tf.keras.losses.MeanSquaredLogarithmicError(reduction=tf.keras.losses.Reduction.AUTO, name='mean_squared_logarithmic_error')

self.Q_Model.compile(optimizer=self.optimizer, loss=self.lossfn, metrics=['accuracy'])

def call(self, inputs, training=False):

inputs = tf.constant( inputs, dtype=tf.float32, shape=(1, 2048, 1) )

dataset = tf.data.Dataset.from_tensor_slices(([inputs], [inputs]))

history = self.Q_Model.fit(dataset, epochs=10)

return history

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

: Running

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

model = FeedForward()

history = model(preX, training=True)

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

: Summary

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

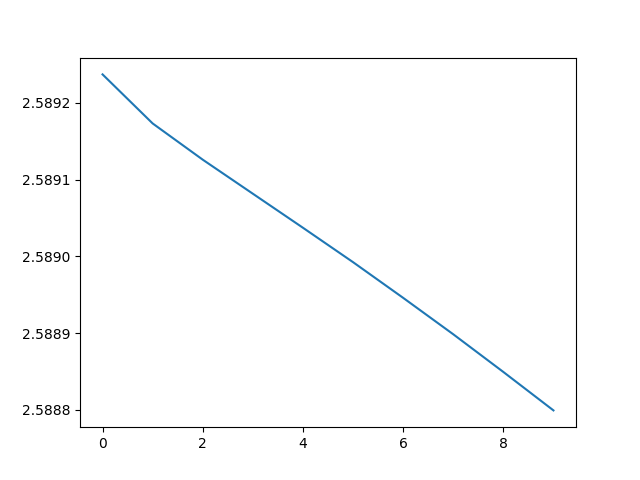

plt.plot(history.history['loss'])

plt.show()

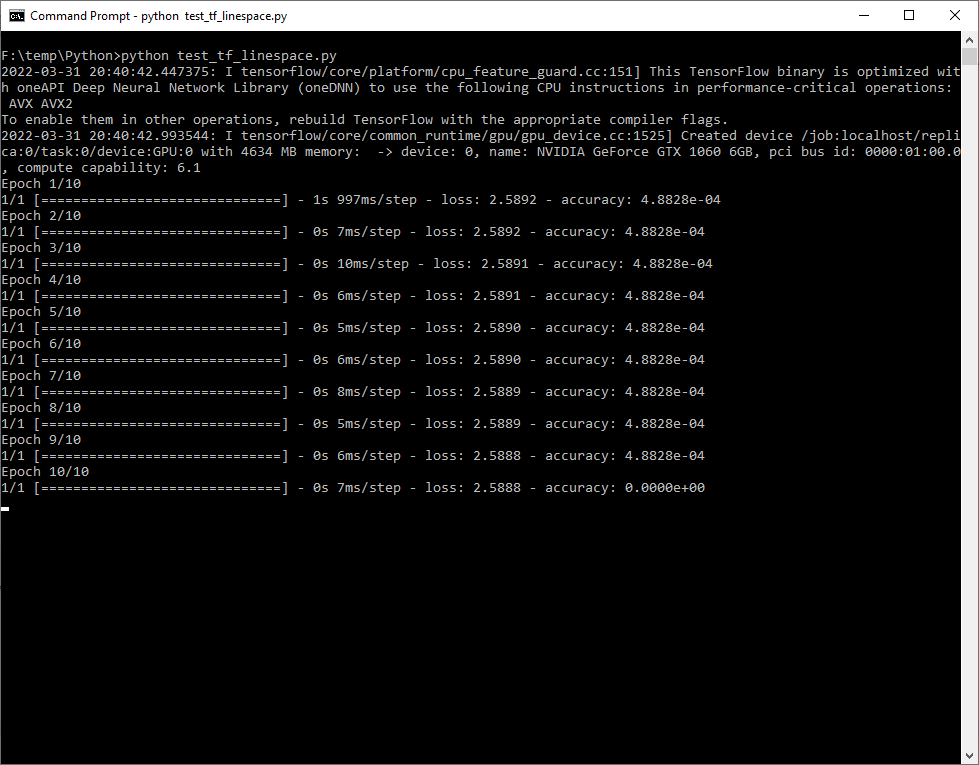

plt.close()输出

    Epoch 1/10
    1/1 [==============================] - 1s 997ms/step - loss: 2.5892 - accuracy: 4.8828e-04
    Epoch 2/10
    1/1 [==============================] - 0s 7ms/step - loss: 2.5892 - accuracy: 4.8828e-04
    Epoch 3/10
    1/1 [==============================] - 0s 10ms/step - loss: 2.5891 - accuracy: 4.8828e-04
    Epoch 4/10
    1/1 [==============================] - 0s 6ms/step - loss: 2.5891 - accuracy: 4.8828e-04
    Epoch 5/10
    1/1 [==============================] - 0s 5ms/step - loss: 2.5890 - accuracy: 4.8828e-04
    Epoch 6/10
    1/1 [==============================] - 0s 6ms/step - loss: 2.5890 - accuracy: 4.8828e-04
    Epoch 7/10
    1/1 [==============================] - 0s 8ms/step - loss: 2.5889 - accuracy: 4.8828e-04
    Epoch 8/10
    1/1 [==============================] - 0s 5ms/step - loss: 2.5889 - accuracy: 4.8828e-04
    Epoch 9/10
    1/1 [==============================] - 0s 6ms/step - loss: 2.5888 - accuracy: 4.8828e-04
    Epoch 10/10
    1/1 [==============================] - 0s 7ms/step - loss: 2.5888 - accuracy: 0.0000e+00
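The `tf.linspace([0.0], [10.0], batchSize, axis=0)` call in this answer builds the feature axis directly instead of adding it afterwards. The following sketch compares it with the `tf.expand_dims` approach; the names `via_axis`, `via_expand`, and `max_diff` are illustrative and not from the original posts.

```python
import tensorflow as tf

batch_size = 2048

# Vector endpoints with axis=0 put the sample axis first: shape (2048, 1).
via_axis = tf.linspace([0.0], [10.0], batch_size, axis=0)

# Scalar endpoints followed by an explicit feature axis: also shape (2048, 1).
via_expand = tf.expand_dims(tf.linspace(0.0, 10.0, batch_size), axis=-1)

print(via_axis.shape, via_expand.shape)

# Largest element-wise difference between the two tensors.
max_diff = float(tf.reduce_max(tf.abs(via_axis - via_expand)))
print(max_diff)
```

Both forms should produce the same (batch_size, features) input, so the choice between them is mostly a matter of readability.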

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/71692505
