MySQL Source-Replica (Master-Slave) Replication Explained: Internals, Replication Modes, and Diagnosing and Fixing Replication Lag

果酱带你啃java

Published 2026-04-14 15:30:09

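1. Core Value and Basic Concepts of Replication

MySQL source-replica replication is the foundation of most highly available, high-throughput MySQL architectures. It keeps multiple nodes consistent by shipping and replaying logs, and it serves four main purposes:

- High availability and disaster recovery: when the source fails, traffic can be switched to a replica quickly, which removes the single point of failure

- Read/write splitting and horizontal read scaling: writes go to the source and reads are spread across several replicas, which relieves the I/O and CPU limits of a single node

- Backups and offline analytics: backups, reports, and offline analysis run on a replica and do not consume source resources or disturb online traffic

- Geo-distributed deployment: replicas in other regions serve nearby reads and provide region-level disaster recovery

Core components

Before looking at internals, it helps to separate two logs that are easy to confuse: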

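| Log | Layer | Nature | Purpose | Lifecycle |
|---|---|---|---|---|
| binlog (binary log) | MySQL Server layer | Logical log | Records every data-changing operation; the data carrier for replication | Appended and rotated into new files; old files expire according to the configured retention |
| redo log | InnoDB storage engine layer | Physical log | Provides transaction durability; crash recovery uses it so committed transactions are not lost | Fixed size, overwritten circularly |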

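Replication is carried by the binlog, which lives in the server layer and is independent of the storage engine, so both InnoDB and MyISAM tables can be replicated.

The binlog supports three formats; MySQL 8.0 defaults to ROW:

- STATEMENT: statement-based replication that logs the SQL text. Logs are small, but non-deterministic functions, triggers, and similar constructs can leave source and replica inconsistent. It is rarely used today

- ROW: row-based replication that logs the before and after images of each changed row. Consistency is strong because replay does not depend on SQL execution context; the cost is a larger log

- MIXED: switches between STATEMENT and ROW automatically to balance size and consistency; used only in specific compatibility scenarios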

二、主从复制全链路底层原理

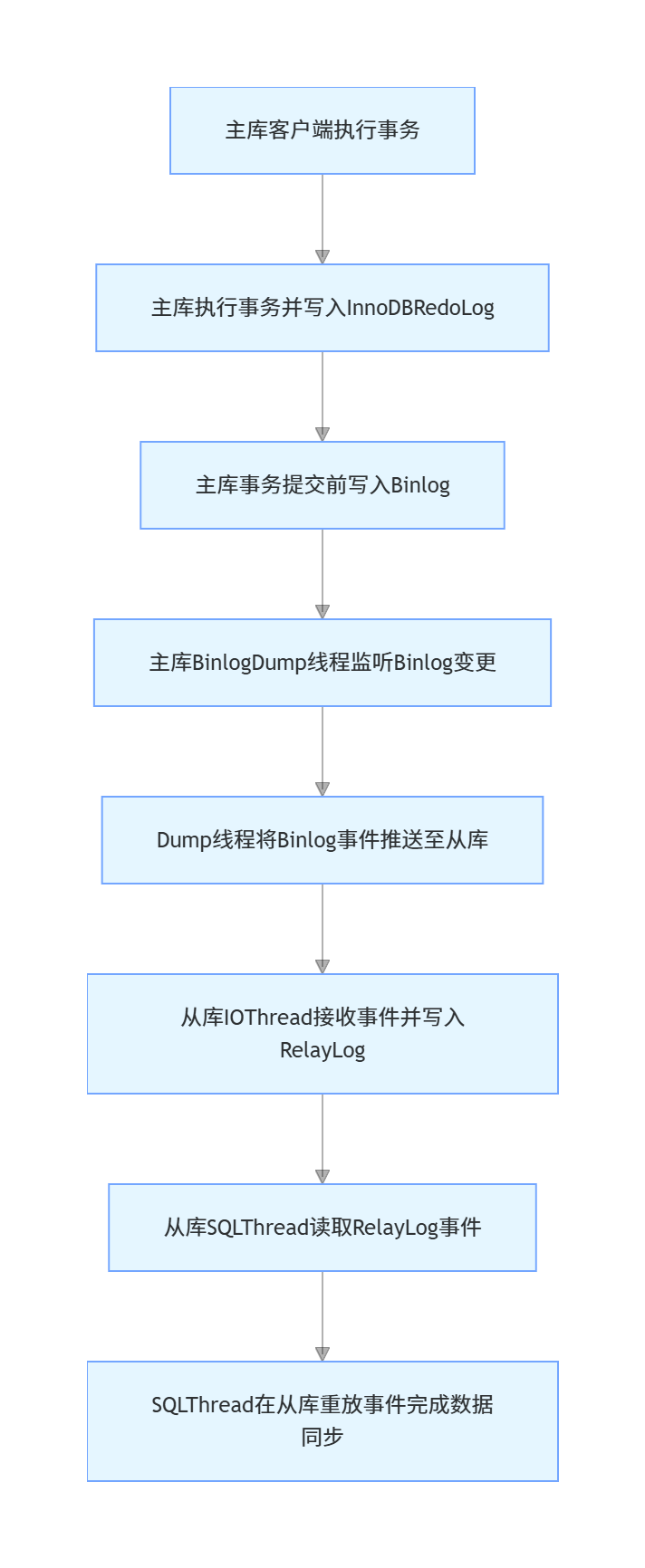

MySQL主从复制的核心是单主多从的流式日志同步架构,全链路由3个核心线程协同完成,分别是主库的Binlog Dump线程、从库的IO Thread与SQL Thread。

全链路同步流程

主从复制的完整数据流转链路共8个核心步骤,覆盖从主库事务提交到从库数据同步完成的全流程:

步骤拆解与底层细节

- 主库事务执行与日志写入:客户端在主库执行事务时,InnoDB先将修改写入redo log(prepare状态),事务提交前必须将事务对应的所有事件写入binlog,完成binlog刷盘后,才会将redo log标记为commit状态,最终向客户端返回事务提交成功。这就是MySQL的“两阶段提交”,保障binlog与redo log的事务一致性。

- Binlog Dump线程监听与推送:主库的Binlog Dump线程会持续监听binlog的写入事件,当有新的事务提交生成binlog时,Dump线程会读取binlog事件,主动推送给已建立连接的从库IO线程。若从库同步的binlog位置已被主库清理,会直接抛出同步错误。

- 从库IO线程接收与写入中继日志:从库IO线程与主库建立TCP长连接,接收Dump线程推送的binlog事件,将其原样写入本地的中继日志(Relay Log)。Relay Log的结构与binlog完全一致,仅作为从库的临时日志中转载体,重放完成后会被自动清理。

- 从库SQL线程重放日志:SQL线程持续监听Relay Log的新增事件,读取事件后在从库本地原样执行,完成数据的重放,最终实现主从库的数据一致。

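GTID (global transaction identifier)

GTIDs (Global Transaction Identifiers), introduced in MySQL 5.6, remove most of the pain of classic file-and-position replication and are the usual choice in production today.

- Format: source_id:transaction_id, where source_id is the server_uuid of the MySQL instance (globally unique) and transaction_id is a sequence number that increases on that instance

- Main benefit: every transaction on the source has a unique GTID and each replica records the GTIDs it has applied. During failover there is no need to look up a binlog file and position by hand; the replica works out which transactions it is missing, which makes switchover simpler and less error-prone

- Key property: a transaction with a given GTID is applied at most once on each server in the topology, which prevents the inconsistencies that re-applying a transaction would cause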
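The apply-once property can be pictured with a small Java sketch. This is only an illustration: `GtidApplier` and its methods are hypothetical names, and a real server tracks executed GTIDs as interval sets rather than a hash set of strings.

```java
import java.util.HashSet;
import java.util.Set;

/** Toy model of a replica that refuses to re-apply a GTID it has already executed. */
final class GtidApplier {
    // Executed GTIDs kept as "source_id:transaction_id" strings (a simplification).
    private final Set<String> executed = new HashSet<>();

    /** Returns true if the transaction was applied, false if it was skipped as a duplicate. */
    boolean apply(String sourceId, long transactionId) {
        String gtid = sourceId + ":" + transactionId;
        // Set.add returns false when the element is already present.
        return executed.add(gtid);
    }

    int executedCount() {
        return executed.size();
    }

    public static void main(String[] args) {
        GtidApplier replica = new GtidApplier();
        String uuid = "3e11fa47-71ca-11e1-9e33-c80aa9429562"; // made-up server_uuid
        System.out.println(replica.apply(uuid, 1)); // first delivery is applied
        System.out.println(replica.apply(uuid, 1)); // second delivery is skipped
    }
}
```

The same check is what lets a promoted replica accept a stream that overlaps with what it already has: duplicates are skipped instead of being executed twice.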

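3. The Three Replication Modes: Comparison and Configuration

MySQL offers three main replication modes: asynchronous replication, semisynchronous replication, and Group Replication (MGR). They differ in the consistency guarantee, in when a commit is acknowledged, and in performance cost, and each suits different workloads.

3.1 Asynchronous Replication

Asynchronous replication is MySQL's default mode and the simplest architecture.

How it works

Once the source has executed the transaction and flushed the binlog, it reports success to the client immediately. It does not wait for any replica's I/O thread to receive the events or send an ACK. Synchronization is fully asynchronous and the source is unaware of replica progress.

Characteristics

- Pros: very low overhead, almost no effect on source response time, simple architecture, low operational cost

- Cons: the weakest consistency guarantee. If the source crashes, committed transactions may not have reached any replica and are lost after failover; in extreme cases source and replica diverge

- Use cases: non-critical services, log storage, read-heavy workloads that can tolerate some data loss

Core configuration (MySQL 8.0)

Source my.cnf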

[mysqld]

server-id=1

log-bin=mysql-bin

binlog_format=ROW

gtid_mode=ON

enforce_gtid_consistency=ON

binlog_expire_logs_seconds=604800

Replica my.cnf

[mysqld]

server-id=2

relay_log=relay-bin

read_only=ON

super_read_only=ON

gtid_mode=ON

enforce_gtid_consistency=ON

log_slave_updates=OFF

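SQL to set up the replication channel on the replica (from MySQL 8.0.23 the preferred equivalents are CHANGE REPLICATION SOURCE TO and START REPLICA; the older statements below still work in 8.0)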

CHANGE MASTER TO

MASTER_HOST='192.168.1.100',

MASTER_PORT=3306,

MASTER_USER='repl',

MASTER_PASSWORD='Repl@123456',

MASTER_AUTO_POSITION=1;

START SLAVE;

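3.2 Semisynchronous Replication

Semisynchronous replication strengthens asynchronous replication. It was introduced in MySQL 5.5; MySQL 5.7 added the lossless variant (AFTER_SYNC), which is the default wait point in 5.7 and 8.0. The plugin itself still has to be installed and enabled.

How it works

After flushing the binlog, the source does not report success to the client right away. It waits until at least N replicas have received the events, written them to the relay log, and returned an ACK.

Lossless and classic semisync differ in when the source waits for the ACK:

- Lossless semisync (AFTER_SYNC, the default): the source waits for the replica ACK after the binlog is synced but before the storage engine commit. If the source crashes, every transaction already reported as successful to a client has reached at least one replica

- Classic semisync (AFTER_COMMIT): the source waits for the ACK after the engine commit. If the source crashes after committing but before the ACK arrives, a transaction that was already visible on the source may be missing on the replica, so data can be lost on failover

Characteristics

- Pros: much stronger protection than asynchronous replication against losing committed transactions when the source fails; the cost is modest, roughly one extra network round trip (RTT) per commit

- Cons: on network jitter or replica failure, commits block until the timeout expires and the source then falls back to asynchronous replication, at which point the guarantee no longer holds; split-brain is still possible

- Use cases: core transaction systems, payments, order systems, and other workloads with strict consistency requirements

Core configuration (MySQL 8.0)

Install and configure the plugin on the source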

INSTALL PLUGIN rpl_semi_sync_master SONAME 'semisync_master.so';

SET GLOBAL rpl_semi_sync_master_enabled = 1;

SET GLOBAL rpl_semi_sync_master_wait_for_slave_count = 1;

SET GLOBAL rpl_semi_sync_master_wait_point = 'AFTER_SYNC';

SET GLOBAL rpl_semi_sync_master_timeout = 1000;

Install and configure the plugin on the replica

INSTALL PLUGIN rpl_semi_sync_slave SONAME 'semisync_slave.so';

SET GLOBAL rpl_semi_sync_slave_enabled = 1;

STOP SLAVE IO_THREAD;

START SLAVE IO_THREAD;

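Key parameters:

- rpl_semi_sync_master_wait_for_slave_count: number of replica ACKs to wait for; 1 is the usual production setting

- rpl_semi_sync_master_timeout: timeout in milliseconds; once it expires the source falls back to asynchronous replication so that it does not block indefinitely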
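How the ACK count and the timeout interact can be sketched in Java. This is a toy model, not MySQL code: `SemiSyncWait`, `awaitAcks`, and the `Outcome` enum are hypothetical names, and the latch simply stands in for "N replicas have acknowledged".

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;

/** Toy model of a source waiting for replica ACKs with a timeout fallback. */
final class SemiSyncWait {
    enum Outcome { SEMI_SYNC_ACKED, DEGRADED_TO_ASYNC }

    /**
     * Blocks until the latch reaches zero (all required ACKs arrived) or the timeout elapses.
     * The latch count plays the role of rpl_semi_sync_master_wait_for_slave_count and
     * timeoutMillis the role of rpl_semi_sync_master_timeout.
     */
    static Outcome awaitAcks(CountDownLatch acks, long timeoutMillis) {
        try {
            boolean acked = acks.await(timeoutMillis, TimeUnit.MILLISECONDS);
            return acked ? Outcome.SEMI_SYNC_ACKED : Outcome.DEGRADED_TO_ASYNC;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for replica ACK", e);
        }
    }

    public static void main(String[] args) {
        // One replica acknowledges from another thread: the commit returns as semisync.
        CountDownLatch acks = new CountDownLatch(1);
        new Thread(acks::countDown).start();
        System.out.println(awaitAcks(acks, 1000));

        // Nobody acknowledges: the wait times out and the commit proceeds asynchronously.
        System.out.println(awaitAcks(new CountDownLatch(1), 50));
    }
}
```

The second call shows why the timeout is a trade-off: a short value limits how long commits can stall, but every fallback is a window in which the source runs without the semisync guarantee.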

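3.3 Group Replication (MGR)

Group Replication, introduced in MySQL 5.7.17, is a distributed replication scheme built on a Paxos-based consensus protocol. It is designed to address the split-brain and inconsistency problems of traditional source-replica setups.

How it works

Several MySQL nodes form a replication group and agree on transactions through the consensus protocol: a transaction can commit only after a majority of the group (N/2+1 nodes) has acknowledged it. The group runs either in single-primary mode (one writable node, the rest read-only) or in multi-primary mode (every node writable), and it includes failure detection, automatic primary election, split-brain protection, and data consistency checks.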
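The majority rule is simple arithmetic, shown here as a short Java sketch (the class and method names are illustrative only). It makes visible why groups are normally sized with an odd number of nodes: a fourth node raises the majority without raising the number of failures the group survives.

```java
/** Majority arithmetic for a replication group of a given size. */
final class Quorum {
    /** Number of nodes that must acknowledge a transaction: N/2 + 1 with integer division. */
    static int majority(int groupSize) {
        if (groupSize < 1) {
            throw new IllegalArgumentException("group size must be at least 1");
        }
        return groupSize / 2 + 1;
    }

    /** Number of nodes that can fail while a majority is still reachable. */
    static int toleratedFailures(int groupSize) {
        return groupSize - majority(groupSize);
    }

    public static void main(String[] args) {
        for (int n : new int[] {3, 4, 5, 7}) {
            System.out.println(n + " nodes: majority=" + majority(n)
                    + ", tolerated failures=" + toleratedFailures(n));
        }
    }
}
```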

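Characteristics

- Pros: the strongest consistency of the three modes, since the consensus protocol protects acknowledged transactions; automatic failover without manual intervention; built-in split-brain protection; multi-primary writes for distributed architectures

- Cons: higher overhead than semisynchronous replication; very sensitive to network latency, so cross-region deployments slow down noticeably; more complex to operate; tables are required to have a primary key

- Use cases: financial-grade core systems, workloads that need distributed strong consistency, cross-datacenter high availability, services with very strict RTO/RPO targets

Comparison of the three modes

| Dimension | Asynchronous | Lossless semisynchronous | Group Replication (MGR) |
|---|---|---|---|
| Consistency guarantee | Weak; data is easily lost when the source crashes | Strong; acknowledged transactions are not lost while semisync is in effect | Very strong; backed by distributed consensus |
| Commit logic | Returns as soon as the source has flushed the binlog | Returns after at least one replica ACK | Returns after a majority of nodes agree |
| Performance cost | Very low | Low; one extra network RTT | Medium to high; consensus overhead |
| Failover | Manual, complex | Needs a third-party tool (MHA, Keepalived) | Built in and automatic |
| Split-brain protection | None; must be avoided by procedure | None; relies on third-party tooling | Built in through majority quorum |
| Multi-primary writes | No | No | Yes |
| Operational cost | Very low | Low | Medium to high |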

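4. Diagnosing Replication Lag End to End

Replication lag is the most common production problem: the replica replays changes more slowly than the source writes them, so its data falls behind. This undermines consistency under read/write splitting and lengthens the RTO of a failover.

4.1 Monitoring and locating lag accurately

Many developers rely on Seconds_Behind_Master alone. The value has clear limitations and should be treated as a hint, not as the only criterion.

Core monitoring methods

- Basic status

SHOW SLAVE STATUS\G

Key fields:

- Slave_IO_Running / Slave_SQL_Running: both must be Yes; otherwise replication has stopped

- Master_Log_File / Read_Master_Log_Pos: the source binlog position the I/O thread has read up to

- Relay_Master_Log_File / Exec_Master_Log_Pos: the source binlog position the SQL thread has applied up to

- Seconds_Behind_Master: in seconds, how far the timestamp of the event being applied trails the current time. Long transactions, clock differences between hosts, and stalled replication all make it misleading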

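- Locating the lagging stage

  - If Read_Master_Log_Pos is far behind the source's current binlog position, the lag is in the I/O thread: log transfer between source and replica is the bottleneck

  - If Read_Master_Log_Pos matches the source but Exec_Master_Log_Pos is far behind Read_Master_Log_Pos, the lag is in the SQL thread: replay on the replica is the bottleneck. By rough estimate, about 90% of replication lag falls into this category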
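The two rules for locating the lagging stage can be written as a small Java classifier. It is a sketch under stated assumptions: `LagLocator` and `BinlogPos` are hypothetical names, the three positions would come from SHOW MASTER STATUS on the source and SHOW SLAVE STATUS on the replica, binlog file names are assumed to sort lexicographically (true for the usual fixed-width numeric suffix), and any gap counts as lag where a real check would apply a threshold.

```java
/** Classifies replication lag as I/O-thread or SQL-thread lag from three binlog positions. */
final class LagLocator {
    /** A binlog coordinate: file name plus byte offset. */
    record BinlogPos(String file, long pos) implements Comparable<BinlogPos> {
        @Override
        public int compareTo(BinlogPos other) {
            int byFile = file.compareTo(other.file);
            return byFile != 0 ? byFile : Long.compare(pos, other.pos);
        }
    }

    enum Stage { IO_THREAD, SQL_THREAD, IN_SYNC }

    /**
     * sourceCurrent: current position on the source.
     * read:          Master_Log_File / Read_Master_Log_Pos on the replica.
     * executed:      Relay_Master_Log_File / Exec_Master_Log_Pos on the replica.
     */
    static Stage locate(BinlogPos sourceCurrent, BinlogPos read, BinlogPos executed) {
        if (read.compareTo(sourceCurrent) < 0) {
            return Stage.IO_THREAD;   // events have not reached the replica yet
        }
        if (executed.compareTo(read) < 0) {
            return Stage.SQL_THREAD;  // events arrived but are not applied yet
        }
        return Stage.IN_SYNC;
    }

    public static void main(String[] args) {
        BinlogPos source = new BinlogPos("mysql-bin.000012", 9000);
        BinlogPos read = new BinlogPos("mysql-bin.000012", 9000);
        BinlogPos executed = new BinlogPos("mysql-bin.000011", 400);
        System.out.println(locate(source, read, executed)); // SQL_THREAD
    }
}
```

When both stages are behind, the sketch reports the I/O thread first, since replay cannot catch up until the events have arrived.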

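- Precise lag measurement (GTID mode)

-- On the source: GTIDs executed so far

SELECT @@global.gtid_executed;

-- On the replica: GTIDs already applied

SELECT @@global.gtid_executed;

The difference between the two GTID sets gives the number of transactions the replica is behind, without the misreadings that Seconds_Behind_Master is prone to.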
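Turning two gtid_executed strings into a transaction count can be sketched in Java as below. The names `GtidGap`, `count`, and `missingOnReplica` are hypothetical, and the sketch assumes the replica's set is a subset of the source's set (the normal case) and that GTIDs use the plain `uuid:interval[:interval...]` form. On the server side, the built-in GTID_SUBTRACT() function returns the actual set difference.

```java
/** Counts transactions in GTID sets such as "uuid:1-100:105,uuid2:7-9". */
final class GtidGap {
    /** Sums the lengths of all intervals in a GTID set string. */
    static long count(String gtidSet) {
        // The server may wrap long sets across lines, so drop all whitespace first.
        String cleaned = gtidSet.replaceAll("\\s", "");
        if (cleaned.isEmpty()) {
            return 0;
        }
        long total = 0;
        for (String perSource : cleaned.split(",")) {
            String[] parts = perSource.split(":");
            // parts[0] is the source UUID; the rest are "n" or "start-end" intervals.
            for (int i = 1; i < parts.length; i++) {
                String[] bounds = parts[i].split("-");
                long start = Long.parseLong(bounds[0]);
                long end = bounds.length > 1 ? Long.parseLong(bounds[1]) : start;
                total += end - start + 1;
            }
        }
        return total;
    }

    /** Transactions executed on the source but not yet on the replica (subset assumption). */
    static long missingOnReplica(String sourceSet, String replicaSet) {
        return count(sourceSet) - count(replicaSet);
    }

    public static void main(String[] args) {
        String uuid = "3e11fa47-71ca-11e1-9e33-c80aa9429562"; // made-up server_uuid
        System.out.println(missingOnReplica(uuid + ":1-100", uuid + ":1-90")); // 10
    }
}
```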

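- Per-worker detail for parallel replication (MySQL 8.0)

SELECT * FROM performance_schema.replication_applier_status_by_worker;

This shows, for each parallel applier worker, its state, the transaction it is applying, and the last transaction it applied with timestamps, which helps identify the worker that is stuck or slow.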

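4.2 Root-cause workflow

Step 1: I/O thread lag

I/O thread lag means the source's binlog is not reaching the replica fast enough. Areas to check:

- Network

  - Check the bandwidth between source and replica for saturation, especially across datacenters

  - Measure the RTT between source and replica. On a healthy internal network it should be under 1 ms; a high RTT directly slows semisynchronous replication and increases lag

  - Check firewalls and security groups for rate limiting or packet loss, and check whether TCP parameters are tuned

- Source

  - Check the binlog flush policy: sync_binlog=1 guarantees binlog durability but adds flush I/O; non-critical workloads can relax it

  - Check whether binlog compression is enabled; without it, large binlogs take longer to transfer

  - Check the Dump threads for problems, for example many replicas connected to one source putting pressure on it

- Replica

  - Check the replica's disk I/O for an IOPS bottleneck when writing the relay log; spinning disks are especially prone to this

  - Check whether the replica writes too many logs (binlog, slow query log, audit log) and exhausts its I/O capacity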

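Step 2: SQL thread lag

SQL thread lag is where most problems occur: the replica replays the binlog more slowly than the source writes it. Causes, ordered from most to least likely:

- Tables without a primary key or unique key. This is the most frequent cause in production. With ROW format the binlog records row changes; when the table has no primary key or unique key, the replica cannot locate the rows of an UPDATE/DELETE through an index and has to scan the table and compare all columns row by row. The larger the table, the slower the replay. Diagnostic SQL (lists InnoDB tables without a primary key; the subquery matches on schema and table name so that same-named tables in other schemas do not hide a hit):

SELECT table_schema,table_name,engine FROM information_schema.tables

WHERE table_schema NOT IN ('sys','mysql','information_schema','performance_schema')

AND engine='InnoDB' AND (table_schema,table_name) NOT IN (SELECT table_schema,table_name FROM information_schema.key_column_usage WHERE constraint_name='PRIMARY');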

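- Large transactions. A large transaction on the source (bulk deletes or updates of millions of rows, DDL on a big table) produces a large number of binlog events. The source can run many transactions concurrently, but with classic single-threaded replication the replica replays the large transaction serially and cannot process anything behind it, so lag keeps growing. Diagnostic SQL:

-- On the source: transactions running for more than 10 seconds (innodb_trx has no user/host/db columns, so join the processlist)

SELECT t.trx_id,t.trx_started,t.trx_rows_modified,p.user,p.host,p.db FROM information_schema.innodb_trx t

JOIN information_schema.processlist p ON p.id=t.trx_mysql_thread_id WHERE TIMESTAMPDIFF(SECOND,t.trx_started,NOW()) > 10;

-- On the replica: statements currently being replayed

SELECT * FROM performance_schema.events_statements_current WHERE thread_id IN (SELECT thread_id FROM performance_schema.replication_applier_status_by_worker);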

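- Single-threaded replay. Before MySQL 5.6 the replica applied changes with a single SQL thread, so concurrent writes on the source were replayed serially and lag was inevitable under heavy write load. Even with parallel replication enabled, a poor configuration can cancel out the benefit. Diagnostic SQL:

-- Parallel replication settings on the replica

SHOW VARIABLES LIKE 'slave_parallel%';

SHOW VARIABLES LIKE 'binlog_transaction_dependency_tracking';

- Under-provisioned replica hardware. If the replica's CPU, memory, or IOPS are below the source's, the source's write volume can exceed what the replica is able to replay. Typical cases: SSD on the source and spinning disks on the replica; 32 cores on the source and 8 on the replica.

- Writes and heavy queries on the replica

  - super_read_only=ON is not set, and a person or application writes to the replica. Source and replica diverge, row matching fails during replay, replication stops, and lag accumulates

  - The replica runs many slow queries, reports, or offline analysis jobs that consume CPU, I/O, and memory, leaving the SQL thread short of resources for replay

- DDL on large tables. A DDL statement on a table with tens of millions of rows (adding an index, changing a column) can take tens of minutes on the source. The replica can only start the same DDL after the source has finished it, runs it serially, and cannot replay later transactions in the meantime, so lag spikes.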

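5. Reducing Replication Lag

Reducing lag comes down to three things: shrinking the binlog the source writes, increasing replay parallelism on the replica, and lowering the resource cost of replay. It takes work on four fronts: architecture, source, replica, and SQL.

5.1 Architecture and replication mode

- Enable GTID mode and parallel replication. WRITESET-based parallel replication in MySQL 8.0 raises replay parallelism considerably and is the main remedy for the single-threaded bottleneck. Core configuration (the applier settings belong on the replica; the two WRITESET settings take effect on the source, where the binlog is written):

[mysqld]

# Replica: parallel applier

slave_parallel_type=LOGICAL_CLOCK

slave_parallel_workers=8

slave_preserve_commit_order=ON

# Source: how transaction dependencies are recorded in the binlog

binlog_transaction_dependency_tracking=WRITESET

transaction_write_set_extraction=XXHASH64

Notes:

- `slave_parallel_workers`: number of parallel applier workers; a reasonable starting point is 50%-70% of the replica's CPU cores, capped at 32

- `binlog_transaction_dependency_tracking=WRITESET`: enables parallelism based on the rows each transaction modifies; transactions whose modified rows do not overlap can be replayed in parallel even if they were not committed in the same group

- `slave_preserve_commit_order=ON`: makes the replica commit transactions in the same order as the source, which avoids inconsistencies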
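The idea behind WRITESET tracking can be illustrated with a short Java sketch. It is a simplification with hypothetical names (`WritesetCheck`, `canApplyInParallel`): the server works with hashes of the modified rows' keys, while the sketch uses readable strings for the same role.

```java
import java.util.Collections;
import java.util.Set;

/** Toy check: two transactions can be replayed in parallel when their write sets do not overlap. */
final class WritesetCheck {
    /**
     * Each write set holds one entry per modified row, for example "table:pk=value".
     * Disjoint sets mean the transactions touched no common row.
     */
    static boolean canApplyInParallel(Set<String> writesetA, Set<String> writesetB) {
        return Collections.disjoint(writesetA, writesetB);
    }

    public static void main(String[] args) {
        Set<String> t1 = Set.of("order_info:pk=1", "order_info:pk=2");
        Set<String> t2 = Set.of("order_info:pk=3");
        Set<String> t3 = Set.of("order_info:pk=2");
        System.out.println(canApplyInParallel(t1, t2)); // true: no shared row
        System.out.println(canApplyInParallel(t1, t3)); // false: both modify pk=2
    }
}
```

This also shows why tables without a primary key limit parallelism: with no key to identify rows, there is nothing reliable to put in the write set.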

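- Topology

  - One source, several replicas: spread reads across multiple replicas so that read load on any one replica does not slow replay

  - Cascading replication: the source replicates to a single intermediate replica, which in turn feeds the others. This reduces Dump thread load and bandwidth on the source and suits topologies with many replicas

  - Isolation of offline work: put reporting, offline analysis, and backups on a dedicated offline replica, physically separate from the replicas that serve application reads

- Choice of replication mode

  - Core services should prefer lossless semisynchronous replication, which protects consistency at a manageable cost

  - Services with very strict RTO/RPO targets should use Group Replication, which provides automatic failover and split-brain protection

5.2 Source-side optimization

- Enforce schema standards

  - Every InnoDB table must have an explicit primary key, preferably an auto-increment key or an ordered Snowflake-style ID; random UUID keys cause page splits and hurt performance

  - Every DML statement should use an index, which avoids full scans and wide locking and reduces execution time

- Split large transactions. Do not run large transactions on the source; split every bulk operation into small transactions of no more than about 1000 rows each. Example: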

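-- Before: one huge transaction deletes millions of rows and causes severe replication lag
DELETE FROM order_info WHERE create_time < '2024-01-01';
-- After: batches of 1000 rows in a stored procedure (WHILE is only valid in a stored program; purge_old_orders is an illustrative name)
DELIMITER $$
CREATE PROCEDURE purge_old_orders()
BEGIN
  DECLARE affected INT DEFAULT 1;
  WHILE affected > 0 DO
    DELETE FROM order_info WHERE create_time < '2024-01-01' LIMIT 1000;
    SET affected = ROW_COUNT(); -- must be read right after the DELETE
    DO SLEEP(0.1);              -- brief pause so replicas can keep up
  END WHILE;
END$$
DELIMITER ;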
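When the batching is driven from application code instead of a stored procedure, the loop has the same shape. The Java sketch below keeps the database out of the picture so that it runs on its own: `ChunkedDelete` and `run` are hypothetical names, and `deleteBatch` stands in for executing one `DELETE ... LIMIT n` statement with autocommit on and returning the affected row count.

```java
import java.util.function.IntSupplier;

/** Toy driver for deleting a large range in small, separately committed batches. */
final class ChunkedDelete {
    /**
     * Calls deleteBatch until it reports zero affected rows and returns the total deleted.
     * pauseMillis is the pause between batches that gives replicas time to catch up.
     */
    static long run(IntSupplier deleteBatch, long pauseMillis) {
        long total = 0;
        int affected;
        while ((affected = deleteBatch.getAsInt()) > 0) {
            total += affected;
            pause(pauseMillis);
        }
        return total;
    }

    private static void pause(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while pausing between batches", e);
        }
    }

    public static void main(String[] args) {
        // Fake table with 2300 matching rows, deleted at most 1000 at a time.
        int[] remaining = {2300};
        long deleted = run(() -> {
            int n = Math.min(1000, remaining[0]);
            remaining[0] -= n;
            return n;
        }, 0);
        System.out.println(deleted); // 2300
    }
}
```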

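- DDL

  - Prefer MySQL 8.0 INSTANT DDL where it applies, for example adding a column with ALGORITHM=INSTANT. It is a metadata-only change that completes almost immediately on both source and replica. Extending a VARCHAR within the same length-byte range is also metadata-only, although it runs as an in-place rather than an instant operation

  - Run large-table DDL off-peak and avoid table-locking DDL; gh-ost or pt-online-schema-change can perform the change online

  - Do not run several large-table DDLs back to back on the source; run them one at a time and confirm the replicas have caught up before starting the next

- binlog

  - Always use ROW format to protect consistency

  - Enable binlog transaction compression (available from MySQL 8.0.20) to reduce binlog size and network traffic: binlog_transaction_compression=ON

  - Set a sensible binlog retention period so that binlog files do not pile up and add disk I/O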

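5.3 Replica-side optimization

- Hardware

  - The replica's CPU, memory, and IOPS should be no lower than the source's; never pair an SSD source with a spinning-disk replica

  - Set innodb_buffer_pool_size on the replica to 50%-70% of physical memory to cache as much data as possible and reduce disk I/O

- Configuration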

[mysqld]

read_only=ON

super_read_only=ON

innodb_flush_log_at_trx_commit=2

sync_binlog=1000

innodb_flush_method=O_DIRECT

log_slave_updates=OFF

slow_query_log=OFF

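Notes:

- `super_read_only=ON`: blocks writes on the replica even from users with SUPER privileges, which prevents divergence caused by manual writes

- `innodb_flush_log_at_trx_commit=2`: the replica does not need the source's "double 1" durability settings (innodb_flush_log_at_trx_commit=1, sync_binlog=1). Relaxing them cuts flush I/O and speeds up replay, at the cost of possibly losing the most recent transactions if the replica host crashes

- `log_slave_updates=OFF`: stops the replica from writing replicated changes to its own binlog (not applicable to cascading replication), which reduces I/O

- Resource isolation on the replica

  - Do not run large queries, reports, or backups on replicas that serve application reads; use a dedicated offline replica

  - Rate-limit queries on the replica so that a burst of reads cannot exhaust its resources and starve the SQL thread

5.4 SQL and application-level optimization

- Avoid no-op writes: do not repeatedly issue updates that set the same values, such as UPDATE user SET name='test' WHERE id=1. With ROW format an update that changes nothing writes no row event, but it still takes locks and costs execution time, and with STATEMENT format the statement is still logged

- Update only the columns that change and avoid rewriting every column. The reduction in ROW-format binlog size applies when binlog_row_image is MINIMAL or NOBLOB; with the default FULL, complete row images are logged regardless

- Application-level consistency: under read/write splitting, route reads that immediately follow a critical write to the source so that replication lag does not surface as stale data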
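One common way to implement "read from the source right after a write" is a short sticky window per user or session. The Java sketch below is a minimal illustration with hypothetical names (`StickyPrimaryRouter`, `recordWrite`, `routeRead`); the window length is an assumption that would be chosen from the lag actually observed, and time is passed in explicitly so that the behaviour is easy to test.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Routes reads to the master for a short window after the same key has written. */
final class StickyPrimaryRouter {
    enum Target { MASTER, SLAVE }

    private final long stickyMillis;
    // Last write time per key (for example a user ID or session ID).
    private final Map<String, Long> lastWriteAt = new ConcurrentHashMap<>();

    StickyPrimaryRouter(long stickyMillis) {
        this.stickyMillis = stickyMillis;
    }

    /** Call after a successful write on the master. */
    void recordWrite(String key, long nowMillis) {
        lastWriteAt.put(key, nowMillis);
    }

    /** Reads within the sticky window go to the master; everything else goes to a replica. */
    Target routeRead(String key, long nowMillis) {
        Long last = lastWriteAt.get(key);
        boolean recentWrite = last != null && nowMillis - last < stickyMillis;
        return recentWrite ? Target.MASTER : Target.SLAVE;
    }

    public static void main(String[] args) {
        StickyPrimaryRouter router = new StickyPrimaryRouter(1000);
        router.recordWrite("user-1", 10_000);
        System.out.println(router.routeRead("user-1", 10_500)); // MASTER: 500 ms after the write
        System.out.println(router.routeRead("user-1", 11_000)); // SLAVE: the window has passed
        System.out.println(router.routeRead("user-2", 10_500)); // SLAVE: this key never wrote
    }
}
```

A production version would also evict old entries from the map and, in a multi-instance service, keep the timestamps somewhere shared such as the session.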

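6. Case Studies

6.1 Reproducing and fixing lag caused by a table without a primary key

Reproducing the problem

- Create a table without a primary key and load test data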

CREATE TABLE user_no_pk (
  name VARCHAR(50) NOT NULL,
  age INT NOT NULL,
  create_time DATETIME NOT NULL DEFAULT CURRENT_TIMESTAMP
) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4;

-- Insert 1,000,000 test rows; the recursive CTE needs a recursion limit above the default of 1000
SET SESSION cte_max_recursion_depth = 1000000;
INSERT INTO user_no_pk (name, age)
WITH RECURSIVE t AS (
  SELECT 1 AS n UNION ALL SELECT n + 1 FROM t WHERE n < 1000000
)
SELECT CONCAT('user', n), n % 100 FROM t;

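- Run a single UPDATE on the source

UPDATE user_no_pk SET age=20 WHERE name='user999999';

In the author's test the statement took 0.01 s on the source, while replaying it on the replica took more than 10 s and lag spiked. The cause is that, with ROW format and no primary key, the replica has to scan the 1,000,000 rows and compare columns to find the row to update; the cost grows with every additional row a transaction changes.

Fix

Add an explicit primary key. After the change, replay on the replica dropped to 0.01 s and the lag disappeared:

ALTER TABLE user_no_pk ADD COLUMN id BIGINT PRIMARY KEY AUTO_INCREMENT FIRST;

6.2 Read/write splitting with MyBatis-Plus

Maven dependencies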

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<parent>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-parent</artifactId>

<version>3.2.5</version>

<relativePath/>

</parent>

<groupId>com.jam.demo</groupId>

<artifactId>mysql-rw-split-demo</artifactId>

<version>0.0.1-SNAPSHOT</version>

<name>mysql-rw-split-demo</name>

<properties>

<java.version>17</java.version>

<mybatis-plus.version>3.5.7</mybatis-plus.version>

<guava.version>33.1.0-jre</guava.version>

<fastjson2.version>2.0.49</fastjson2.version>

<springdoc.version>2.5.0</springdoc.version>

</properties>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-jdbc</artifactId>

</dependency>

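<!-- Needed by the @Aspect-based data source switch further down -->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-aop</artifactId>

</dependency>

<!-- Needed by DruidDataSource in DataSourceConfig; the version is an assumption, use the one your project standardizes on -->

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>druid</artifactId>

<version>1.2.20</version>

</dependency>

<!-- Spring Boot 3.x uses the boot3 starter of MyBatis-Plus -->

<dependency>

<groupId>com.baomidou</groupId>

<artifactId>mybatis-plus-spring-boot3-starter</artifactId>

<version>${mybatis-plus.version}</version>

</dependency>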

<dependency>

<groupId>com.mysql</groupId>

<artifactId>mysql-connector-j</artifactId>

<scope>runtime</scope>

</dependency>

<dependency>

<groupId>org.springdoc</groupId>

<artifactId>springdoc-openapi-starter-webmvc-ui</artifactId>

<version>${springdoc.version}</version>

</dependency>

<dependency>

<groupId>org.projectlombok</groupId>

<artifactId>lombok</artifactId>

<version>1.18.32</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>com.google.guava</groupId>

<artifactId>guava</artifactId>

<version>${guava.version}</version>

</dependency>

<dependency>

<groupId>com.alibaba.fastjson2</groupId>

<artifactId>fastjson2</artifactId>

<version>${fastjson2.version}</version>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

<configuration>

<excludes>

<exclude>

<groupId>org.projectlombok</groupId>

<artifactId>lombok</artifactId>

</exclude>

</excludes>

</configuration>

</plugin>

</plugins>

</build>

</project>

Data source type enum

package com.jam.demo.enums;

import lombok.Getter;

/** Logical data source names used as routing keys. */
@Getter
public enum DataSourceType {
    MASTER("master", "source: writes"),
    SLAVE("slave", "replica: reads");

    private final String code;
    private final String desc;

    DataSourceType(String code, String desc) {
        this.code = code;
        this.desc = desc;
    }
}

Dynamic data source context holder

package com.jam.demo.config;

import com.jam.demo.enums.DataSourceType;
import org.springframework.util.ObjectUtils;

/** Keeps the data source chosen for the current thread. */
public class DynamicDataSourceContextHolder {

    private static final ThreadLocal<DataSourceType> CONTEXT_HOLDER = new ThreadLocal<>();

    private DynamicDataSourceContextHolder() {
    }

    /**
     * Sets the data source type for the current thread.
     * @param dataSourceType data source type
     */
    public static void setDataSourceType(DataSourceType dataSourceType) {
        if (ObjectUtils.isEmpty(dataSourceType)) {
            throw new IllegalArgumentException("Data source type must not be null");
        }
        CONTEXT_HOLDER.set(dataSourceType);
    }

    /**
     * Returns the data source type for the current thread, defaulting to MASTER.
     * @return data source type
     */
    public static DataSourceType getDataSourceType() {
        return ObjectUtils.isEmpty(CONTEXT_HOLDER.get()) ? DataSourceType.MASTER : CONTEXT_HOLDER.get();
    }

    /**
     * Clears the data source type so that pooled threads do not leak it.
     */
    public static void clearDataSourceType() {
        CONTEXT_HOLDER.remove();
    }
}

Dynamic routing data source

package com.jam.demo.config;

import org.springframework.jdbc.datasource.lookup.AbstractRoutingDataSource;

public class DynamicRoutingDataSource extends AbstractRoutingDataSource {

@Override

protected Object determineCurrentLookupKey() {

return DynamicDataSourceContextHolder.getDataSourceType().getCode();

}

}

Data source configuration class

package com.jam.demo.config;

import com.alibaba.druid.pool.DruidDataSource;

import com.baomidou.mybatisplus.core.MybatisConfiguration;

import com.baomidou.mybatisplus.core.config.GlobalConfig;

import com.baomidou.mybatisplus.extension.spring.MybatisSqlSessionFactoryBean;

import com.jam.demo.enums.DataSourceType;

import org.springframework.boot.context.properties.ConfigurationProperties;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.context.annotation.Primary;

import org.springframework.core.io.support.PathMatchingResourcePatternResolver;

import org.springframework.jdbc.datasource.DataSourceTransactionManager;

import org.springframework.transaction.PlatformTransactionManager;

import org.springframework.transaction.support.TransactionTemplate;

import javax.sql.DataSource;

import java.util.HashMap;

import java.util.Map;

@Configuration

public class DataSourceConfig {

@Bean

@ConfigurationProperties(prefix = "spring.datasource.master")

public DataSource masterDataSource() {

return new DruidDataSource();

}

@Bean

@ConfigurationProperties(prefix = "spring.datasource.slave")

public DataSource slaveDataSource() {

return new DruidDataSource();

}

@Bean

@Primary

public DataSource dynamicDataSource() {

Map<Object, Object> targetDataSources = new HashMap<>(2);

targetDataSources.put(DataSourceType.MASTER.getCode(), masterDataSource());

targetDataSources.put(DataSourceType.SLAVE.getCode(), slaveDataSource());

DynamicRoutingDataSource dynamicDataSource = new DynamicRoutingDataSource();

dynamicDataSource.setTargetDataSources(targetDataSources);

dynamicDataSource.setDefaultTargetDataSource(masterDataSource());

return dynamicDataSource;

}

@Bean

public MybatisSqlSessionFactoryBean sqlSessionFactory() throws Exception {

MybatisSqlSessionFactoryBean sessionFactory = new MybatisSqlSessionFactoryBean();

sessionFactory.setDataSource(dynamicDataSource());

sessionFactory.setMapperLocations(new PathMatchingResourcePatternResolver().getResources("classpath:mapper/*.xml"));

MybatisConfiguration configuration = new MybatisConfiguration();

configuration.setMapUnderscoreToCamelCase(true);

configuration.setCacheEnabled(false);

sessionFactory.setConfiguration(configuration);

GlobalConfig globalConfig = new GlobalConfig();

globalConfig.setBanner(false);

sessionFactory.setGlobalConfig(globalConfig);

return sessionFactory;

}

@Bean

public PlatformTransactionManager transactionManager() {

return new DataSourceTransactionManager(dynamicDataSource());

}

@Bean

public TransactionTemplate transactionTemplate(PlatformTransactionManager transactionManager) {

return new TransactionTemplate(transactionManager);

}

}

Custom data source switch annotation

package com.jam.demo.annotation;

import com.jam.demo.enums.DataSourceType;

import java.lang.annotation.*;

@Target({ElementType.METHOD, ElementType.TYPE})

@Retention(RetentionPolicy.RUNTIME)

@Documented

public @interface DataSourceSwitch {

DataSourceType value() default DataSourceType.MASTER;

}

AOP aspect for data source switching

package com.jam.demo.aspect;

import com.jam.demo.annotation.DataSourceSwitch;

import com.jam.demo.config.DynamicDataSourceContextHolder;

import lombok.extern.slf4j.Slf4j;

import org.aspectj.lang.ProceedingJoinPoint;

import org.aspectj.lang.annotation.Around;

import org.aspectj.lang.annotation.Aspect;

import org.aspectj.lang.annotation.Pointcut;

import org.aspectj.lang.reflect.MethodSignature;

import org.springframework.core.annotation.Order;

import org.springframework.stereotype.Component;

import java.lang.reflect.Method;

@Slf4j

@Aspect

@Component

@Order(1)

public class DataSourceSwitchAspect {

@Pointcut("@annotation(com.jam.demo.annotation.DataSourceSwitch)")

public void dataSourceSwitchPointCut() {

}

@Around("dataSourceSwitchPointCut()")

public Object around(ProceedingJoinPoint joinPoint) throws Throwable {

MethodSignature signature = (MethodSignature) joinPoint.getSignature();

Method method = signature.getMethod();

DataSourceSwitch annotation = method.getAnnotation(DataSourceSwitch.class);

if (annotation != null) {

DynamicDataSourceContextHolder.setDataSourceType(annotation.value());

}

try {

return joinPoint.proceed();

} finally {

DynamicDataSourceContextHolder.clearDataSourceType();

}

}

}

Entity class

package com.jam.demo.entity;

import com.baomidou.mybatisplus.annotation.IdType;
import com.baomidou.mybatisplus.annotation.TableId;
import com.baomidou.mybatisplus.annotation.TableName;
import io.swagger.v3.oas.annotations.media.Schema;
import lombok.Data;

import java.time.LocalDateTime;

@Data
@TableName("user_info")
@Schema(description = "User info entity")
public class UserInfo {

    @TableId(type = IdType.AUTO)
    @Schema(description = "User ID", example = "1")
    private Long id;

    @Schema(description = "Username", example = "testUser")
    private String username;

    @Schema(description = "Age", example = "20")
    private Integer age;

    @Schema(description = "Creation time")
    private LocalDateTime createTime;
}

Mapper interface

package com.jam.demo.mapper;

import com.baomidou.mybatisplus.core.mapper.BaseMapper;

import com.jam.demo.entity.UserInfo;

import org.apache.ibatis.annotations.Mapper;

@Mapper

public interface UserInfoMapper extends BaseMapper<UserInfo> {

}

Service interface and implementation

package com.jam.demo.service;

import com.baomidou.mybatisplus.extension.service.IService;

import com.jam.demo.entity.UserInfo;

public interface UserInfoService extends IService<UserInfo> {

boolean createUser(UserInfo userInfo);

UserInfo getUserById(Long id);

}

package com.jam.demo.service.impl;

import com.baomidou.mybatisplus.extension.service.impl.ServiceImpl;

import com.jam.demo.annotation.DataSourceSwitch;

import com.jam.demo.entity.UserInfo;

import com.jam.demo.enums.DataSourceType;

import com.jam.demo.mapper.UserInfoMapper;

import com.jam.demo.service.UserInfoService;

import lombok.extern.slf4j.Slf4j;

import org.springframework.stereotype.Service;

import org.springframework.transaction.support.TransactionTemplate;

import org.springframework.util.ObjectUtils;

/**
 * User info service implementation.
 * @author ken
 */
@Slf4j
@Service
public class UserInfoServiceImpl extends ServiceImpl<UserInfoMapper, UserInfo> implements UserInfoService {

    private final TransactionTemplate transactionTemplate;

    public UserInfoServiceImpl(TransactionTemplate transactionTemplate) {
        this.transactionTemplate = transactionTemplate;
    }

    @Override
    @DataSourceSwitch(DataSourceType.MASTER)
    public boolean createUser(UserInfo userInfo) {
        if (ObjectUtils.isEmpty(userInfo)) {
            throw new IllegalArgumentException("User info must not be empty");
        }
        // Run the insert in a programmatic transaction on the master data source.
        Boolean result = transactionTemplate.execute(status -> {
            try {
                return this.save(userInfo);
            } catch (Exception e) {
                status.setRollbackOnly();
                log.error("Failed to create user", e);
                return false;
            }
        });
        return Boolean.TRUE.equals(result);
    }

    @Override
    @DataSourceSwitch(DataSourceType.SLAVE)
    public UserInfo getUserById(Long id) {
        if (ObjectUtils.isEmpty(id) || id <= 0) {
            throw new IllegalArgumentException("Invalid user ID");
        }
        return this.getById(id);
    }
}

Controller layer

package com.jam.demo.controller;

import com.jam.demo.entity.UserInfo;

import com.jam.demo.service.UserInfoService;

import io.swagger.v3.oas.annotations.Operation;

import io.swagger.v3.oas.annotations.Parameter;

import io.swagger.v3.oas.annotations.tags.Tag;

import lombok.extern.slf4j.Slf4j;

import org.springframework.http.ResponseEntity;

import org.springframework.web.bind.annotation.*;

@Slf4j
@RestController
@RequestMapping("/user")
@Tag(name = "User management", description = "CRUD endpoints for user info")
public class UserInfoController {

    private final UserInfoService userInfoService;

    public UserInfoController(UserInfoService userInfoService) {
        this.userInfoService = userInfoService;
    }

    @PostMapping
    @Operation(summary = "Create user", description = "Creates a user; routed to the master")
    public ResponseEntity<Boolean> createUser(@RequestBody UserInfo userInfo) {
        return ResponseEntity.ok(userInfoService.createUser(userInfo));
    }

    @GetMapping("/{id}")
    @Operation(summary = "Get user", description = "Looks up a user by ID; routed to the replica")
    public ResponseEntity<UserInfo> getUserById(
            @Parameter(description = "User ID", required = true) @PathVariable Long id) {
        return ResponseEntity.ok(userInfoService.getUserById(id));
    }
}

Configuration file application.yml

spring:
  application:
    name: mysql-rw-split-demo
  datasource:
    master:
      driver-class-name: com.mysql.cj.jdbc.Driver
      url: jdbc:mysql://192.168.1.100:3306/demo_db?useUnicode=true&characterEncoding=utf8&useSSL=false&serverTimezone=Asia/Shanghai&allowPublicKeyRetrieval=true
      username: root
      password: Root@123456
      initial-size: 5
      max-active: 20
      min-idle: 5
      max-wait: 60000
    slave:
      driver-class-name: com.mysql.cj.jdbc.Driver
      url: jdbc:mysql://192.168.1.101:3306/demo_db?useUnicode=true&characterEncoding=utf8&useSSL=false&serverTimezone=Asia/Shanghai&allowPublicKeyRetrieval=true
      username: root
      password: Root@123456
      initial-size: 5
      max-active: 20
      min-idle: 5
      max-wait: 60000
springdoc:
  swagger-ui:
    path: /swagger-ui.html
    enabled: true
  api-docs:
    enabled: true
mybatis-plus:
  mapper-locations: classpath:mapper/*.xml
  type-aliases-package: com.jam.demo.entity
  configuration:
    map-underscore-to-camel-case: true
    log-impl: org.apache.ibatis.logging.stdout.StdOutImpl

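7. Summary

MySQL replication is, at heart, binlog shipping and replay, and understanding the full path is the basis for solving replication problems. Choosing among the three modes is a trade-off between consistency and performance: core services should prefer lossless semisynchronous replication, and workloads that need financial-grade strong consistency should use Group Replication.

The central tension behind replication lag is the gap between concurrent writes on the source and serial replay on the replica. The remedy is to avoid generating unnecessary binlog in the first place, raise replay parallelism as far as possible, and cut the resource cost of replay. In production, most lag problems (about 90% by rough estimate) can be resolved with three measures: enforcing primary keys, splitting large transactions, and enabling parallel replication.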

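This article is part of the Tencent Cloud self-media sync exposure program and is shared from a WeChat official account.

Originally published: 2026-04-09. For infringement concerns, contact cloudcommunity@tencent.com to request removal.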
