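Getting started: "Letting beginners explore the inside of AI through a workable, lightweight environment: nothing could be better"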

烟雨平生

Published 2026-04-14 18:36:45

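On my new machine, training a small Chinese GPT model that can answer questions is much faster: about 200 s on average. On a 2019 Intel Mac (2.6 GHz 6-core i7, 16 GB RAM) the same job took 1186.95 s (19.78 minutes) on average. The task is identical in both cases: 510 training samples, 90 validation samples, and 2000 training steps on the CPU.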
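To put the two timings side by side, the short calculation below converts the old run to minutes and derives the speedup. It uses only the two figures quoted above (1186.95 s and roughly 200 s); the names `OLD_SECONDS`, `NEW_SECONDS` and `speedup` are illustrative and not part of the project.

```python
# Timings quoted in the post; 200 s is the approximate average on the new machine.
OLD_SECONDS = 1186.95  # 2019 Intel Mac, 2.6 GHz 6-core i7, 16 GB
NEW_SECONDS = 200.0    # new machine


def speedup(old_s, new_s):
    """Return how many times faster the new run is than the old one."""
    return old_s / new_s


print(f"old run: {OLD_SECONDS / 60:.2f} min")
print(f"speedup: {speedup(OLD_SECONDS, NEW_SECONDS):.1f}x")
```

Since the 200 s figure is itself rounded, the ratio is only a rough indication.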

- Code and full documentation (GitHub, includes weights via LFS): https://github.com/helloworldtang/GPT_teacher-3.37M-cn

- Code mirror (Gitee, weights linked externally): https://gitee.com/baidumap/GPT_teacher-3.37M-cn

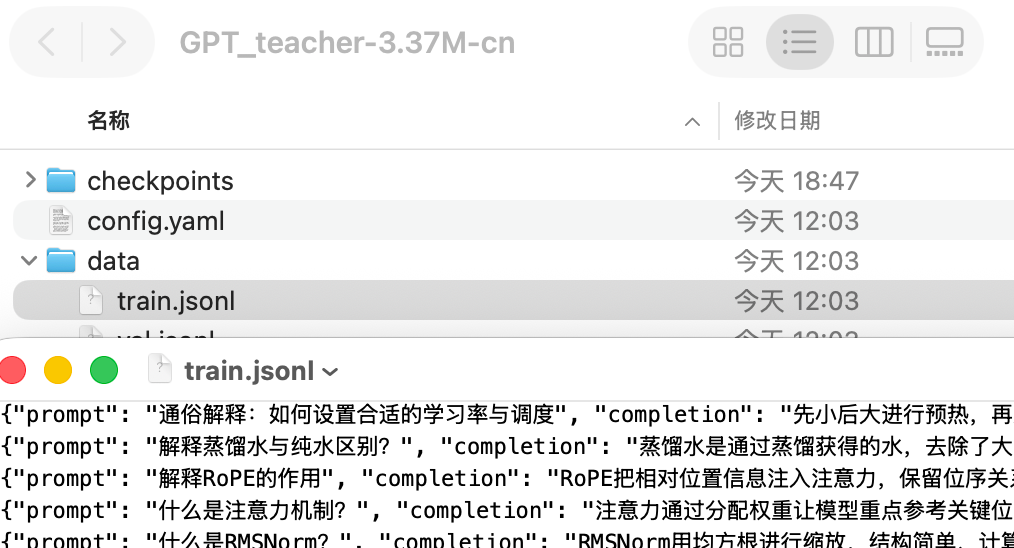

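Hand-written from scratch in 484 lines of code: train a 3.37M Chinese GPT model on an ordinary CPU in under 20 minutes. The answers are quite good, and feedback is welcome.

Why do this:

Train a Chinese GPT on an ordinary computer, then learn with concrete questions in mind, and you get up to speed quickly. That feels worthwhile: it deepened my own understanding and also reached other people who like AI.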

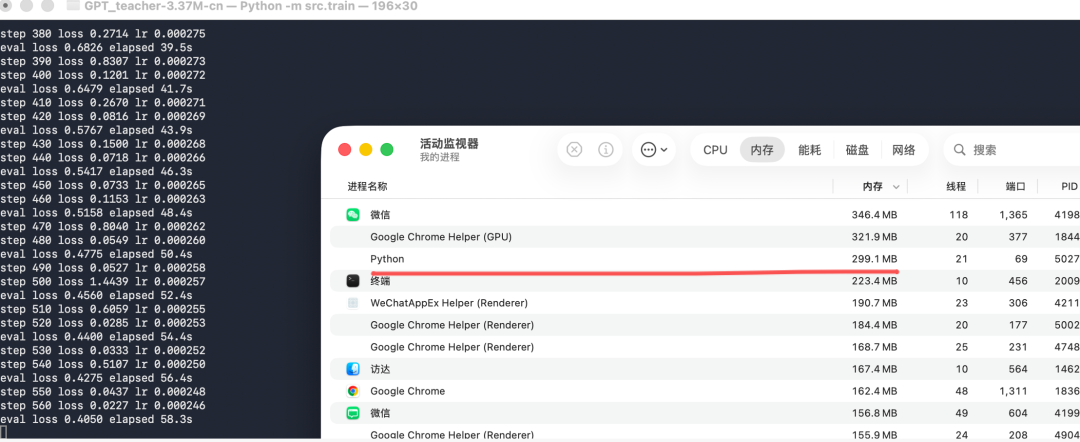

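First, a look at resource usage during training:

It is fairly CPU-hungry.

Memory usage was only 299 MB.

Now let's walk through training this Chinese GPT SLM from scratch once more: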

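Step 1: Download the project code.

Direct download, which is handy if git is not installed on your computer:

Click Download ZIP, then unzip the archive.

If git is installed:

% git clone https://gitee.com/baidumap/GPT_teacher-3.37M-cn.git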

Cloning into 'GPT_teacher-3.37M-cn'...

remote: Enumerating objects: 36, done.

remote: Counting objects: 100% (36/36), done.

remote: Compressing objects: 100% (35/35), done.

remote: Total 36 (delta 8), reused 0 (delta 0), pack-reused 0 (from 0)

Receiving objects: 100% (36/36), 180.85 KiB | 1.26 MiB/s, done.

Resolving deltas: 100% (8/8), done.

Why not https://github.com/helloworldtang/GPT_teacher-3.37M-cn? My network connection is poor and it keeps timing out.

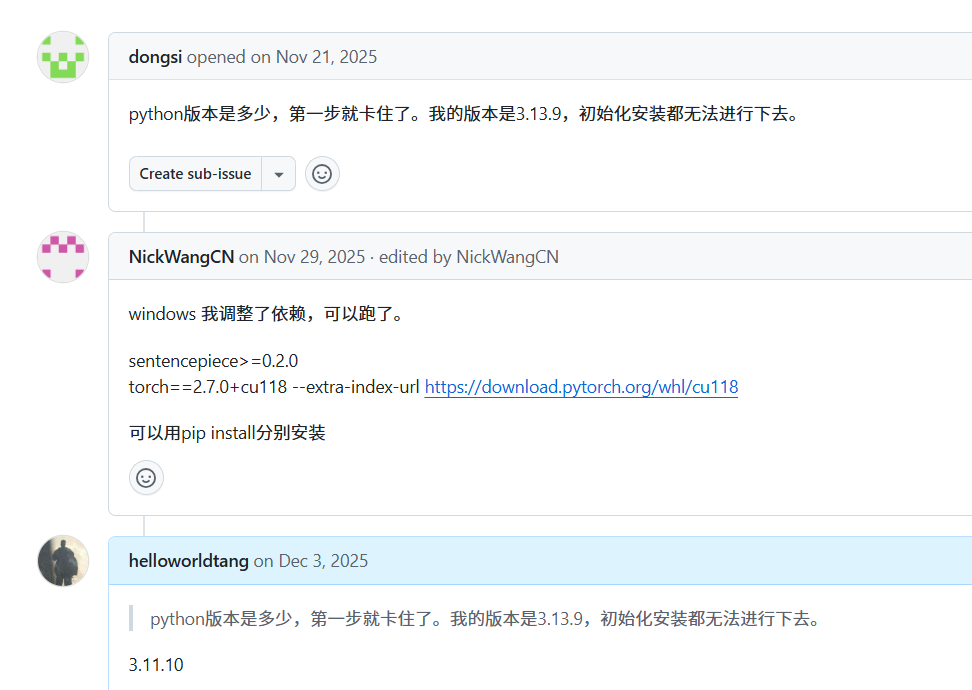

Step 2:

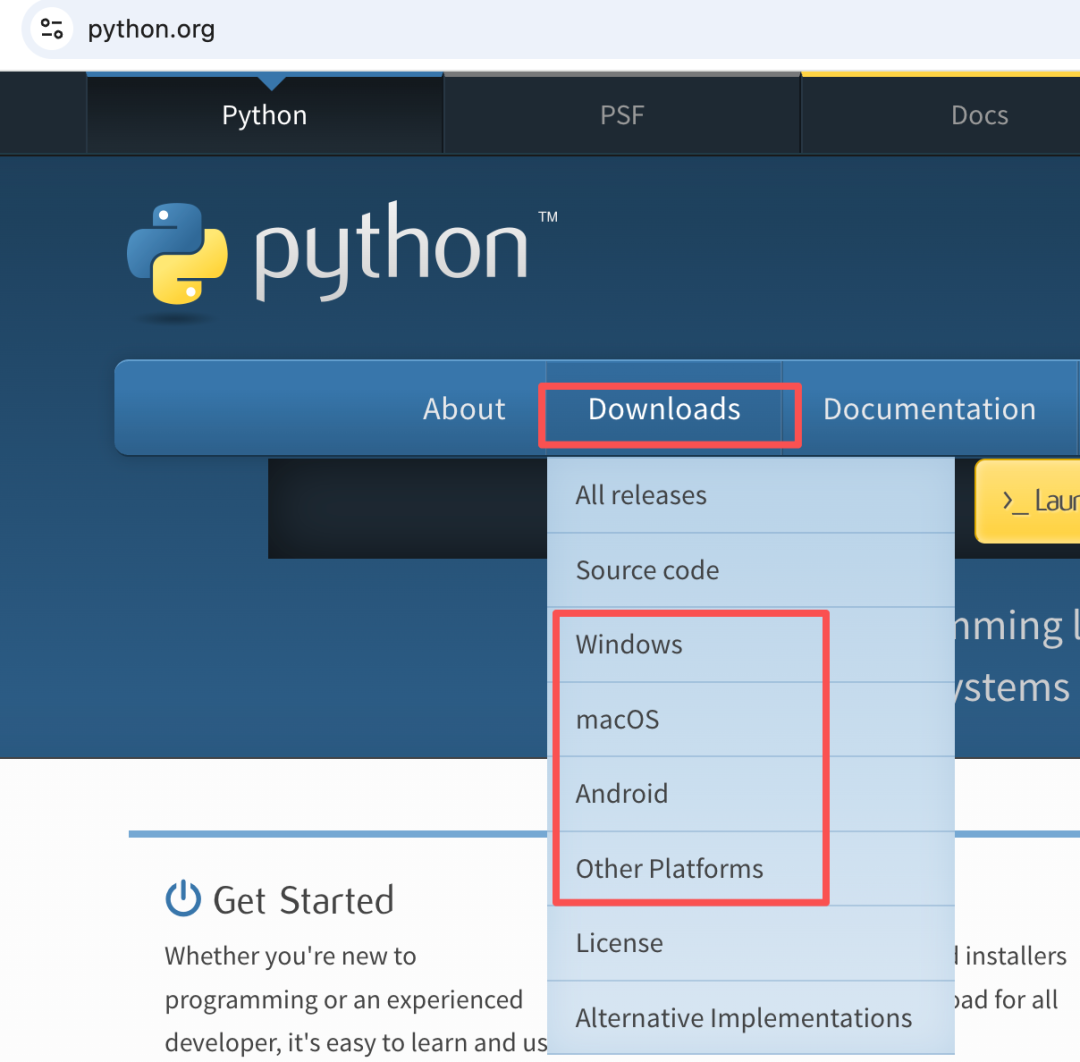

Install Python 3. Skip this step if it is already installed.

Python version: >3.10 and <3.12

https://www.python.org/

After downloading, run the installer. On Windows, select the option that adds Python to PATH.
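Before going further, it can help to confirm that the interpreter falls in the stated range. The sketch below is not part of the project; `is_supported` is a hypothetical helper, and it assumes the constraint is meant to accept any 3.10.x or 3.11.x release.

```python
import sys


def is_supported(version_info):
    """Return True for Python 3.10.x and 3.11.x.

    Assumption: '>3.10 and <3.12' is read as 'at least 3.10, below 3.12'.
    """
    major_minor = tuple(version_info[:2])
    return (3, 10) <= major_minor < (3, 12)


# Report on the interpreter that is running this script.
print("Python", sys.version.split()[0], "supported:", is_supported(sys.version_info))
```

Run it with the same `python3` command used in the following steps, so that the interpreter being checked is the one that will train the model.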

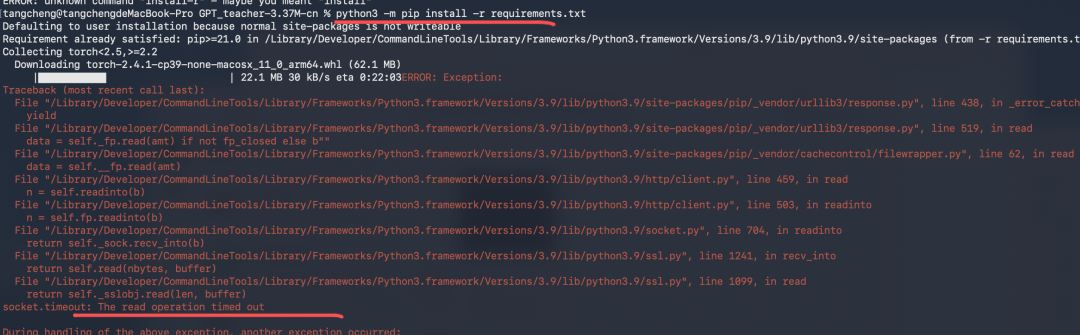

Step 3: Install the project dependencies

% python3 -m pip install -r requirements.txt -i https://pypi.tuna.tsinghua.edu.cn/simple

Why add "-i https://pypi.tuna.tsinghua.edu.cn/simple"?

Because on a poor network the default index times out:

socket.timeout: The read operation timed out

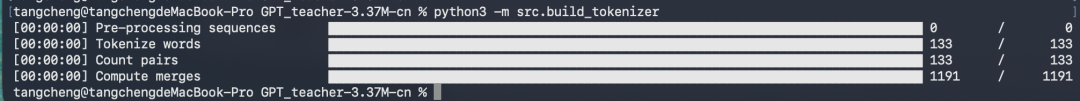

Step 4: Train the tokenizer vocabulary

% python3 -m src.build_tokenizer

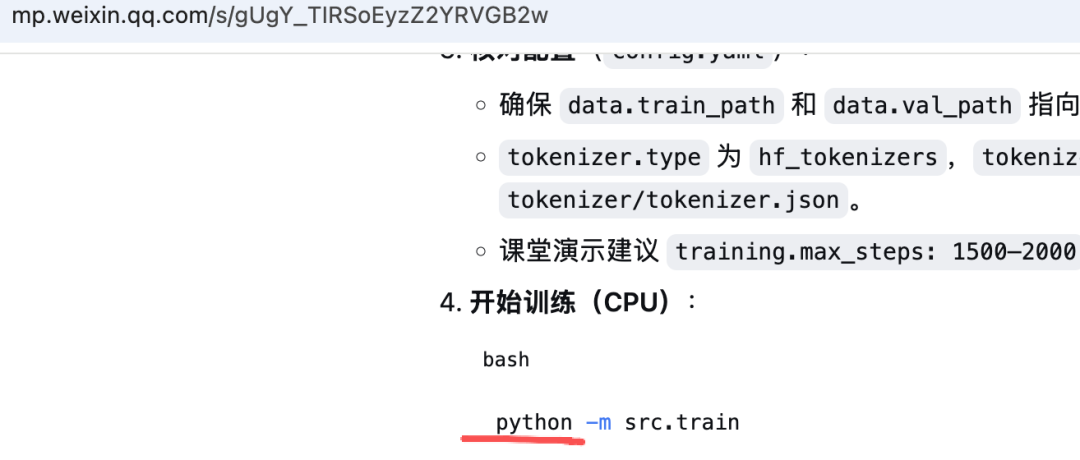

Step 5: Train the small Chinese GPT model

% python3 -m src.train

Output during the run:

step 10 loss 12.0353 lr 0.000300

step 20 loss 9.5207 lr 0.000300

eval loss 9.0448 elapsed 1.9s

step 30 loss 5.8622 lr 0.000300

step 40 loss 3.8224 lr 0.000300

eval loss 4.3166 elapsed 4.1s

step 50 loss 3.5052 lr 0.000300

step 60 loss 3.5594 lr 0.000299

eval loss 3.6508 elapsed 6.1s

step 70 loss 4.5472 lr 0.000299

step 80 loss 3.1220 lr 0.000299

eval loss 3.2309 elapsed 8.0s

step 90 loss 4.1795 lr 0.000299

step 100 loss 2.6944 lr 0.000298

eval loss 2.7809 elapsed 10.0s

step 110 loss 2.4675 lr 0.000298

step 120 loss 2.2288 lr 0.000298

eval loss 2.1898 elapsed 11.9s

step 130 loss 2.0625 lr 0.000297

step 140 loss 1.3677 lr 0.000297

eval loss 1.8527 elapsed 13.9s

step 150 loss 1.0398 lr 0.000296

step 160 loss 1.7732 lr 0.000296

eval loss 1.6736 elapsed 15.9s

step 170 loss 1.2226 lr 0.000295

step 180 loss 1.0359 lr 0.000294

eval loss 1.5064 elapsed 17.8s

step 190 loss 0.9215 lr 0.000294

step 200 loss 0.9284 lr 0.000293

eval loss 1.3911 elapsed 19.6s

step 210 loss 0.8487 lr 0.000292

step 220 loss 2.5107 lr 0.000291

eval loss 1.2488 elapsed 21.7s

step 230 loss 2.0541 lr 0.000291

step 240 loss 0.6507 lr 0.000290

eval loss 1.1482 elapsed 23.8s

step 250 loss 0.5971 lr 0.000289

step 260 loss 0.5533 lr 0.000288

eval loss 1.0726 elapsed 25.8s

step 270 loss 0.5624 lr 0.000287

step 280 loss 1.2556 lr 0.000286

eval loss 1.0184 elapsed 27.9s

step 290 loss 0.5032 lr 0.000285

step 300 loss 1.4470 lr 0.000284

eval loss 0.9649 elapsed 30.0s

step 310 loss 0.4949 lr 0.000283

step 320 loss 0.2853 lr 0.000282

eval loss 0.8938 elapsed 32.4s

step 330 loss 0.4014 lr 0.000281

step 340 loss 3.0781 lr 0.000280

eval loss 0.8274 elapsed 34.6s

step 350 loss 0.3866 lr 0.000278

step 360 loss 0.3597 lr 0.000277

eval loss 0.7545 elapsed 37.2s

step 370 loss 0.2462 lr 0.000276

step 380 loss 0.2714 lr 0.000275

eval loss 0.6826 elapsed 39.5s

step 390 loss 0.8307 lr 0.000273

step 400 loss 0.1201 lr 0.000272

eval loss 0.6479 elapsed 41.7s

step 410 loss 0.2670 lr 0.000271

step 420 loss 0.0816 lr 0.000269

eval loss 0.5767 elapsed 43.9s

step 430 loss 0.1500 lr 0.000268

step 440 loss 0.0718 lr 0.000266

eval loss 0.5417 elapsed 46.3s

step 450 loss 0.0733 lr 0.000265

step 460 loss 0.1153 lr 0.000263

eval loss 0.5158 elapsed 48.4s

step 470 loss 0.8040 lr 0.000262

step 480 loss 0.0549 lr 0.000260

eval loss 0.4775 elapsed 50.4s

step 490 loss 0.0527 lr 0.000258

step 500 loss 1.4439 lr 0.000257

eval loss 0.4560 elapsed 52.4s

step 510 loss 0.6059 lr 0.000255

step 520 loss 0.0285 lr 0.000253

eval loss 0.4400 elapsed 54.4s

step 530 loss 0.0333 lr 0.000252

step 540 loss 0.5107 lr 0.000250

eval loss 0.4275 elapsed 56.4s

step 550 loss 0.0437 lr 0.000248

step 560 loss 0.0227 lr 0.000246

eval loss 0.4050 elapsed 58.3s

step 570 loss 0.0288 lr 0.000244

step 580 loss 0.2812 lr 0.000243

eval loss 0.3982 elapsed 60.7s

step 590 loss 0.0126 lr 0.000241

step 600 loss 0.8658 lr 0.000239

eval loss 0.3924 elapsed 63.4s

step 610 loss 0.1584 lr 0.000237

step 620 loss 0.2807 lr 0.000235

eval loss 0.3729 elapsed 65.7s

step 630 loss 0.0235 lr 0.000233

step 640 loss 1.1161 lr 0.000231

eval loss 0.3604 elapsed 67.8s

step 650 loss 0.0143 lr 0.000229

step 660 loss 0.0151 lr 0.000227

eval loss 0.3478 elapsed 70.0s

step 670 loss 0.0171 lr 0.000225

step 680 loss 0.0137 lr 0.000223

eval loss 0.3511 elapsed 72.3s

step 690 loss 0.3127 lr 0.000221

step 700 loss 0.0096 lr 0.000219

eval loss 0.3422 elapsed 74.4s

step 710 loss 0.0126 lr 0.000217

step 720 loss 0.2959 lr 0.000215

eval loss 0.3358 elapsed 76.4s

step 730 loss 0.0122 lr 0.000212

step 740 loss 0.0106 lr 0.000210

eval loss 0.3169 elapsed 78.4s

step 750 loss 0.5252 lr 0.000208

step 760 loss 0.0179 lr 0.000206

eval loss 0.3274 elapsed 80.4s

step 770 loss 0.2152 lr 0.000204

step 780 loss 0.0107 lr 0.000201

eval loss 0.3032 elapsed 82.4s

step 790 loss 0.0103 lr 0.000199

step 800 loss 0.0091 lr 0.000197

eval loss 0.2986 elapsed 84.5s

step 810 loss 0.0190 lr 0.000195

step 820 loss 0.0098 lr 0.000193

eval loss 0.2857 elapsed 86.4s

step 830 loss 0.0086 lr 0.000190

step 840 loss 0.0111 lr 0.000188

eval loss 0.2777 elapsed 88.3s

step 850 loss 0.0094 lr 0.000186

step 860 loss 0.0106 lr 0.000183

eval loss 0.2745 elapsed 90.3s

step 870 loss 0.5308 lr 0.000181

step 880 loss 0.0119 lr 0.000179

eval loss 0.2674 elapsed 92.5s

step 890 loss 0.0089 lr 0.000176

step 900 loss 0.8492 lr 0.000174

eval loss 0.2572 elapsed 94.3s

step 910 loss 0.0075 lr 0.000172

step 920 loss 0.1075 lr 0.000169

eval loss 0.2564 elapsed 96.2s

step 930 loss 0.1071 lr 0.000167

step 940 loss 0.0992 lr 0.000165

eval loss 0.2433 elapsed 98.1s

step 950 loss 0.2893 lr 0.000162

step 960 loss 0.0066 lr 0.000160

eval loss 0.2387 elapsed 100.0s

step 970 loss 0.0121 lr 0.000158

step 980 loss 0.0161 lr 0.000155

eval loss 0.2432 elapsed 101.9s

step 990 loss 0.0053 lr 0.000153

step 1000 loss 0.0069 lr 0.000151

eval loss 0.2295 elapsed 103.7s

step 1010 loss 0.5471 lr 0.000148

step 1020 loss 1.6835 lr 0.000146

eval loss 0.2236 elapsed 105.6s

step 1030 loss 0.0051 lr 0.000144

step 1040 loss 0.0075 lr 0.000141

eval loss 0.2184 elapsed 107.5s

step 1050 loss 0.0060 lr 0.000139

step 1060 loss 0.0075 lr 0.000136

eval loss 0.2122 elapsed 109.4s

step 1070 loss 0.0049 lr 0.000134

step 1080 loss 0.0060 lr 0.000132

eval loss 0.2113 elapsed 111.2s

step 1090 loss 0.0061 lr 0.000129

step 1100 loss 0.0788 lr 0.000127

eval loss 0.2041 elapsed 113.1s

step 1110 loss 0.0045 lr 0.000125

step 1120 loss 0.0042 lr 0.000122

eval loss 0.2089 elapsed 115.1s

step 1130 loss 0.4997 lr 0.000120

step 1140 loss 0.1433 lr 0.000118

eval loss 0.1985 elapsed 117.0s

step 1150 loss 0.0071 lr 0.000115

step 1160 loss 0.0047 lr 0.000113

eval loss 0.1947 elapsed 118.9s

step 1170 loss 0.0067 lr 0.000111

step 1180 loss 0.0062 lr 0.000109

eval loss 0.1868 elapsed 120.8s

step 1190 loss 0.0048 lr 0.000106

step 1200 loss 0.0224 lr 0.000104

eval loss 0.1968 elapsed 122.7s

step 1210 loss 0.0058 lr 0.000102

step 1220 loss 0.3892 lr 0.000100

eval loss 0.1877 elapsed 124.7s

step 1230 loss 0.0063 lr 0.000097

step 1240 loss 0.0062 lr 0.000095

eval loss 0.1849 elapsed 126.7s

step 1250 loss 0.0059 lr 0.000093

step 1260 loss 0.4280 lr 0.000091

eval loss 0.1794 elapsed 128.6s

step 1270 loss 0.0357 lr 0.000089

step 1280 loss 0.0046 lr 0.000087

eval loss 0.1766 elapsed 130.5s

step 1290 loss 1.4890 lr 0.000084

step 1300 loss 0.0070 lr 0.000082

eval loss 0.1749 elapsed 132.3s

step 1310 loss 0.0041 lr 0.000080

step 1320 loss 0.0059 lr 0.000078

eval loss 0.1805 elapsed 134.2s

step 1330 loss 0.0040 lr 0.000076

step 1340 loss 0.4127 lr 0.000074

eval loss 0.1704 elapsed 136.2s

step 1350 loss 0.0044 lr 0.000072

step 1360 loss 0.0040 lr 0.000070

eval loss 0.1712 elapsed 138.3s

step 1370 loss 0.1169 lr 0.000068

step 1380 loss 0.3378 lr 0.000066

eval loss 0.1662 elapsed 140.4s

step 1390 loss 0.0061 lr 0.000064

step 1400 loss 0.0038 lr 0.000062

eval loss 0.1637 elapsed 142.5s

step 1410 loss 0.0388 lr 0.000060

step 1420 loss 0.0066 lr 0.000058

eval loss 0.1613 elapsed 144.9s

step 1430 loss 0.0304 lr 0.000056

step 1440 loss 0.6053 lr 0.000055

eval loss 0.1584 elapsed 147.1s

step 1450 loss 0.0052 lr 0.000053

step 1460 loss 0.0037 lr 0.000051

eval loss 0.1549 elapsed 149.0s

step 1470 loss 0.0040 lr 0.000049

step 1480 loss 0.0038 lr 0.000048

eval loss 0.1538 elapsed 151.0s

step 1490 loss 0.0102 lr 0.000046

step 1500 loss 0.0052 lr 0.000044

eval loss 0.1534 elapsed 153.0s

step 1510 loss 0.0053 lr 0.000042

step 1520 loss 0.0068 lr 0.000041

eval loss 0.1533 elapsed 154.9s

step 1530 loss 0.0042 lr 0.000039

step 1540 loss 0.0053 lr 0.000038

eval loss 0.1500 elapsed 157.0s

step 1550 loss 0.0037 lr 0.000036

step 1560 loss 0.2401 lr 0.000035

eval loss 0.1493 elapsed 159.1s

step 1570 loss 0.0051 lr 0.000033

step 1580 loss 0.0083 lr 0.000032

eval loss 0.1500 elapsed 161.4s

step 1590 loss 0.0033 lr 0.000030

step 1600 loss 0.0068 lr 0.000029

eval loss 0.1474 elapsed 163.5s

step 1610 loss 0.3486 lr 0.000027

step 1620 loss 0.0035 lr 0.000026

eval loss 0.1465 elapsed 165.6s

step 1630 loss 0.0052 lr 0.000025

step 1640 loss 0.0054 lr 0.000023

eval loss 0.1467 elapsed 167.6s

step 1650 loss 0.0037 lr 0.000022

step 1660 loss 0.0035 lr 0.000021

eval loss 0.1448 elapsed 169.5s

step 1670 loss 0.0036 lr 0.000020

step 1680 loss 0.0168 lr 0.000019

eval loss 0.1447 elapsed 171.5s

step 1690 loss 0.0449 lr 0.000018

step 1700 loss 0.0072 lr 0.000016

eval loss 0.1427 elapsed 173.7s

step 1710 loss 0.0035 lr 0.000015

step 1720 loss 0.0035 lr 0.000014

eval loss 0.1428 elapsed 176.0s

step 1730 loss 0.0316 lr 0.000013

step 1740 loss 0.0212 lr 0.000012

eval loss 0.1418 elapsed 178.0s

step 1750 loss 0.2938 lr 0.000011

step 1760 loss 0.0247 lr 0.000011

eval loss 0.1420 elapsed 179.8s

step 1770 loss 0.0037 lr 0.000010

step 1780 loss 0.0052 lr 0.000009

eval loss 0.1417 elapsed 181.6s

step 1790 loss 0.0049 lr 0.000008

step 1800 loss 0.0032 lr 0.000007

eval loss 0.1411 elapsed 183.5s

step 1810 loss 0.0034 lr 0.000007

step 1820 loss 0.0125 lr 0.000006

eval loss 0.1412 elapsed 185.4s

step 1830 loss 0.0479 lr 0.000005

step 1840 loss 0.0037 lr 0.000005

eval loss 0.1412 elapsed 187.2s

step 1850 loss 0.0033 lr 0.000004

step 1860 loss 0.0035 lr 0.000004

eval loss 0.1407 elapsed 189.1s

step 1870 loss 0.0489 lr 0.000003

step 1880 loss 0.2369 lr 0.000003

eval loss 0.1407 elapsed 190.9s

step 1890 loss 0.0257 lr 0.000002

step 1900 loss 0.0038 lr 0.000002

eval loss 0.1406 elapsed 192.8s

step 1910 loss 0.0037 lr 0.000002

step 1920 loss 0.0036 lr 0.000001

eval loss 0.1406 elapsed 194.6s

step 1930 loss 0.0054 lr 0.000001

step 1940 loss 0.0050 lr 0.000001

eval loss 0.1405 elapsed 196.4s

step 1950 loss 0.0325 lr 0.000000

step 1960 loss 0.0054 lr 0.000000

eval loss 0.1405 elapsed 198.3s

step 1970 loss 0.0034 lr 0.000000

step 1980 loss 0.0032 lr 0.000000

eval loss 0.1405 elapsed 200.1s

step 1990 loss 0.0038 lr 0.000000

step 2000 loss 0.0032 lr 0.000000

eval loss 0.1405 elapsed 201.9s

Total time: 201.9 s
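A log this long is easier to read once it is parsed. The sketch below is not part of the project: it assumes only the two line formats visible in the output above (`step N loss X lr Y` and `eval loss X elapsed Ts`), and `parse_log` is a hypothetical helper. `SAMPLE` is a short excerpt copied from the log; in practice you would pass the full captured output instead.

```python
import re

# Patterns for the two line formats that appear in the training output.
STEP_RE = re.compile(r"step (\d+) loss ([\d.]+) lr ([\d.]+)")
EVAL_RE = re.compile(r"eval loss ([\d.]+) elapsed ([\d.]+)s")


def parse_log(text):
    """Split the log into (step, loss, lr) rows and (step, eval_loss, seconds) rows."""
    train, evals = [], []
    last_step = None  # an eval line is attributed to the most recent step line
    for line in text.splitlines():
        m = STEP_RE.search(line)
        if m:
            last_step = int(m.group(1))
            train.append((last_step, float(m.group(2)), float(m.group(3))))
            continue
        m = EVAL_RE.search(line)
        if m:
            evals.append((last_step, float(m.group(1)), float(m.group(2))))
    return train, evals


SAMPLE = """\
step 10 loss 12.0353 lr 0.000300
step 20 loss 9.5207 lr 0.000300
eval loss 9.0448 elapsed 1.9s
step 1990 loss 0.0038 lr 0.000000
step 2000 loss 0.0032 lr 0.000000
eval loss 0.1405 elapsed 201.9s
"""

train, evals = parse_log(SAMPLE)
best = min(evals, key=lambda row: row[1])
print(f"parsed {len(train)} train rows and {len(evals)} eval rows")
print(f"lowest eval loss {best[1]} at step {best[0]} after {best[2]}s")
```

The per-step training loss is noisy because it comes from a single batch, so the eval rows are the better series to plot or compare across machines.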

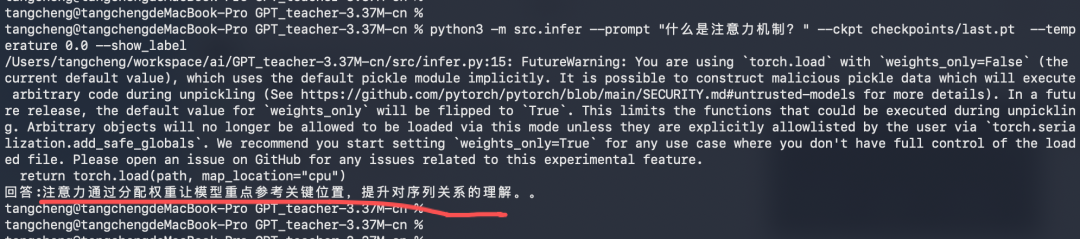
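The `lr` column in the log starts at 0.000300 and falls smoothly to 0.000000 at step 2000. That shape looks consistent with a cosine decay, although the post does not say which schedule `src.train` uses, so treat the following as an inference from the logged numbers rather than the project's code. `cosine_lr` is a hypothetical helper; the base rate and step count are taken from the log and the post.

```python
import math

BASE_LR = 3e-4      # first logged learning rate (step 10)
TOTAL_STEPS = 2000  # number of training steps stated in the post


def cosine_lr(step, base_lr=BASE_LR, total_steps=TOTAL_STEPS):
    """Cosine decay from base_lr at step 0 down to 0 at total_steps."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))


for step in (10, 500, 1000, 1500, 2000):
    print(f"step {step:4d} lr {cosine_lr(step):.6f}")
```

The values agree with the log to within about 0.000001. The small remaining offset (for example at step 1000) could come from a short warmup or a different step offset, which cannot be determined from the log alone.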

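Step 6: Check how well the trained small Chinese GPT model answers

% python3 -m src.infer --prompt "什么是注意力机制?" --ckpt checkpoints/last.pt --temperature 0.0 --show_label

Output (the prompt asks "What is the attention mechanism?"):

The model's answer is relevant to the question and valid.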

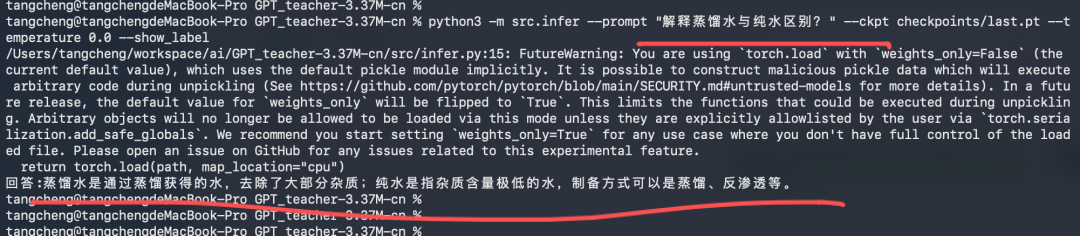

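% python3 -m src.infer --prompt "解释蒸馏水与纯水区别?" --ckpt checkpoints/last.pt --temperature 0.0 --show_label

Output (the prompt asks "Explain the difference between distilled water and pure water?"):

The model's answer is relevant to the question and valid.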
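Both commands pass `--temperature 0.0`. How `src.infer` treats that value is not shown in this post; a common convention is that a temperature of zero means greedy decoding, that is, always choosing the highest-scoring token, which makes the answer repeatable. The sketch below illustrates that convention in plain Python. `sample_token` is a hypothetical helper and not the project's implementation.

```python
import math
import random


def sample_token(logits, temperature, rng=random):
    """Pick a token index from raw scores.

    temperature <= 0 is treated as greedy decoding (argmax), an assumed convention.
    Otherwise the scores are divided by the temperature and sampled via softmax.
    """
    if temperature <= 0.0:
        return max(range(len(logits)), key=lambda i: logits[i])
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(x - peak) for x in scaled]
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]


logits = [1.0, 3.0, 2.0]
print("greedy pick:", sample_token(logits, 0.0))
print("sampled pick:", sample_token(logits, 1.0, random.Random(0)))
```

With a positive temperature the same prompt can produce different answers from run to run, which makes a quick before-and-after comparison harder.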

Q&A:

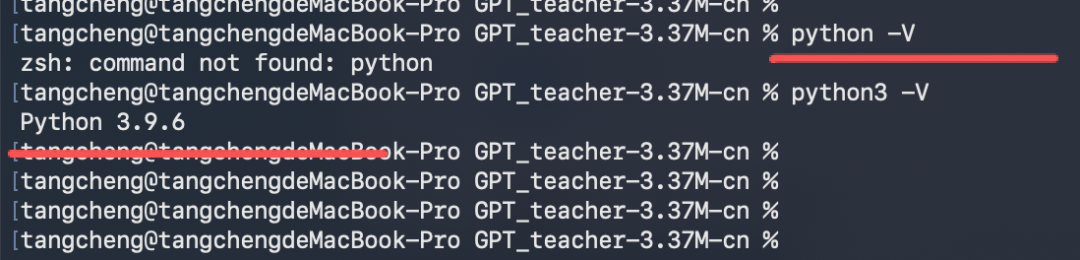

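1. Why do the commands above use python3, when earlier articles used python?

Because I recently resigned and have returned the computer my company lent me.

The new computer I bought currently only has python3: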

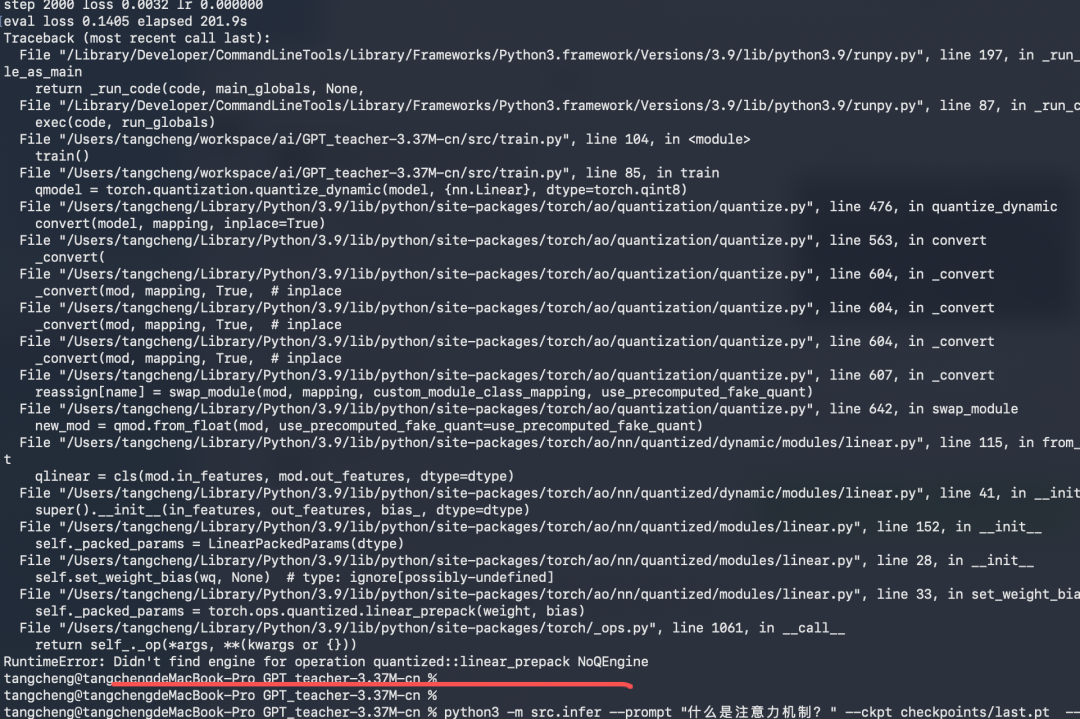

2. Quantizing the trained model raised an error

RuntimeError: Didn't find engine for operation quantized::linear_prepack NoQEngine

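The core cause of this error is:

- PyTorch's quantization features depend on a specific quantization engine (such as fbgemm or qnnpack)

- No quantization engine is correctly configured or enabled in your environment, so the operation that prepacks the parameters of a quantized linear layer cannot run.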

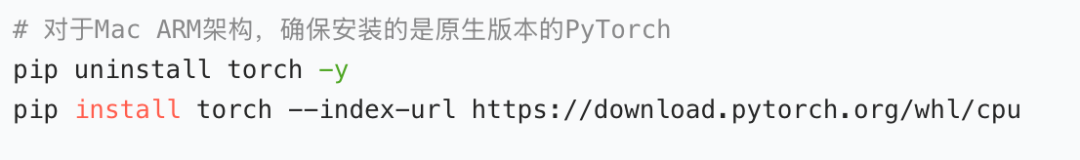

- This is especially common on macOS, because the default fbgemm engine is mainly optimized for x86, while ARM-based Macs (M-series chips) need qnnpack

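What to do?

Support for this scenario needs to be added.

Additional dependencies also need to be installed:

This does not affect our understanding of training, so ignore it for now.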
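For readers who want to experiment anyway, PyTorch exposes the available engines as `torch.backends.quantized.supported_engines` and the active one as `torch.backends.quantized.engine`. The sketch below chooses an engine from that list before any quantization call. `pick_engine` and its preference order are assumptions for illustration, and whether this resolves the error in this project has not been verified here.

```python
import platform


def pick_engine(supported, machine):
    """Choose a quantization engine name from the ones this PyTorch build supports.

    Assumed preference: qnnpack first on ARM machines, fbgemm first elsewhere.
    Returns None when neither engine is available.
    """
    is_arm = machine.lower() in ("arm64", "aarch64")
    preferred = ("qnnpack", "fbgemm") if is_arm else ("fbgemm", "qnnpack")
    for name in preferred:
        if name in supported:
            return name
    return None


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed; nothing to configure.")
    else:
        supported = torch.backends.quantized.supported_engines
        engine = pick_engine(supported, platform.machine())
        if engine is None:
            print("No usable quantization engine in this build:", supported)
        else:
            # Must be set before calling any quantization function.
            torch.backends.quantized.engine = engine
            print("Using quantization engine:", engine)
```

If the supported list contains neither engine, the installed PyTorch build has no usable backend and a different build would be needed.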

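3. Why are the same two questions used every time the training result is checked?

"什么是注意力机制?" ("What is the attention mechanism?")

"解释蒸馏水与纯水区别?" ("Explain the difference between distilled water and pure water?")

Answer: because this is all the knowledge the Chinese GPT was taught.

The training dataset consists mainly of these two topics. Take a look at it if you are interested.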

Shared from a WeChat official account as part of the Tencent Cloud self-media syndication program.

Originally published: 2026-01-09
